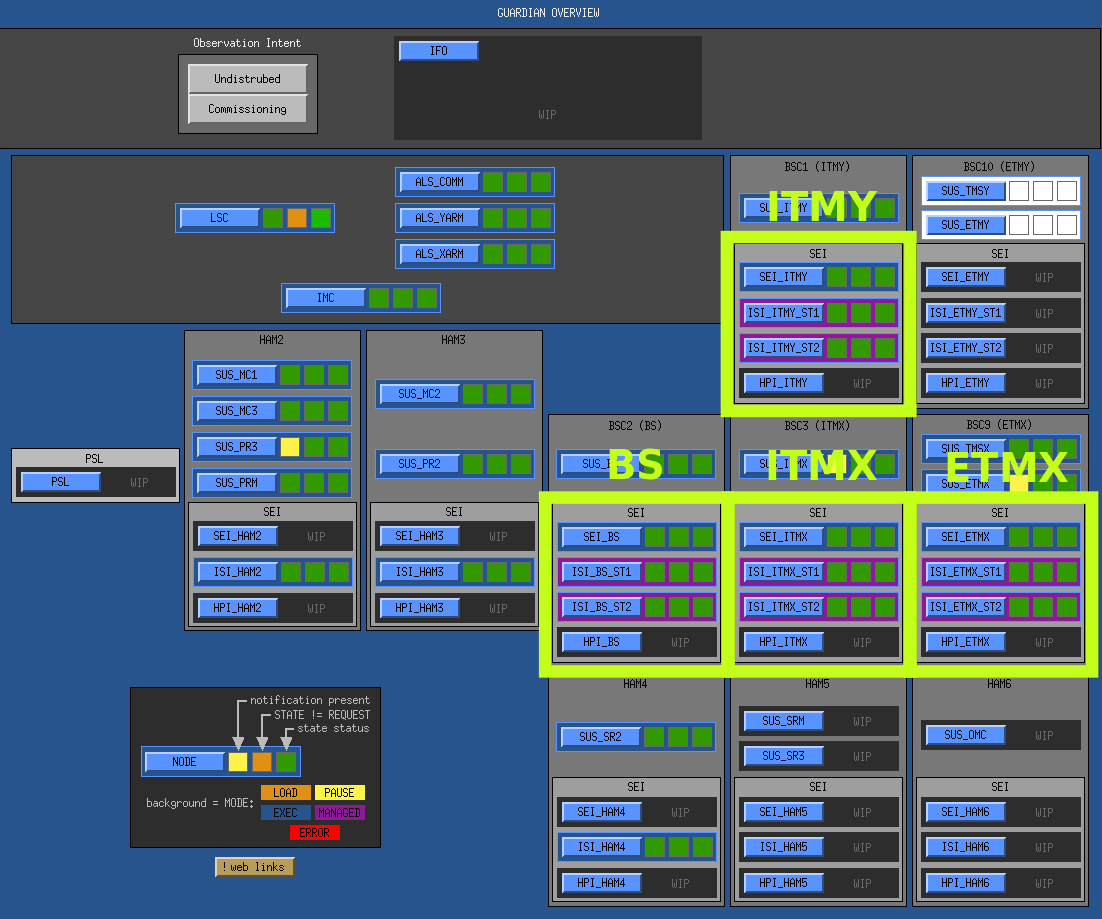

BSC ISI guardians now running for BS, ITMX, ITMY, ETMX

After a lot of struggle with the BSC ISIs this week, we finally have all commissioned BSCs under guardian control.

The BSC seismic stack is one of the more complicated systems we've deployed. It currently consists of three nodes in a "managed" configuration: two separate nodes, one for each of the two ISI stages (e.g. ISI_ITMY_ST1 and ISI_ITMY_ST2), and a "chamber manager" (SEI_ITMY) that coordinates the activity of the two subordinate ISI stages. The USERAPPS locations of the top-level modules (and the primary library each one loads) are:

- USERAPPS/isi/common/guardian/SEI_ITMY.py (USERAPPS/isi/common/guardian/isiguardianlib/BSC_MANAGER)

- USERAPPS/isi/common/guardian/ISI_ITMY_ST1.py (USERAPPS/isi/common/guardian/isiguardianlib/ISI_STAGE)

- USERAPPS/isi/common/guardian/ISI_ITMY_ST2.py (USERAPPS/isi/common/guardian/isiguardianlib/ISI_STAGE)

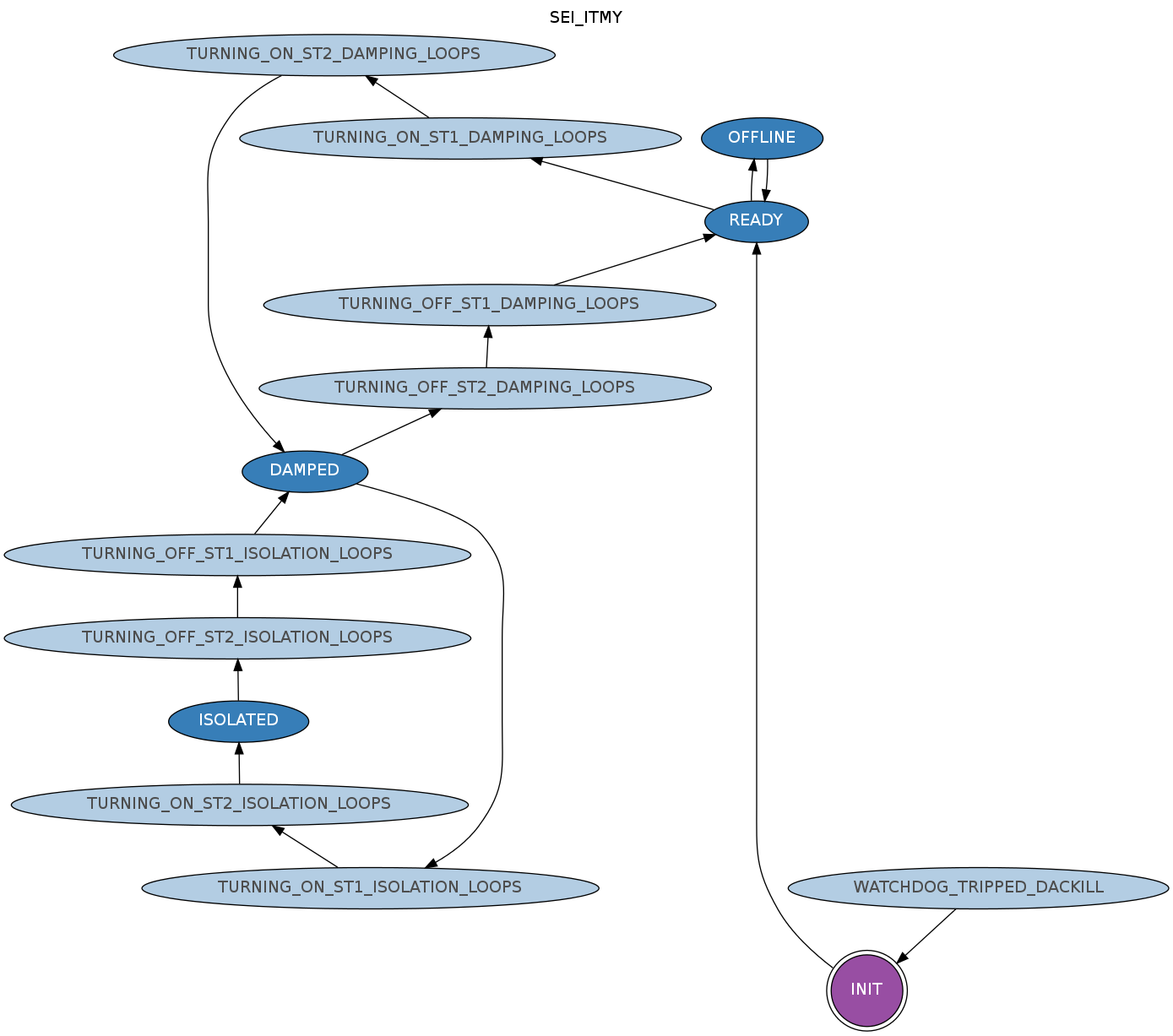

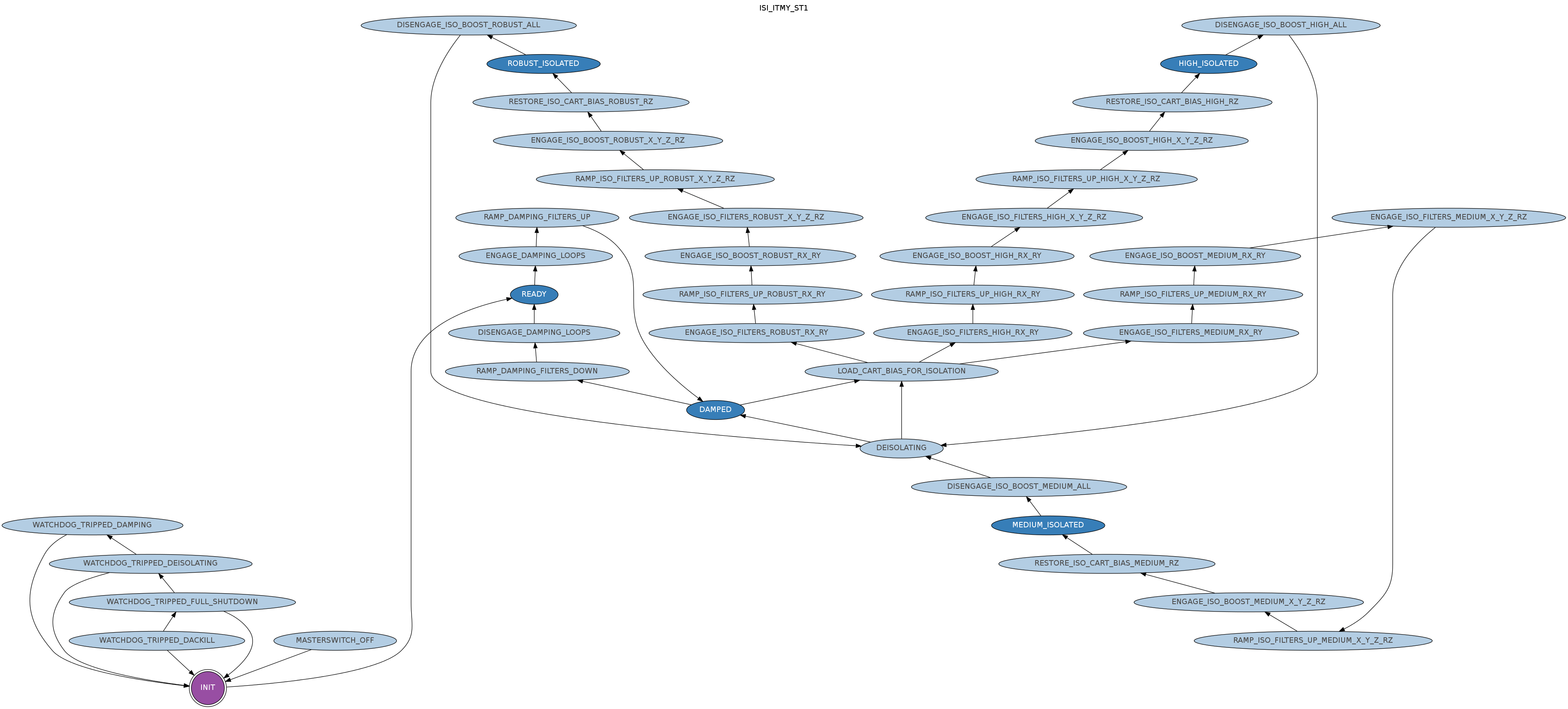

All single ISI stages share exactly the same code, for each BSC stage as well as for the single HAM stage. The system graphs for the SEI manager and ISI stage 1 are shown above.


The manager (first graph above) has three main requestable states: READY, DAMPED, and ISOLATED. The READY state puts both ISI stages in READY, the DAMPED state puts them both in DAMPED, and the ISOLATED state puts both into the isolation levels specified in the manager system description module (USERAPPS/isi/common/guardian/SEI_*.py). At the moment all systems are using their default configuration, which is to operate the ISI stages in the HIGH_ISOLATED state, corresponding to "lvl3" from the old "command" scripts. Due to a guardian core issue this can't currently be changed on a per-BSC basis, but I'm working on it.
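To make the manager-to-stage mapping concrete, here is a minimal sketch of how a manager request translates into requests for the two stage nodes. This is not the BSC_MANAGER code; the function and table names are hypothetical, and the isolation table simply hard-codes the current default (HIGH_ISOLATED for both stages).

```python
# Hypothetical sketch of the SEI manager request mapping (not the isiguardianlib code).
MANAGER_STATES = ("READY", "DAMPED", "ISOLATED")

# Current default isolation level for each stage ("lvl3" in the old command scripts).
DEFAULT_ISOLATION = {"ST1": "HIGH_ISOLATED", "ST2": "HIGH_ISOLATED"}


def subordinate_requests(manager_request, chamber="ITMY"):
    """Return the request the manager would issue to each ISI stage node."""
    if manager_request not in MANAGER_STATES:
        raise ValueError(f"unknown manager request: {manager_request}")
    requests = {}
    for stage in ("ST1", "ST2"):
        node = f"ISI_{chamber}_{stage}"
        if manager_request == "ISOLATED":
            # ISOLATED maps to each stage's configured isolation level.
            requests[node] = DEFAULT_ISOLATION[stage]
        else:
            # READY and DAMPED are passed straight through to both stages.
            requests[node] = manager_request
    return requests
```

For example, `subordinate_requests("ISOLATED")` gives HIGH_ISOLATED for both ISI_ITMY_ST1 and ISI_ITMY_ST2.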

The degrees of freedom for which to restore cart bias offsets can also be specified on a per-stage basis. Note that the ISI_ITMY_ST1 graph above has "RESTORE_ISO_CART_BIAS_*_RZ" states but no corresponding states for the other degrees of freedom (X, Y, Z, RX, RY). This indicates that only the RZ target cart bias offset is restored for this stage.
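A minimal sketch of the per-stage setting and of reading it back off a graph, assuming the restore states follow the "RESTORE_ISO_CART_BIAS_*_<DOF>" naming seen above. The config dict, the helper, and the example state name in the usage note are hypothetical; the real configuration lives in the USERAPPS guardian modules.

```python
import fnmatch

ALL_DOFS = ("X", "Y", "Z", "RX", "RY", "RZ")

# Hypothetical per-stage setting: DoFs whose target cart bias is restored.
RESTORE_CART_BIAS_DOFS = {
    "ISI_ITMY_ST1": ("RZ",),
    "ISI_ITMY_ST2": ("RZ",),
}


def restored_dofs_from_graph(state_names):
    """Infer restored DoFs from state names matching RESTORE_ISO_CART_BIAS_*_<DOF>."""
    restored = []
    for dof in ALL_DOFS:
        # "*_X" cannot match a name ending in "_RX", so X/RX etc. stay distinct.
        pattern = f"RESTORE_ISO_CART_BIAS_*_{dof}"
        if any(fnmatch.fnmatchcase(name, pattern) for name in state_names):
            restored.append(dof)
    return restored
```

With a graph containing a (hypothetically named) "RESTORE_ISO_CART_BIAS_HIGH_RZ" state and no other restore states, the helper returns `["RZ"]`, matching the ISI_ITMY_ST1 graph.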

Notes about the BSC isolation procedure

There are a couple of important things to note about the current isolation procedure:

We had a lot of trouble dealing with the T240s on stage 1. Their extreme sensitivity to pitch causes them to saturate if a large pitch cart bias offset is applied to the stage. We therefore spent a lot of time trying to figure out how to gracefully bring them in and out of the loop, by reducing their gain and/or changing the blend filters during the isolation procedure. All of these approaches have problems, though:

- switching the T240 analog gain does not synchronously switch the corresponding digital compensation filters. This means that any attempt to change the T240 gains will cause the T240 watchdogs to trip.

- there were strange problems with changing the blend filters with the isolation loops closed. The "SWITCH_ALL" buttons cause large glitches in the isolation loops. Clicking each DoF manually doesn't cause glitches, but clicking them too fast causes various things to saturate. Basically you have to switch each DoF manually and independently, with a significant delay between DoFs.
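The manual blend switching that does work (one DoF at a time, with a pause in between) could be scripted roughly as below. This is only a sketch: `set_blend` is a hypothetical callback standing in for whatever actually performs the per-DoF switch, and the 10 s delay is a placeholder, not a measured settling time. The current guardian procedure avoids blend switching entirely.

```python
import time

DOFS = ("X", "Y", "Z", "RX", "RY", "RZ")


def switch_blends_sequentially(set_blend, blend_name, dofs=DOFS, delay_s=10.0, sleep=time.sleep):
    """Switch the blend filter one DoF at a time, pausing between DoFs.

    set_blend(dof, blend_name) is a hypothetical callback that performs the
    switch for a single DoF (instead of using the SWITCH_ALL button).
    """
    for i, dof in enumerate(dofs):
        set_blend(dof, blend_name)
        if i < len(dofs) - 1:
            # Let the isolation loops settle before touching the next DoF.
            sleep(delay_s)
```

Passing `sleep` as a parameter lets the sequence be dry-run without waiting.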

This makes things very difficult if we need to hold pitch offsets on any of the ISIs. It looks like we need to fix the synchronous switching of the T240 compensation filters before we can handle pitch cart bias offsets.
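A toy illustration of why the compensation has to switch on the same sample as the analog gain. It treats both the analog gain and the digital compensation as pure gains (the real compensation filters are frequency dependent), and the 10x gain step is a hypothetical number chosen only to make the effect obvious.

```python
def gain_schedule(n, switch_at, before, after):
    """Per-sample gain that changes from `before` to `after` at sample `switch_at`."""
    return [before if i < switch_at else after for i in range(n)]


def calibrated_output(motion, analog_gain, digital_comp):
    """Toy calibrated signal: motion * analog gain * digital compensation, per sample."""
    return [m * g * c for m, g, c in zip(motion, analog_gain, digital_comp)]


n = 6
motion = [1] * n  # constant motion, arbitrary units
analog = gain_schedule(n, switch_at=2, before=10, after=1)  # hypothetical 10x gain step

# Compensation switched on the same sample: the calibrated output is unchanged.
sync = calibrated_output(motion, analog, gain_schedule(n, 2, before=1, after=10))
# Compensation lagging by two samples: the output drops by the gain ratio until it catches up.
lagged = calibrated_output(motion, analog, gain_schedule(n, 4, before=1, after=10))
```

Here `sync` stays at 10 throughout, while `lagged` is `[10, 10, 1, 1, 10, 10]`: a step of the full gain ratio that the isolation loops see as a real signal.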

However, after speaking with the integration team, it seems that it's ok to operate temporarily in a mode where we only restore RZ cart biases. Any needed pitch offsets can be held in the suspensions for now. Therefore we do not need to change the T240 gains or blend filters during the isolation procedure. This is good news, since it means we can run with the current guardian behavior. Obviously this will have to be fixed down the line, as we will likely want to hold pitch offsets on the ETMs, but we have a workable solution for the moment.

Guardian BSC isolation configuration and procedure

- All sensors are configured to be in HIGH gain mode. This includes the T240s and L4Cs on stage 1, and the GS13s on stage 2.

- Both stage 1 and stage 2 BLEND filters are configured to be in "Tcrappy" for all DoFs at all times. "Tcrappy" is the low-frequency crossover blend that includes the T240s on stage 1. The blend filters are not changed at any point during the isolation procedure.

- All cartesian biases for all DoFs are restored to their "equilibrium" positions at the beginning of the isolation procedure.

- Only the RZ degree of freedom cart bias target is restored at the end of the isolation procedure. The rest of the DoFs are left at their equilibrium position.

- Both ISI stages are configured to go to HIGH_ISOLATED (i.e. "level 3").

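The configuration above, written out as data. This is a hypothetical summary for reference, not the format the guardian modules actually use; the helper just spells out which DoFs are left at their equilibrium cart bias.

```python
ALL_DOFS = ("X", "Y", "Z", "RX", "RY", "RZ")

# Hypothetical summary of the current BSC isolation configuration.
BSC_ISOLATION_CONFIG = {
    "ST1": {
        "sensor_gain": {"T240": "HIGH", "L4C": "HIGH"},
        "blend": "Tcrappy",  # never changed during isolation
        "isolation_level": "HIGH_ISOLATED",
        "restore_cart_bias_dofs": ("RZ",),
    },
    "ST2": {
        "sensor_gain": {"GS13": "HIGH"},
        "blend": "Tcrappy",
        "isolation_level": "HIGH_ISOLATED",
        "restore_cart_bias_dofs": ("RZ",),
    },
}


def equilibrium_only_dofs(stage_cfg):
    """DoFs whose cart bias stays at the equilibrium position after isolation."""
    return [d for d in ALL_DOFS if d not in stage_cfg["restore_cart_bias_dofs"]]
```

For both stages, `equilibrium_only_dofs` returns `["X", "Y", "Z", "RX", "RY"]`.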

Isolation procedure:

- stage 1: DAMP

- stage 2: DAMP

- stage 1: equilibrium cart bias

- stage 1: HIGH ISOLATE

- stage 1: restore RZ cart bias

- stage 2: equilibrium cart bias

- stage 2: HIGH ISOLATE

- stage 2: restore RZ cart bias
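The ordering above can be sketched as a simple step list. The function names are hypothetical, and `do_step` stands in for whatever actually carries out a step; the point is only the sequence (both stages damped first, then each stage in turn brought to equilibrium bias, isolated, and given its restored biases) and stopping at the first failure, e.g. a watchdog trip.

```python
def isolation_steps(restore_dofs=("RZ",)):
    """Return the ordered (stage, action) steps of the current isolation procedure."""
    steps = [("ST1", "DAMP"), ("ST2", "DAMP")]
    for stage in ("ST1", "ST2"):
        steps.append((stage, "equilibrium cart bias"))
        steps.append((stage, "HIGH ISOLATE"))
        for dof in restore_dofs:
            steps.append((stage, f"restore {dof} cart bias"))
    return steps


def run_steps(steps, do_step):
    """Execute steps in order; return the first step that fails, or None."""
    for stage, action in steps:
        if not do_step(stage, action):
            return (stage, action)
    return None
```

With the default `restore_dofs`, `isolation_steps()` reproduces the eight steps listed above.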

With the above configuration we can successfully isolate the BSCs without touching the T240s or blend filters. It seems to be fairly robust.

Important operator notes:

- We don't yet have guardians for HPI. This means that recovery from HPI watchdog trips requires a bit of care. If the ISI watchdogs are reset before the HPI, the SEI manager will restore isolation on the ISI before the HPI is restored, and the ISIs will then trip during the HPI isolation. It is therefore important to remember to reset the HPI watchdogs and restore HPI isolation before resetting the ISI watchdogs.

- It's possible that depending on the seismic environment, etc., the ISI could trip during the isolation procedure. We have noticed, for instance, that some of the sensors might saturate during isolation if things are noisy. Don't give up on guardian if this happens. Just reset the watchdogs and let guardian try to isolate again. It will likely work during the next attempt. If it still doesn't, try waiting until things settle down a bit before resetting the watchdogs.

- If all else fails, the guardians can either be set to PAUSE (so they stop executing code) or be shut off entirely (e.g. "guardctrl stop SEI_ITMY ISI_ITMY_ST1 ISI_ITMY_ST2"), at which point all the old command scripts can be used. This should obviously be done only as a very last resort.
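The recovery order from the notes above, sketched as a function. Every callback here is hypothetical (there is no HPI guardian yet, so the HPI steps are manual); `wait_for_isi_isolation` is assumed to block until guardian either finishes isolating (True) or the ISI trips again (False). The retry policy mirrors the advice above: retry once right away, then wait for things to settle before further attempts. The attempt count and 60 s settle time are placeholders.

```python
import time


def recover_after_hpi_trip(reset_hpi_watchdogs, restore_hpi_isolation, hpi_isolated,
                           reset_isi_watchdogs, wait_for_isi_isolation,
                           max_attempts=3, settle_s=60.0, sleep=time.sleep):
    """Return the attempt number on which the ISI isolated, or None if it never did."""
    # 1. HPI first, so the SEI manager doesn't isolate the ISI on a moving HPI.
    reset_hpi_watchdogs()
    restore_hpi_isolation()
    if not hpi_isolated():
        raise RuntimeError("HPI is not isolated; do not reset the ISI watchdogs yet")

    # 2. Only now reset the ISI watchdogs; guardian then re-isolates the ISI.
    for attempt in range(1, max_attempts + 1):
        reset_isi_watchdogs()
        if wait_for_isi_isolation():
            return attempt
        # First failure: retry immediately. Later failures: wait for things to settle.
        if 2 <= attempt < max_attempts:
            sleep(settle_s)
    return None
```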

Notes about guardian SEI manager behavior

The SEI managers represent a new step for guardian, as their primary task is to control the state of other guardian nodes, something we haven't needed up to this point. Making their behavior robust has been quite tricky. It required a lot of work on the guardian built-in "manager" library, as well as a lot of experimentation with the SEI manager module itself. The manager is designed to be fairly robust against commissioners noodling with its subordinates, but that is also tricky to get right in a useful way. Some things will need to be improved, but this is the current behavior:

- The manager puts its subordinate ISI nodes into MANAGED mode during the INIT state. This slightly modifies the subordinate's graph traversal algorithm so that it defers to the manager after a "jump" state transition. The subordinate node MEDM control screens also turn purple in MANAGED mode, as an indicator to the operator that the node is under the control of another guardian node.

- The INIT state now has a special feature that it can be jumped to directly from any state in the graph, after which the overall system REQUEST will be reset to whatever it was previously. The SEI manager INIT state is programmed to set all subordinates to MANAGED, identify the current state of both ISI stages, reset things as needed, jump to the current state of the system, then proceed to the request. Therefore, regardless of the state of the subordinates, requesting INIT from the manager should completely reset the state of the SEI BSC.

- The manager expects its subordinates to be alive. If they're not, the manager will go into ERROR. Once all subordinates are alive, clear the error (mode => LOAD) and re-exec the manager, and it will pick up the subordinates. You may need to request INIT in the manager to fully reset the system.

- If a subordinate REQUEST is changed while in MANAGED mode, the manager will throw a notification and immediately reissue its old request. The subordinate will have already started moving to the rogue requested state, but will immediately move back to the manager's requested state once it gets there.

- If a subordinate is removed from MANAGED mode, the manager will throw a notification but will keep running as normal. The manager will continue to monitor the state of the now unmanaged subordinate, but will stop issuing new commands to it. Since the manager continues to process normally, it will expect the subordinate to change its state on its own. The new human manager will therefore need to take over this task; otherwise the manager will never see its states complete.
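A toy model of the manager behaviors listed above, to make the rules explicit. It is not the guardian manager library and does not use its API; the `Subordinate` and `ManagerSketch` classes are hypothetical. It covers INIT taking control (MANAGED), reissuing the manager's request when a managed subordinate's request is changed, only monitoring (not commanding) an unmanaged subordinate, and going into ERROR when a subordinate is dead.

```python
from dataclasses import dataclass, field


@dataclass
class Subordinate:
    name: str
    managed: bool = False
    request: str = "INIT"
    alive: bool = True


@dataclass
class ManagerSketch:
    subordinates: list
    desired: dict = field(default_factory=dict)  # node name -> state the manager wants
    notifications: list = field(default_factory=list)
    error: bool = False

    def init(self):
        # INIT: take control of every subordinate by putting it in MANAGED mode.
        for sub in self.subordinates:
            sub.managed = True

    def set_request(self, name, state):
        # Remember what we want; only command the node if it is still MANAGED.
        self.desired[name] = state
        for sub in self.subordinates:
            if sub.name == name and sub.managed:
                sub.request = state

    def check(self):
        for sub in self.subordinates:
            if not sub.alive:
                self.error = True  # dead subordinate: manager goes to ERROR
                return
            if not sub.managed:
                # Keep monitoring, but issue no commands to an unmanaged node.
                self.notifications.append(f"{sub.name} not MANAGED")
                continue
            want = self.desired.get(sub.name)
            if want is not None and sub.request != want:
                self.notifications.append(f"{sub.name} request changed; reissuing {want}")
                sub.request = want
```

In this model, changing a managed node's request by hand is undone on the next `check()`, while a node taken out of MANAGED mode keeps whatever request the human gives it.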

While things seem to be working well at the moment, I certainly don't claim that all the bugs have been excised. If the manager does get hung in some state, resetting to the INIT state should clear everything, reset the ISIs, and get things back on track.

TODO for SEI guardian

- turn on guardian nodes for ETMX once it's ready, e.g. "guardctrl start ISI_ETMX_ST{1,2} && guardctrl start SEI_ETMX" (the "WIP" masks over the BSC ETMX SEI and ISI guardian boxes in the GUARD_OVERVIEW screen should then be removed).

- fix synchronous gain switching for the T240s

- figure out how to handle T240s with pitch cart biases (either via T240 gain switching and/or blend filter switching)

- HPI guardians, for both HAMs and BSCs

- incorporate HPI nodes under SEI managers for HAMs and BSCs, for full SEI stack control

- allow specifying non-default isolation levels for ISI stages (probably something in guardian core needs to be fixed)

- further development of manager infrastructure

Note: the following code versions apply to the above configuration:

- guardian: svn r878

- cdsutils: svn r211

- USERAPPS/isi/common/guardian: svn r7619