Jeff, Oli

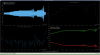

Back in 85674 we updated the model parameter set for the BBSS, bbssopt.m, to better line up with what we were seeing in our measurements at LHO X1 and LLO X2. We adjusted the (physical) d4 value down by 1 mm, so the original FDR physical d4 value of 2.6775 mm was changed in the model to 1.6775 mm.

We have since confirmed that LHO's physical d4 value on the dummy mass is actually measured to be 2.73 mm above the dummy centerline (87767), and that LLO's physical d4 value on the dummy mass is measured to be 2.5715 mm above the centerline. In 87781 I showed that a +/- 3 mm error in the primary prism's location relative to the original FDR-designated physical d4 location would only shift the frequency of the first pitch mode by +/- 0.1 Hz, so we don't need to worry about the placement of the primary prisms.

As Jeff said in an email:

"The plots [in 87781] (and specifically pages 1&7 for longitudinal and 5 & 11) show:

(1) where the value of d4 ends up — even +/- 3.0 [mm] from the FDR +4.0 [mm] effective d4 or +2.67 [mm] physical d4 only moves the first pitch mode up or down by 0.1 Hz. We’re talking about only a +/-1 [mm] range around the FDR value.

- Unimpactful for seismic isolation

- Unimpactful for ISC control, and

- Easily accounted for in the top mass damping loop design.

(2) The data that drove us to *think* that (i.e. fit) the physical d4 of 1.67 [mm] on the dummy masses was “in between” a physical d of 1.67 [mm] and 2.67 [mm]. This was *the only* reason 1.67 [mm] was ever brought up instead of 2.67 [mm].

- There was tons of confusion early on about d4, given

:: the confusion about physical vs. effective ds (hopefully now cleared up) and

:: the error in dummy mass being installed / built upside down, and

:: the site-to-site confusion about what to measure,

but all of that is resolved now, and we’re confident that both dummy masses were built “correctly” and the install teams measure, consistently, on the dummy metal build, a physical d4 of 2.67 [mm].

In conclusion, the jig is designed to land the BBSS M3 stage prism on the real optic at a physical d4 of +2.67 [mm], or effective d4 of +4.0 [mm]. That is a totally fine value for d4, and is, we’ll call it, “the correct” value, as that’s what the value was in the FDR."

Thus, we are going to revert the physical d4 value in our bbssopt.m model back to what it was originally, d4 = 2.6775 mm, since that's closer to the actual physical measurements that we are seeing, has a very small effect on the resonances, and was the original intended physical d4 value.
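As a quick sanity check on the "closer to the actual physical measurements" statement, the sketch below compares the two measured d4 values quoted above against both model values. The function names are hypothetical. The `linear_shift_hz` helper assumes the +/- 3 mm to +/- 0.1 Hz result from 87781 scales linearly with offset, which is only a rough approximation and not something the model run itself asserts.

```python
# Values quoted in this entry, all in mm above the dummy-mass centerline.
FDR_D4_MM = 2.6775        # original FDR physical d4 (restored in bbssopt.m)
ADJUSTED_D4_MM = 1.6775   # value used in the model since 85674
MEASURED_D4_MM = {"LHO": 2.73, "LLO": 2.5715}


def offsets_mm(model_value_mm, measured=MEASURED_D4_MM):
    """Return measured-minus-model d4 offsets (mm) for each site."""
    return {site: value - model_value_mm for site, value in measured.items()}


def linear_shift_hz(delta_mm, shift_hz=0.1, span_mm=3.0):
    """Rough first-pitch-mode shift for a d4 offset.

    ASSUMPTION: the +/-3 mm -> +/-0.1 Hz result scales linearly with offset.
    """
    return delta_mm * shift_hz / span_mm


if __name__ == "__main__":
    for label, model in (("FDR", FDR_D4_MM), ("adjusted", ADJUSTED_D4_MM)):
        for site, off in offsets_mm(model).items():
            print(f"{label:9s} {site}: offset {off:+.4f} mm, "
                  f"~{linear_shift_hz(off):+.4f} Hz (linear estimate)")
```

Against the FDR value, the measured offsets are +0.0525 mm (LHO) and -0.106 mm (LLO), compared with roughly +1.05 mm and +0.89 mm against the adjusted 1.6775 mm value.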

The bbssopt.m file has been updated in /ligo/svncommon/SusSVN/sus/trunk/Common/MatlabTools/TripleModel_Production/bbssopt.m, r12764. It has also been updated in T2000599 (v5).

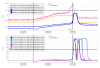

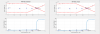

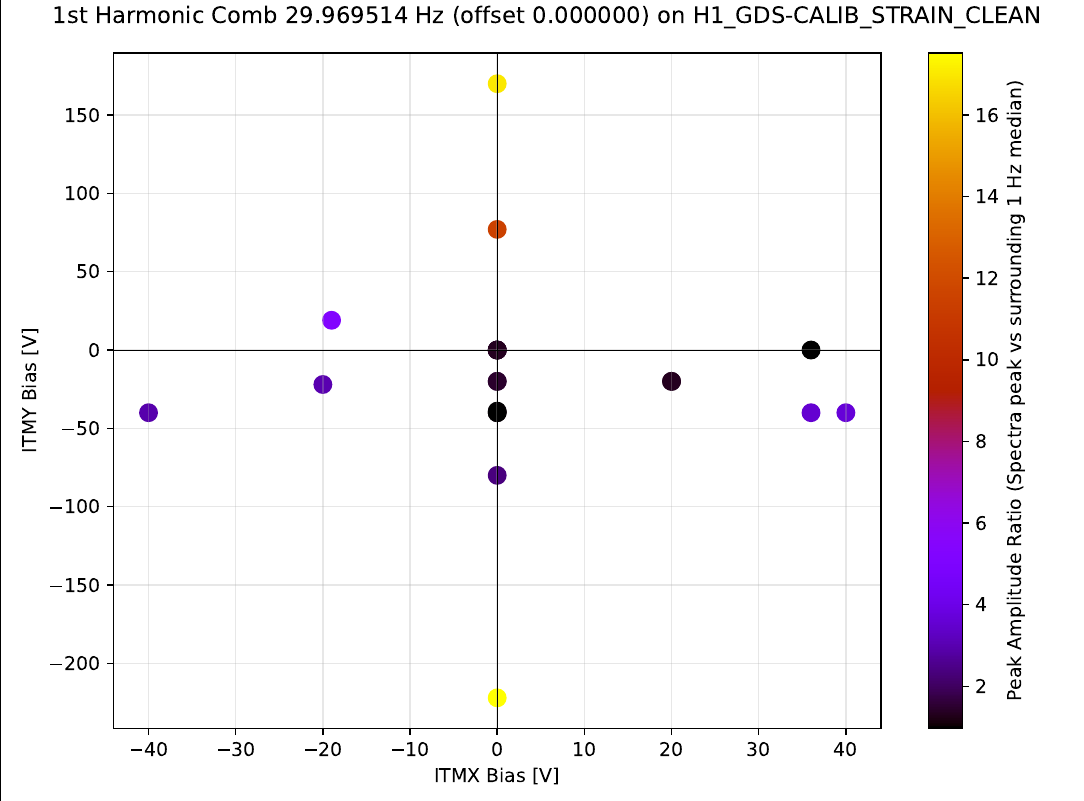

Although these results currently refer only to the first harmonic of each comb, this correlation already suggests a relationship between ITMY bias magnitude and the peak amplitude.
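One way to put a number on this kind of trend is a Pearson correlation coefficient between the bias magnitude and the comb peak amplitude. The sketch below is a minimal illustration, not the analysis used to make the plots. The function names are hypothetical, and the inputs are assumed to be one bias value and one peak amplitude per analyzed time segment.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


def bias_amplitude_correlation(bias_values, peak_amplitudes):
    """Correlate |bias| with peak amplitude, one pair per time segment."""
    return pearson_r([abs(b) for b in bias_values], peak_amplitudes)
```

Taking the absolute value of the bias first reflects that the statement above is about bias magnitude, not sign.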

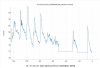

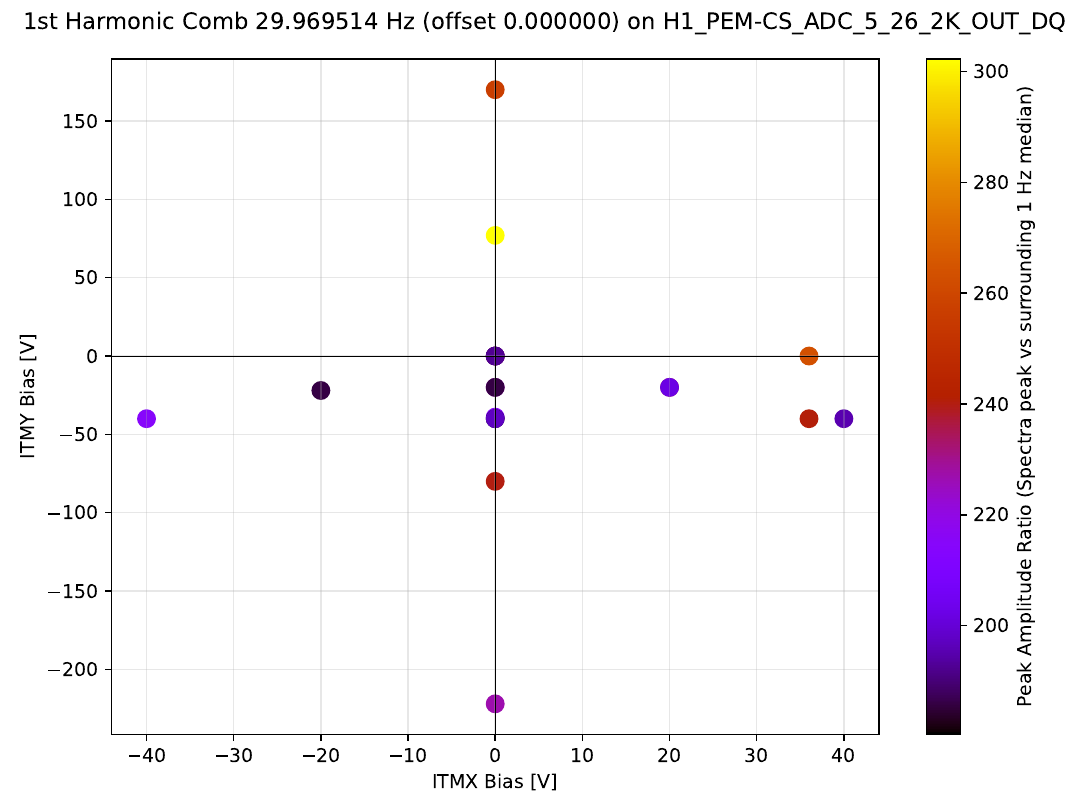

We also generated the same set of plots for the H1:PEM-CS_ADC_5_26_2K_OUT_DQ ADC channel, to test whether the trend observed in DARM is also visible in the ground current monitor. In these additional plots the correlation is less clear. For the near-30 Hz comb, changing the ITM bias values does not appear to change the comb amplitude in the ground monitor. Here is an example for the same comb as before:

The current analysis only considers a single harmonic per comb. The next steps are to extend the study to higher harmonics of these combs and check whether the same behavior is observed in other comb families beyond the 30 Hz and 100 Hz series.
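Extending to higher harmonics mostly comes down to listing the expected comb frequencies and finding the matching spectrum bins. The sketch below is a hypothetical helper for that bookkeeping. The spacing and offset arguments are placeholders, since the exact comb spacings are not stated in this entry, and `bin_width_hz` is assumed to be the frequency resolution of whatever spectrum is being used.

```python
def comb_harmonics(spacing_hz, n_harmonics, offset_hz=0.0, f_max_hz=None):
    """Return comb frequencies offset + k * spacing for k = 1..n_harmonics.

    Frequencies above f_max_hz (if given) are dropped.
    """
    if spacing_hz <= 0:
        raise ValueError("spacing_hz must be positive")
    freqs = [offset_hz + k * spacing_hz for k in range(1, n_harmonics + 1)]
    if f_max_hz is not None:
        freqs = [f for f in freqs if f <= f_max_hz]
    return freqs


def nearest_bin(freq_hz, bin_width_hz):
    """Index of the spectrum bin closest to freq_hz, for bins starting at 0 Hz."""
    if bin_width_hz <= 0:
        raise ValueError("bin_width_hz must be positive")
    return round(freq_hz / bin_width_hz)
```

The peak amplitude at each returned bin can then be tracked against ITM bias in the same way as for the first harmonic.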
