TITLE: 06/18 Eve Shift: 2330-0500 UTC (1630-2200 PDT), all times posted in UTC

STATE of H1: Observing at 145 Mpc

INCOMING OPERATOR: Tony

SHIFT SUMMARY:

Currently Observing at 145 Mpc and have been Locked for almost 2 hours. Everything is looking good.

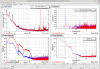

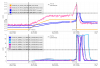

Two hours into our previous lock, our range was slowly dropping and we could see that SQZ wasn't looking very good. Trending back the optic alignments, Camilla saw that ZM4 and ZM6 weren't where they were supposed to be because the ASC hadn't been offloaded before all the updates earlier today (ndscope1). To fix this, we popped out of Observing and turned on the SQZ ASC for three minutes, which made the squeezing better (ndscope2)! The better squeezing has persisted into this next lock stretch.

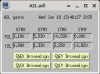

After the 01:30 lockloss (85145), I sat in DOWN for a couple of minutes and restarted a few Guardian nodes. awg was restarted earlier today, so this should help with the Guardian node error (like in 85130). These are the nodes I could think of that probably all use awg: ALS_{X,Y}ARM, ESD_EXC_{I,E}TM{X,Y}, SUS_CHARGE, PEM_MAG_INJ. Tagging Guardian.
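For reference, the brace shorthand in the node list above can be expanded into individual node names. The sketch below only does the string expansion; the helper name `expand_braces` is hypothetical, and nothing here talks to Guardian or restarts anything.

```python
import itertools
import re


def expand_braces(pattern):
    """Expand shell-style {A,B} groups in a name into every combination."""
    # re.split with a capturing group alternates literal text (even indexes)
    # with the comma-separated contents of each {...} group (odd indexes).
    parts = re.split(r"\{([^{}]*)\}", pattern)
    choices = [p.split(",") if i % 2 else [p] for i, p in enumerate(parts)]
    return ["".join(combo) for combo in itertools.product(*choices)]


# Shorthand copied from the log entry.
shorthand = ["ALS_{X,Y}ARM", "ESD_EXC_{I,E}TM{X,Y}", "SUS_CHARGE", "PEM_MAG_INJ"]
nodes = [name for pattern in shorthand for name in expand_braces(pattern)]

for name in nodes:
    print(name)
```

Expanded this way, the list covers eight nodes: two ALS arm nodes, four ESD_EXC nodes, SUS_CHARGE, and PEM_MAG_INJ.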

LOG:

23:30 Observing and have been Locked for over 1 hour

00:24 Popped out of Observing quickly to run the SQZ ASC for a few minutes

00:27 Back into Observing

01:30 Lockloss

- Sat in DOWN for a couple minutes while I restarted some Guardian nodes

- We ended up in CHECK_MICH_FRINGES but that didn't help, so I started an IA

03:05 NOMINAL_LOW_NOISE

03:08 Observing

| Start Time | System | Name | Location | Lazer_Haz | Task | Time End |
| --- | --- | --- | --- | --- | --- | --- |
| 15:22 | SAF | LASER SAFE | LVEA | SAFE | LVEA is LASER SAFE ദ്ദി( •_•) | 15:37 |
| 20:49 | ISS | Keita, Rahul | Optics lab | LOCAL | ISS array work (Rahul out 23:45) | 00:28 |

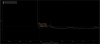

This lockloss could have been caused by a ring-up in the LSC loops that appears to be at a frequency between 11 and 13 Hz.

Here is the lockloss tool link: https://ldas-jobs.ligo-wa.caltech.edu/~lockloss/index.cgi?event=1434317297
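The `event` number in the lockloss link is taken here to be a GPS timestamp (an assumption based on how the number looks, not something the entry states). A minimal sketch for converting it to UTC follows. The 18 s GPS-UTC offset is hard-coded and only holds for dates after 2017-01-01 until another leap second is announced; `gps_to_utc` is a hypothetical helper name.

```python
from datetime import datetime, timedelta, timezone

# GPS time starts at 1980-01-06 00:00:00 UTC and does not include leap seconds.
GPS_EPOCH = datetime(1980, 1, 6, tzinfo=timezone.utc)

# Assumption: GPS has been 18 s ahead of UTC since 2017-01-01.
GPS_UTC_LEAP_SECONDS = 18


def gps_to_utc(gps_seconds):
    """Convert a GPS timestamp in seconds to a timezone-aware UTC datetime."""
    return GPS_EPOCH + timedelta(seconds=gps_seconds - GPS_UTC_LEAP_SECONDS)


# Event number copied from the lockloss tool URL.
print(gps_to_utc(1434317297).isoformat())
```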

23:50 Observing