R. Short, J. Oberling, P. Thomas, J. Hanks

We restarted the PSL after yesterday's power outage. Some notes:

- The LVEA Control Box is on a bench-top power supply that had to be turned on manually; the system would not turn on until this was done

- The PSL restarted mostly without issue after that

- For some reason we lost our system calibrations (power meters, PDs, etc.); the system came up using calibrations from November 2024, shortly after the final NPRO swap. More below.

- Communication between PSL Beckhoff and EPICS is still broken; Patrick and Jonathan are currently investigating

Once the PSL Beckhoff was restarted we noticed that the output power seemed unusually low; we traced this to the calibration settings being out of date. When running a newer version of the software we have to remember to grab the persistent settings file (port_851.bootdata from C:\TwinCAT\3.1\Boot\PLC), which holds all of the trip points, sensor calibrations, and a running tally of operating hours. I'm not sure why the system lost this information now; I don't think we've ever seen this happen with a system restart, and I currently have no explanation.

We were able to get the PD and LD monitor calibration settings from my alog from February, when I changed the pump diode operating currents. We recovered the operating hours using ndscope: we looked at what they were reading when the power outage happened and set the operating hours to that value + 1 hour, since the system had been running for ~1 hour at that point.

One thing to note, however: the persistent operating hour data is now completely wrong. The software tracks operating hours 2 ways: a user-updatable value and a locked value. The former allows us to change operating hours when we install a new component (like swapping chillers or installing a new NPRO/Amplifier after a failure), while the latter tracks total uptime of said components (i.e. total number of hours an NPRO, any NPRO, has been running in the system). It's these latter operating hours that are completely bogus, as we have no way to update them if the persistent settings file is lost (as I said, it's a locked value).
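Since the locked operating hours cannot be re-entered once port_851.bootdata is gone, keeping a dated copy of that file somewhere off the Boot folder would at least give us something to restore from. Below is a minimal Python sketch of such a copy, not something we currently run. The file name and source folder are the ones quoted above; the backup location, the function name `backup_persistent_settings`, and the assumption that the file can be safely copied while the PLC is running are all hypothetical and would need to be checked.

```python
import shutil
from datetime import datetime
from pathlib import Path

# Folder and file name as quoted in this entry.
BOOT_DIR = Path(r"C:\TwinCAT\3.1\Boot\PLC")
PERSISTENT_FILE = "port_851.bootdata"


def backup_persistent_settings(backup_dir, boot_dir=BOOT_DIR, name=PERSISTENT_FILE):
    """Copy the persistent settings file into backup_dir with a timestamp suffix.

    backup_dir is a hypothetical location chosen by the caller; nothing in
    the PSL software creates or reads it. Returns the path of the new copy.
    """
    src = Path(boot_dir) / name
    if not src.is_file():
        raise FileNotFoundError(f"persistent settings file not found: {src}")

    dest_dir = Path(backup_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)

    # Timestamped name so older copies are never overwritten.
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    dest = dest_dir / f"{name}.{stamp}"
    shutil.copy2(src, dest)  # copy2 also preserves the modification time
    return dest
```

Called as `backup_persistent_settings(some_backup_folder)` before a software update or planned shutdown, this leaves one timestamped copy per call; restoring would still be a manual step of putting the chosen copy back in the Boot folder.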

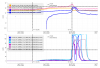

For future reference I've attached a screenshot of the current system settings table; the operating hours that are now wrong are in the column labeled OPHRS A.