I'm starting to look at the nice dataset Camilla took with different nonlinear gains in the interferometer, 83370. A few weeks ago we took a similar dataset on the homodyne, see 83040.

OPO threshold and NLG measurements by both methods now make sense:

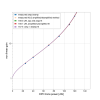

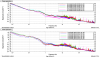

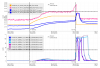

Based on the important realization in 83032 that we previously had pump depletion while measuring NLG by injecting a seed beam, we reduced the seed power for these two more recent datasets. This means that we can rely on the NLG measurements to fit the OPO threshold, and not have the OPO threshold as a free parameter in this dataset. (In the homodyne dataset, I had the OPO threshold as a free parameter, and it fit the NLG data well. With the IFO data, we want to fit more parameters, so it's nice to be able to fit the threshold independently.)

The first attachment shows a plot of nonlinear gain measured two ways, from Camilla's table in 83370. The first method of NLG calculation is the one we normally use at LHO, where we measure the amplified seed while scanning the seed PZT, and then block the green and scan the OPO to measure the unamplified seed level (blue dots in 1st attachment). The second method is to measure the amplified and deamplified seed (max and min) while the seed PZT is scanning, and then nlg = {[1+sqrt(amplified/deamplified)]/2}^2 (orange pluses in attached plot). Fitting the amplified/unamplified data gives a threshold of 158.1 uW OPO transmitted power in this case, while the amplified/deamplified method gives 157.5 uW.
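As a rough sketch of the two NLG calculations described above: the amplified/deamplified formula is the one quoted in this entry, while the relation nlg = 1/(1 - x)^2 with x = sqrt(P/P_thresh) is the usual below-threshold OPO expression and is assumed here rather than taken from the attachments. The function names are hypothetical, and the threshold is obtained by inverting single points in closed form, not by the fit used for the plot.

```python
import math

def nlg_from_unamplified(amplified, unamplified):
    """Method 1: amplified seed level over unamplified seed level (green blocked)."""
    return amplified / unamplified

def nlg_from_deamplified(amplified, deamplified):
    """Method 2: nlg = {[1 + sqrt(amplified/deamplified)]/2}^2."""
    return ((1.0 + math.sqrt(amplified / deamplified)) / 2.0) ** 2

def nlg_from_threshold(power, p_thresh):
    """Assumed model: nlg = 1/(1 - x)^2 with x = sqrt(P/P_thresh)."""
    x = math.sqrt(power / p_thresh)
    return 1.0 / (1.0 - x) ** 2

def threshold_from_nlg(power, nlg):
    """Invert the assumed model for one (power, nlg) point."""
    x = 1.0 - 1.0 / math.sqrt(nlg)
    return power / x ** 2

# Illustrative numbers only (not taken from the dataset): x = 0.5
amp, deamp = 4.0, 1.0 / 1.5 ** 2
g = nlg_from_deamplified(amp, deamp)
p_th = threshold_from_nlg(40.0, g)
```

Under the assumed model, the amplified and deamplified levels relative to the unamplified seed are 1/(1 - x)^2 and 1/(1 + x)^2, so both methods should return the same NLG when there is no pump depletion.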

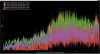

Mean squeezing lump and estimate of eta:

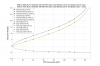

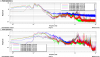

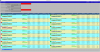

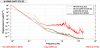

The second attachment here shows all the spectra that Camilla saved in 83370. As she mentioned there, there is something strange happening in the mean squeezing spectra from 300-400 Hz, which is probably because the ADF at 322 Hz was on while the LO loop was unlocked. In the future it would be nice to turn off the ADF when we do mean squeezing so that we don't see this. It could also be adding noise at low frequencies, making the mean squeezing measurement confusing.

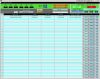

Mean squeezing means injecting squeezing with the LO loop unlocked, so the measurement averages over the squeezing angles. If we know the nonlinear gain (the generated squeezing), the mean squeezing level is determined only by the total efficiency. With x = sqrt(P/P_thresh):

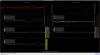

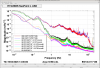
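A minimal sketch of how the total efficiency could be pulled out of a mean squeezing level, assuming the standard low-frequency OPO variances V_sqz = 1 - eta*4x/(1+x)^2 and V_asqz = 1 + eta*4x/(1-x)^2, and assuming the unlocked LO averages the noise power uniformly over angle so that the mean is (V_sqz + V_asqz)/2. These expressions are my assumption and may differ in detail from the ones in the attachments; the function names are hypothetical.

```python
import math

def quadrature_variances(x, eta):
    """Squeezed and anti-squeezed variances relative to shot noise (assumed model)."""
    v_sqz = 1.0 - eta * 4.0 * x / (1.0 + x) ** 2
    v_asqz = 1.0 + eta * 4.0 * x / (1.0 - x) ** 2
    return v_sqz, v_asqz

def mean_squeezing_db(x, eta):
    """Mean squeezing level in dB: angle average of the two quadrature variances."""
    v_sqz, v_asqz = quadrature_variances(x, eta)
    return 10.0 * math.log10(0.5 * (v_sqz + v_asqz))

def eta_from_mean_squeezing(x, mean_sqz_db):
    """Invert the model: the excess over shot noise is linear in eta."""
    excess_per_eta = 0.5 * (4.0 * x / (1.0 - x) ** 2 - 4.0 * x / (1.0 + x) ** 2)
    return (10.0 ** (mean_sqz_db / 10.0) - 1.0) / excess_per_eta

# Illustrative round trip with made-up numbers (x = 0.5, eta = 0.8)
level_db = mean_squeezing_db(0.5, 0.8)
eta_back = eta_from_mean_squeezing(0.5, level_db)
```

Because the excess noise is linear in eta in this model, each NLG setting gives its own independent estimate of eta once x is fixed by the threshold fit.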

- opo_esc = 0.985

- SFIs = 0.99**3

- FC_WFS = 0.99

- ZM456 = 0.99

- OFI = 0.99*0.995

- SRC_loss = 0.99

- OM1 = 0.9993

- OM3 = 0.985

- OMC_QPD = 0.9904

- OMC = 0.956

- QE = 0.98
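For comparison with an eta estimated from mean squeezing, the values listed above can be combined into one number. This is a sketch that assumes each entry is a power efficiency and that they simply multiply along the path; the list itself does not state how they are combined, and the variable and function names are hypothetical.

```python
import math

# Values copied from the list above; treating them as multiplicative
# power efficiencies is an assumption.
EFFICIENCIES = {
    "opo_esc": 0.985,
    "SFIs": 0.99 ** 3,
    "FC_WFS": 0.99,
    "ZM456": 0.99,
    "OFI": 0.99 * 0.995,
    "SRC_loss": 0.99,
    "OM1": 0.9993,
    "OM3": 0.985,
    "OMC_QPD": 0.9904,
    "OMC": 0.956,
    "QE": 0.98,
}

def total_efficiency(efficiencies):
    """Product of all per-element efficiencies."""
    return math.prod(efficiencies.values())

eta_budget = total_efficiency(EFFICIENCIES)
print(f"budgeted total efficiency: {eta_budget:.3f}")
print(f"budgeted total loss:       {1.0 - eta_budget:.3f}")
```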