Stefan, Matt, Kiwamu,

This is a quick note. We started another TCS test this afternoon with the new ring heater settings (RHX = 0.5 W and RHY = 1.5 W, 29557).

With a 20 W interferometer, the CO2 settings that balance the 45 MHz upper and lower sidebands were found to be almost where we thought they should be. Then we increased the PSL power to 40 W to study the interferometer absorption as a function of the PSL power. With the sideband imbalance adjusted, the lock seems more stable at 40 W.

[Good values that balance the 45 MHz sidebands]

- At 20 W
  - [CO2X, CO2Y] = [650 mW, 400 mW]
- At 40 W
  - [CO2X, CO2Y] = [650 mW, 400 mW]
  - [CO2X, CO2Y] = [500-600 mW, 200 mW]
  - [CO2X, CO2Y] = [400 mW, 0 mW]

These settings seem to keep the sideband imbalance small, within 10%. Also, lock acquisition was done with [CO2X, CO2Y] = [500 mW, 700 mW], for which I did not adjust the differential CO2 settings.
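For anyone who wants to repeat the 10% check offline, here is a minimal sketch. This entry does not spell out how the imbalance is defined, so the definition used here (difference of the upper and lower sideband powers divided by their mean) is an assumption, and the function names and the input values in the usage note are purely illustrative.

```python
def sideband_imbalance(upper, lower):
    """Fractional imbalance between upper and lower sideband powers.

    Assumed definition (not taken from this entry):
    (upper - lower) / mean(upper, lower).
    """
    mean = 0.5 * (upper + lower)
    if mean <= 0:
        raise ValueError("sideband powers must have a positive mean")
    return (upper - lower) / mean


def is_balanced(upper, lower, tolerance=0.10):
    """True if the magnitude of the imbalance is within the tolerance."""
    return abs(sideband_imbalance(upper, lower)) <= tolerance
```

For example, `is_balanced(1.02, 0.98)` evaluates the imbalance for two made-up sideband powers in arbitrary units and compares it against the 10% tolerance.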

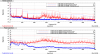

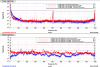

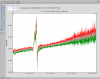

By the way, the attached trends show how the aberration changed when we went from 20 to 40 W. Notice that ITMY shows relatively high aberration values (e.g. cylindrical, coma, spherical aberration) compared to those for ITMX. The last attachment is a StripTool showing the HWS outputs. The jump in the middle is due to us increasing the PSL power from 20 to 40 W. After we sat at 40 W for approximately 1 hour, we started decreasing the common TCS and kept adjusting the differential CO2.
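To make the common/differential bookkeeping concrete, the CO2 settings quoted in this entry can be split into a common and a differential part. This is only a sketch: the half-sum / half-difference convention and the function name are assumptions, not something defined in this entry, and the 500-600 mW range setting is left out because it is not a single value.

```python
def common_differential(co2x_mw, co2y_mw):
    """Split (CO2X, CO2Y) powers in mW into common and differential parts.

    Assumed convention: common = (X + Y) / 2, differential = (X - Y) / 2.
    """
    common = 0.5 * (co2x_mw + co2y_mw)
    differential = 0.5 * (co2x_mw - co2y_mw)
    return common, differential


# Settings quoted in this entry, in mW.
settings = {
    "balanced, 20 W and 40 W": (650.0, 400.0),
    "balanced, 40 W, lowest common": (400.0, 0.0),
    "lock acquisition": (500.0, 700.0),
}

for label, (co2x, co2y) in settings.items():
    common, differential = common_differential(co2x, co2y)
    print(f"{label}: common = {common:.0f} mW, differential = {differential:.0f} mW")
```

Under this convention the balanced settings go from 525 mW common at [650 mW, 400 mW] down to 200 mW common at [400 mW, 0 mW], while the lock acquisition setting has a differential of the opposite sign (-100 mW).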

Filed FRS #6194 for the apparent power meter failure.