Related alogs 26264. 26245

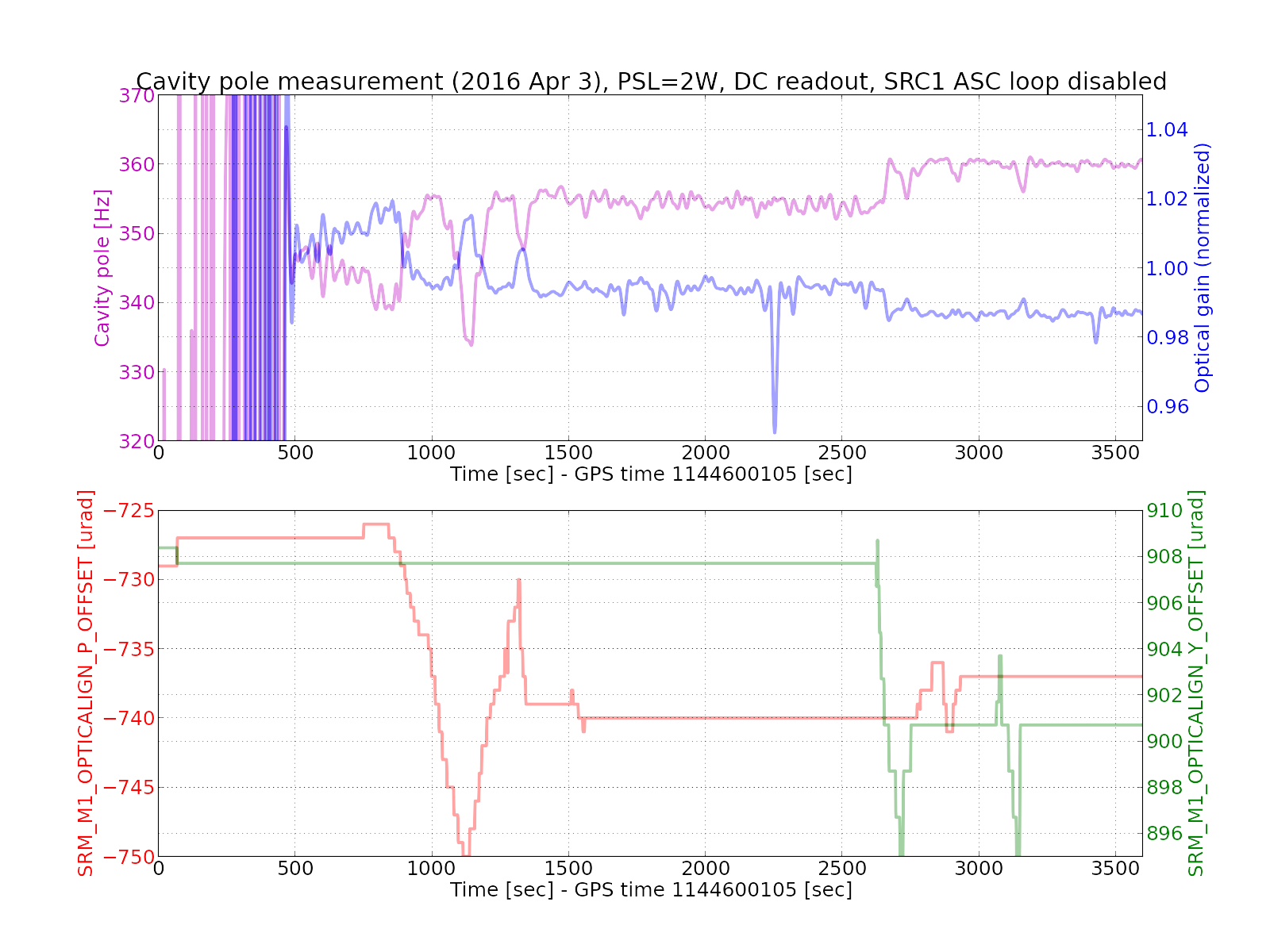

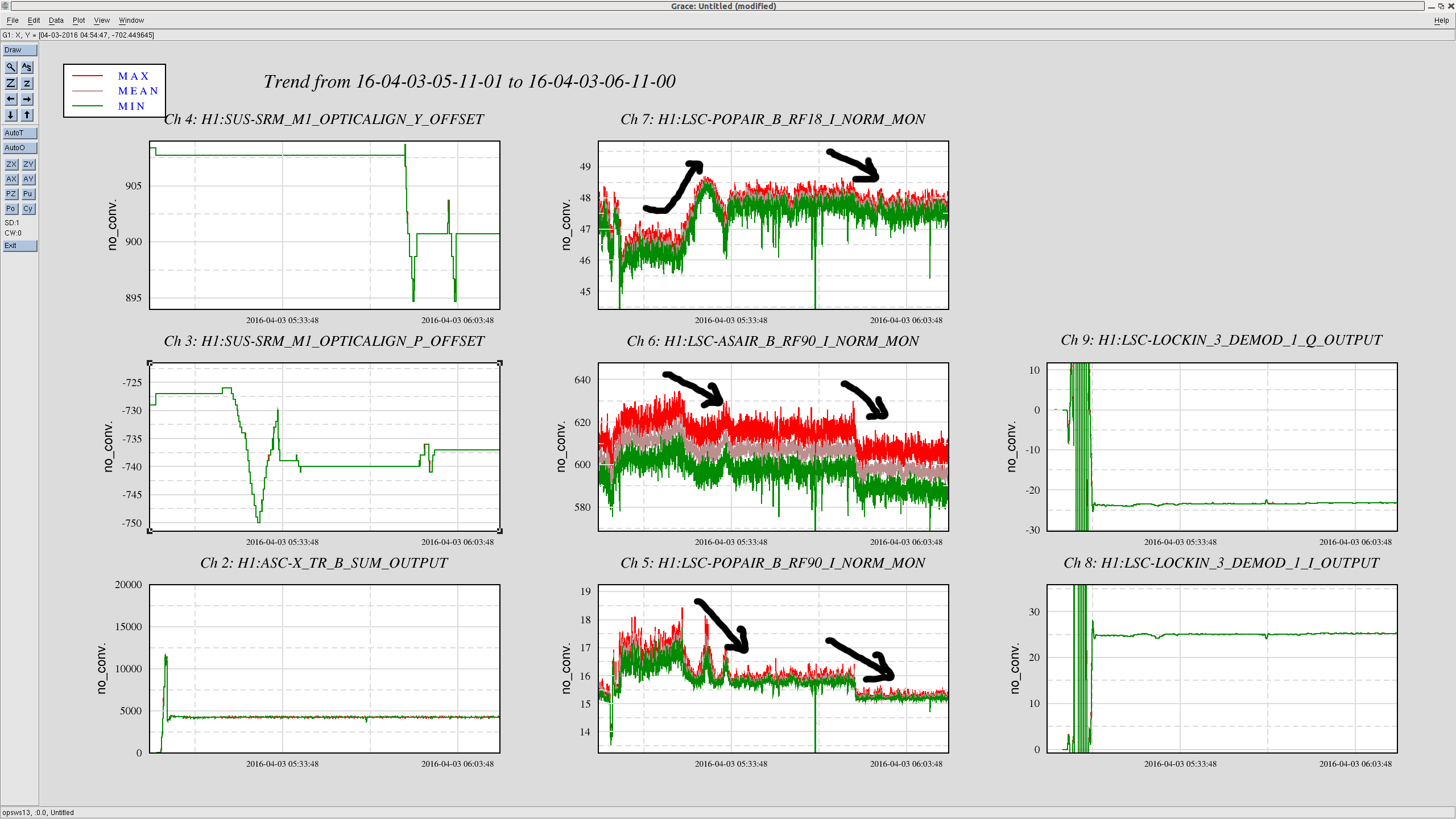

I did some follow-up tests today to understand the behavior of the DARM cavity pole. I put an offset in some ASC error points to see how they affect the DARM cavity pole without changing the CO2 settings.

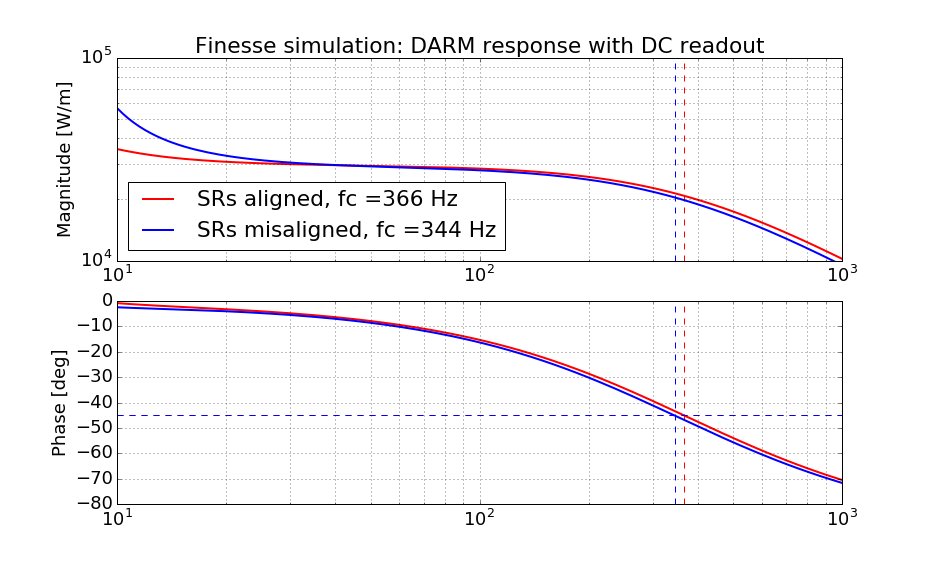

I conlude that the SRC1 ASC loop is nominally locked on a non-optimal point (when PSL is 2 W) and it can easily and drastically changes the cavity pole. The highest cavity pole I could get today was 362 +/- a few Hz by manually optimizing the SRC alignment.

[The tests]

This time I did not change the TCS CO2 settings at all. In order to make a fair comparison against the past TCS measurements (26264, 26245), I let the PSL stay at 2 W. The interferometer was fully locked with the DC readout, and the ASC loops were all engaged. The TCS settings are as follows, TCSX = 350 mW, TCSY = 100 mW. I put an offset in the error point of each of some ASC loops at a time. I did so for SRC1, SRC2, CSOFT, DSOFT and PRC1. Additionally, I have moved around IM3 and SR3 which were not under control of ASC. All the tests are for the PIT degrees of freedom and I did not do for the YAWs. During the tests, I had an excitation line on the ETMX suspension at 331.9 Hz with a notch in the DARM loop in order to monitor the cavity pole. Before any of the tests, the DARM cavity pole was confirmed to be at 338 Hz by running a Pcal swept sine measurement.

The results are summarized below:

-

SRC1, offset = from +400 to -300 cnts, cavity pole = from 350 Hz to 275 Hz

-

SRC2 , offset = from +0.2 to -0.2 cnts, no change

-

DSOFT, offset = from -0.03 to 0.03 cnts, no change in cavity pole, but a noticeable drop in the power recycling gain (41 --> 39).

-

CSOFT, offset = from -0.03 to 0.03 cnts, no change in cavity pole, but a noticeable drop in the power recycling gain (41 --> 38-ish).

-

PRC1, offset = from -0.1 to 0.1 cnts, no change in cavity pole, but a noticeable drop in the power recycling gain (41 --> 38-ish).

-

IM2, some large offset, no change in cavity pole.

-

SR3, offset = +/- 50 urad, no change in cavity pole or recycling gain.

The QPD loops -- namely CSOFT, DSOFT, PRC1 and SRC2 loops -- showed almost no impact on the cavity pole. The SOFTs and PRC1 tended to quickly degrade the power recycling gain rather than the cavity pole. I then further investigated SRC1 as written below.

[Optimizing SRC alignment]

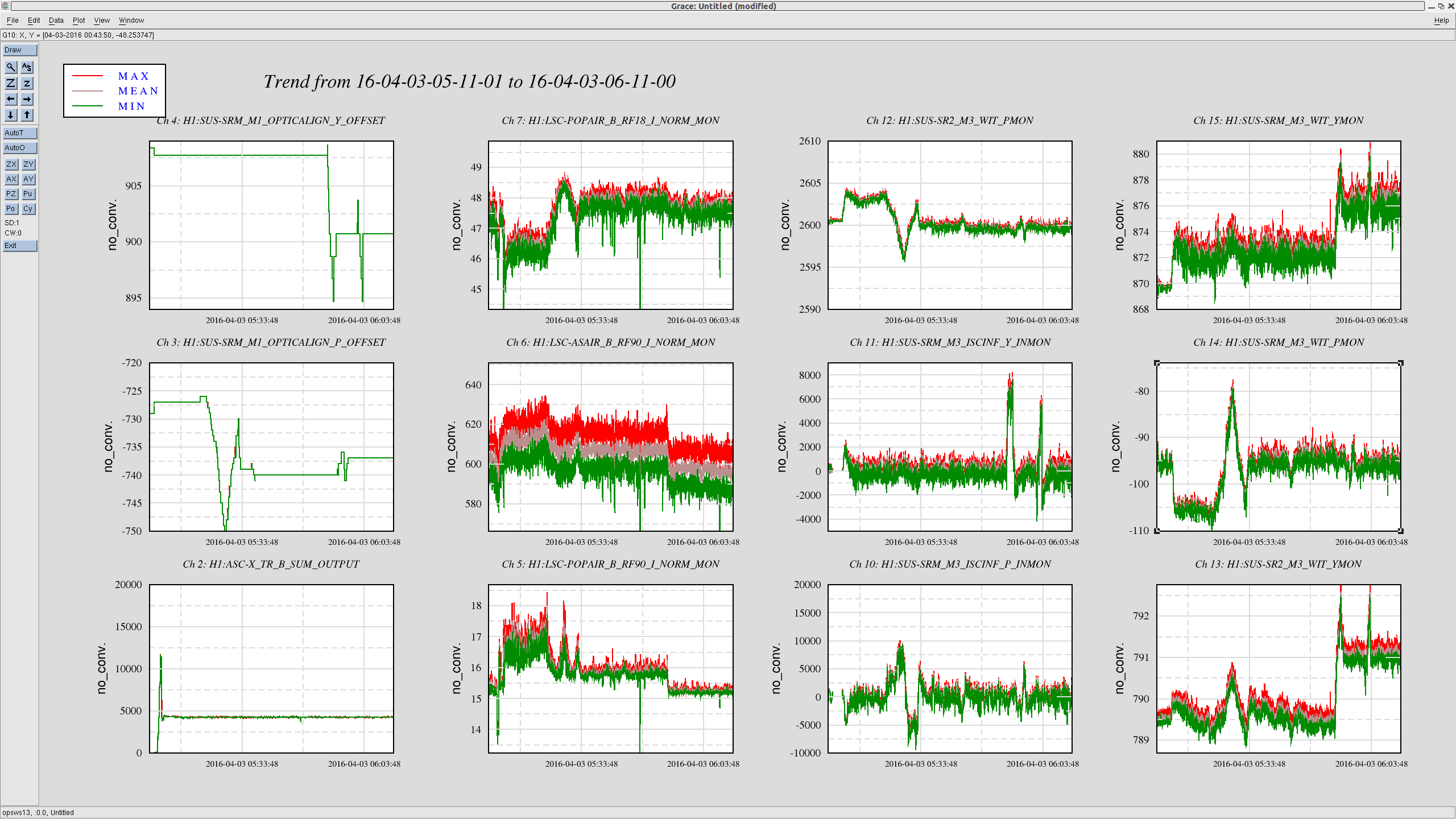

I then focused on SRC1 which controlled SRM using AS36. I switched off the SRC1 servo and started manually aligning it in order to maximize the cavity pole. By touching PIT and YAW by roughly 10 urads for both, I was able to reach a cavity pole of 362 Hz. As I aligned it by hand, I saw POP90 decreasing and POP18 increasing as expected -- these indicate a better alignment of SRC. However, strangely AS90 dropped a little bit by a few %. I don't know why. At the same time, I saw the fluctuation of POP90 became smaller on the StrioTool in the middle screen on control room's wall.

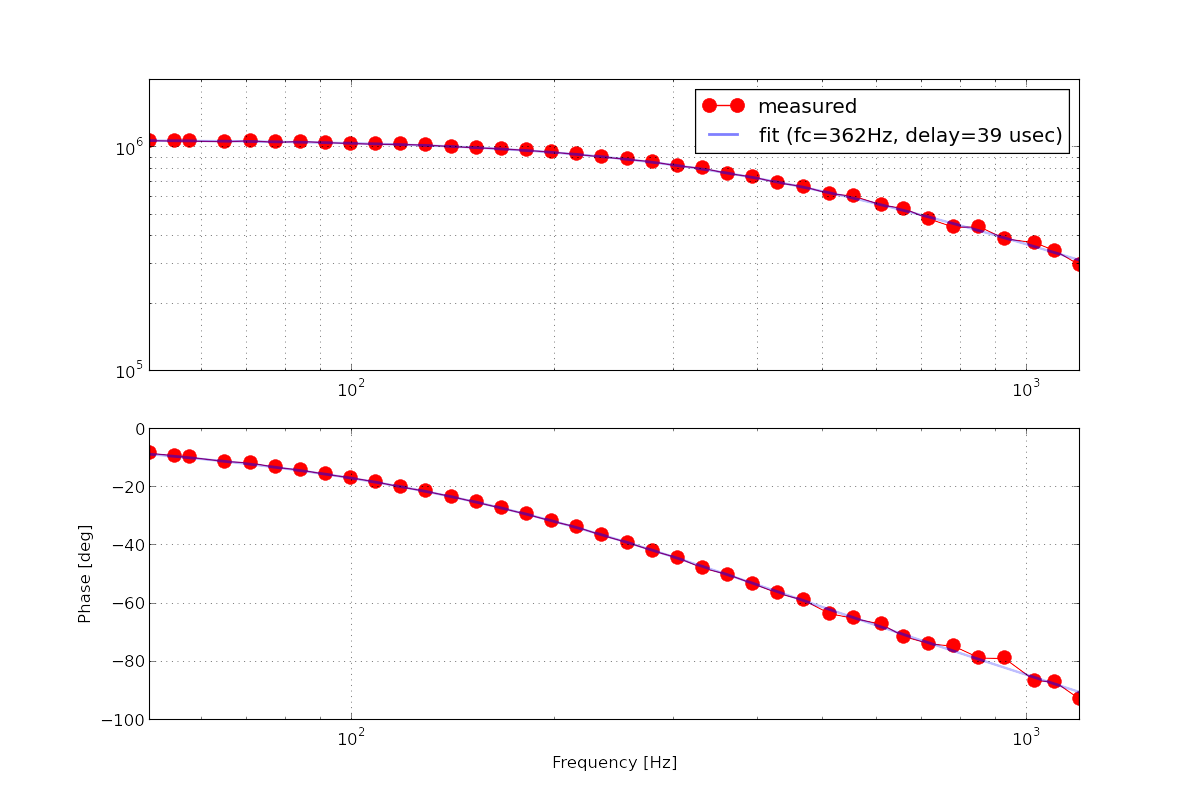

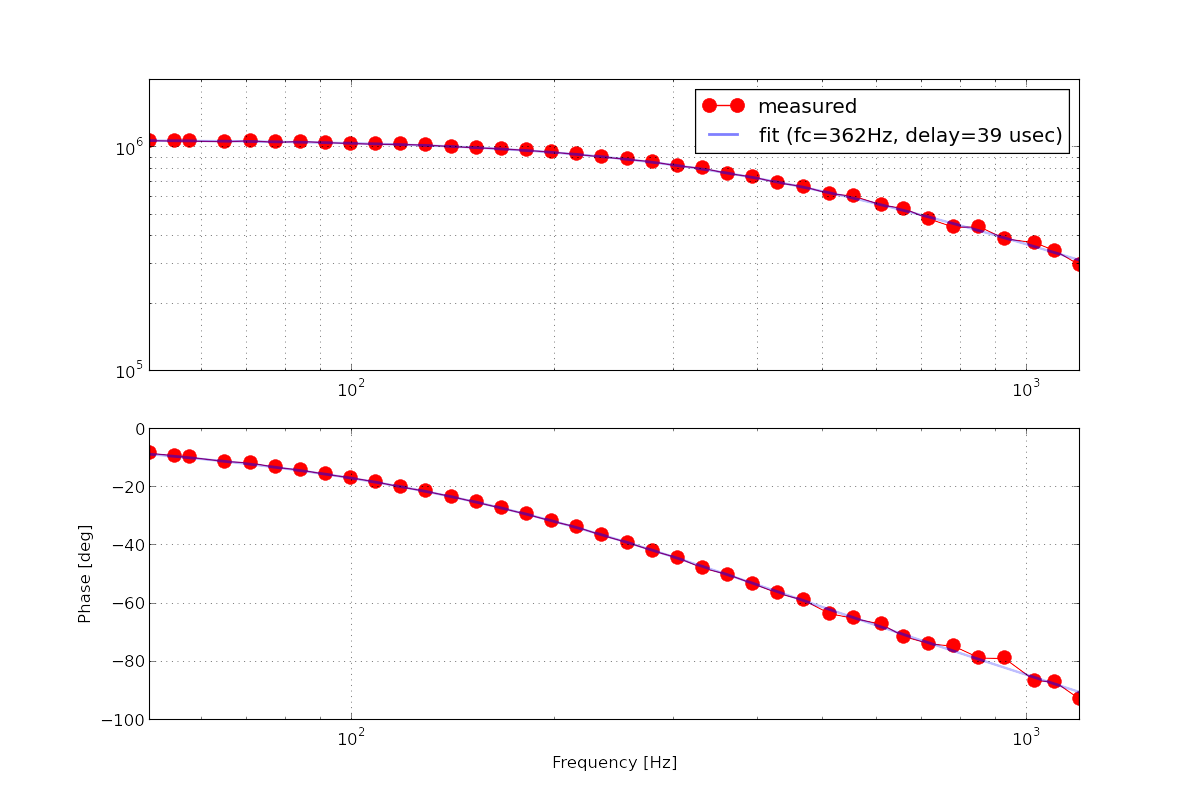

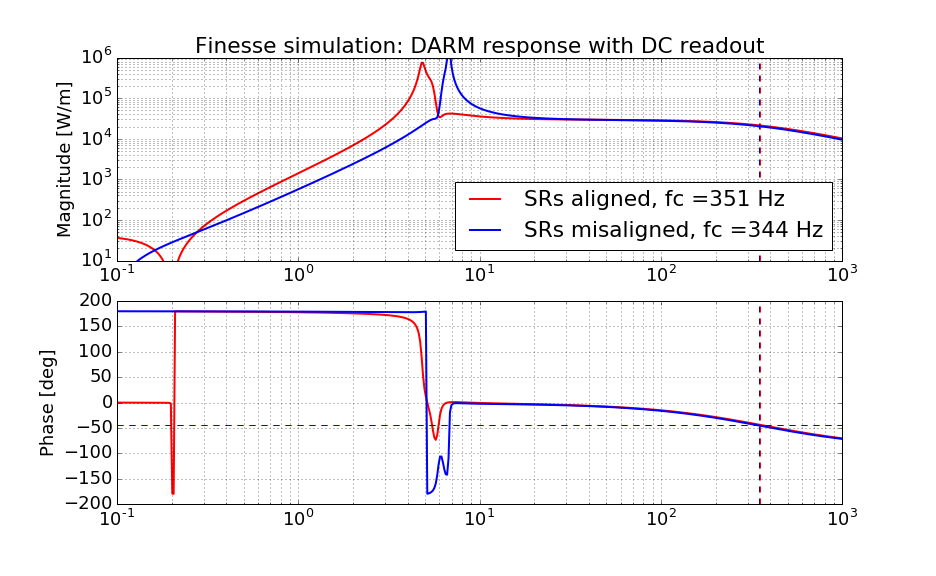

In order to double check the measured cavity pole from the excitation line, I ran another Pcal swept sine measurement. I confirmed that the DARM cavity pole was indeed at 362 Hz. The attached is the measured DARM sensing function with the loop suppression taken out. The unit of the magnitude is in [cnts @ DARM IN1 / meters]. I used liso to fit the measurement as usual using a weighted least square method.

By the way, in order to keep the cavity pole at its highest during the swept sine measurements, I servoed SRM to the manually adjusted operating point by running a hacky dither loop using awg, lockin demodulators and ezcaservos. I have used POP90 as a sensor signal for them. The two loops seemingly had ugf of about 0.1 Hz according to 1/e settling time. A screenshot of the dither loop setting is attached.

The rest of the medm screens are now done.