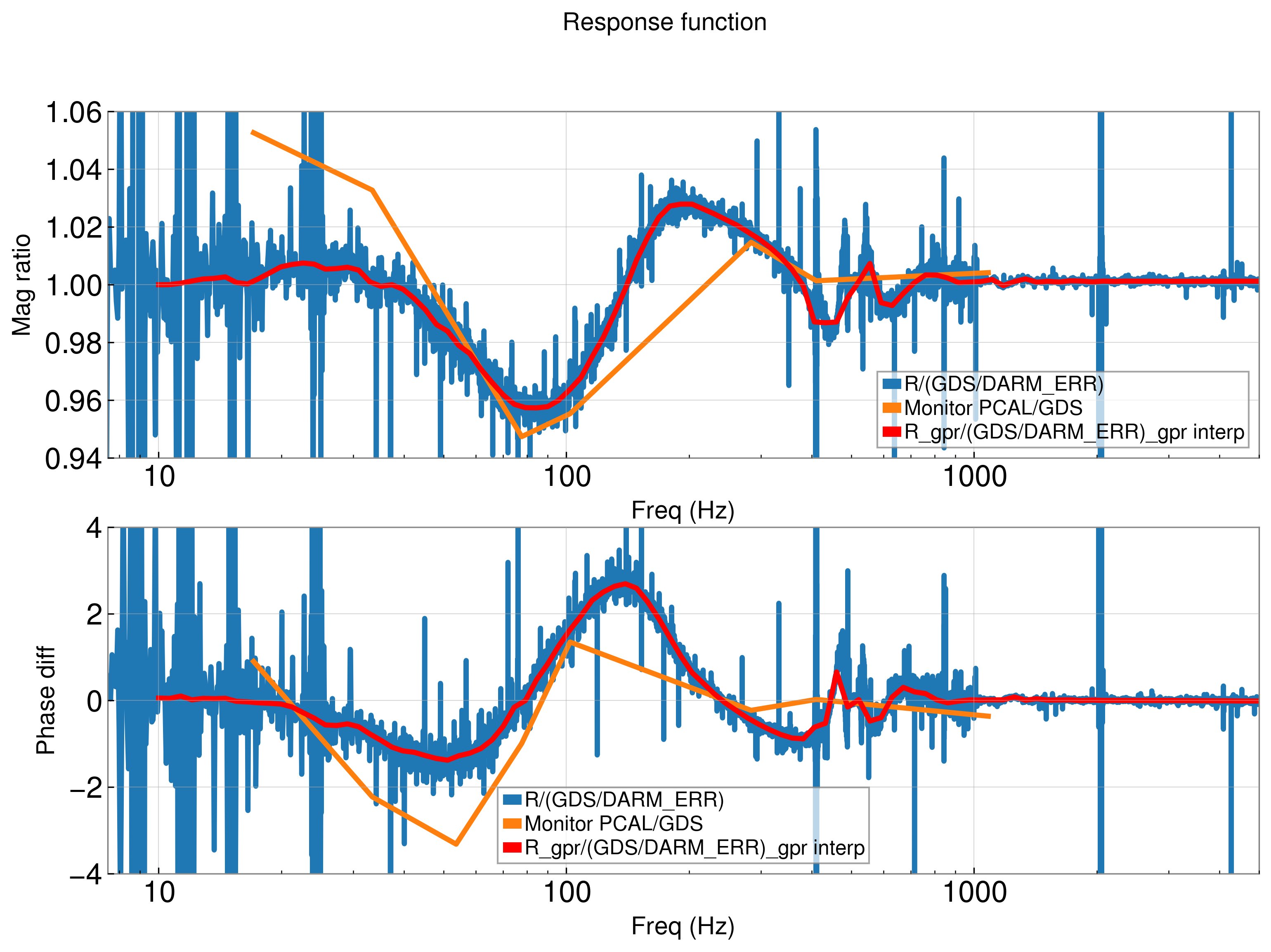

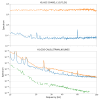

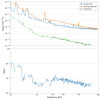

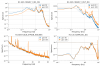

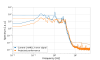

During the May 30th violin mode ring-up, the narrow line contamination in the frequency bands surrounding the fundamental and harmonic frequencies displays an asymmetry that is so far unexplained. The contamination is most visible around the 1500 Hz harmonic, where it is shifted up in frequency by 30 Hz, as shown in figures 1 and 2. The shift increases with frequency: around the 500 Hz fundamental the contamination is actually shifted down in frequency by 3 Hz, as shown in figure 3. Around 1000 Hz there is also a shift, but it is only 17 Hz, as shown in figure 4.

Similar behavior is seen during the June 30th ring-up, as shown in figures 5-8. For this ring-up the shift is in the opposite direction and its magnitude decreases with frequency, going from a 17 Hz shift down at the 500 Hz fundamental to a 3 Hz shift down at the 1500 Hz harmonic. Again, this behavior is unexplained.

These shifts are calculated as the difference between the median frequency of the lines in the 200 Hz band surrounding the violin modes and the median frequency of the violin mode lines. A line is considered a violin mode line if its amplitude is 70% or more of the maximum violin mode amplitude in the band.
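
The shift calculation described above can be sketched roughly as follows. This is a minimal illustration, not the script used for this entry. It assumes the lines have already been identified and are available as arrays of frequencies and amplitudes, that the 200 Hz band is centered on the nominal mode frequency, that the maximum amplitude in the band belongs to a violin mode line, and that the "lines in the band" are the ones that do not pass the 70% cut. The function name `contamination_shift` and its parameters are hypothetical.

```python
import numpy as np


def contamination_shift(line_freqs, line_amps, band_center,
                        band_width=200.0, threshold=0.7):
    """Median frequency of the non-violin lines minus that of the violin lines.

    A positive result means the contamination sits above the violin modes.
    Assumptions (not confirmed by the entry): the band is centered on
    band_center, and the strongest line in the band is a violin mode line.
    """
    freqs = np.asarray(line_freqs, dtype=float)
    amps = np.asarray(line_amps, dtype=float)

    # Keep only the lines inside the band around the nominal mode frequency.
    in_band = np.abs(freqs - band_center) <= band_width / 2.0
    freqs, amps = freqs[in_band], amps[in_band]
    if freqs.size == 0:
        raise ValueError("no lines found in the requested band")

    # Violin mode lines: amplitude at least `threshold` of the band maximum.
    is_violin = amps >= threshold * amps.max()
    if is_violin.all():
        raise ValueError("every line in the band passed the violin mode cut")

    return float(np.median(freqs[~is_violin]) - np.median(freqs[is_violin]))
```

Calling this once per band, with `band_center` set to roughly 500, 1000, and 1500 Hz, would give one signed shift per fundamental or harmonic. If the median of the surrounding lines is instead meant to include the violin mode lines themselves, the first median would be taken over all of `freqs`.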