Marissa, Sidd

There is evidence that the current 20-50 Hz transients in the LHO data were present during O3 as well. They were not nearly as ubiquitous then as they are now, which can be explained by the increase in low-frequency sensitivity in the current observing run. However, I am not sure that the difference in low-frequency sensitivity can completely explain the emergence of this noise; the existing noise coupling could also have gotten worse.

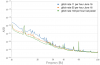

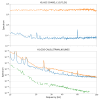

The first scan is from O3 and the second from O4. The separation between the blobs is similar in both (about 0.2 s). A Gravity Spy search for fast scatter in O3b returns a large number of these transients.

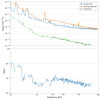

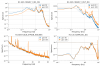

In the current observing run as well, we have noticed that when the sensitivity gets better, the transient rate goes up. The third plot shows the glitch rate between June 1 and July 12. For the days after June 22, when LHO powered down and the low-frequency sensitivity improved (as shown by the fourth plot), the glitch rate is higher.
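
For anyone who wants to reproduce this kind of before/after rate comparison, here is a minimal sketch. It is not the code used to make the plots above. It assumes you already have an array of trigger times in seconds (for example GPS times from whatever glitch search you use), and the function names `daily_glitch_rate` and `mean_rate_before_after` are made up for illustration. It also assumes the detector was observing for the full 24 hours of each day, which is generally not true, so a real comparison should divide by the actual observing time instead.

```python
import numpy as np

SECONDS_PER_DAY = 86400.0


def daily_glitch_rate(trigger_times, start, n_days):
    """Glitches per hour for each of n_days consecutive days starting at `start`.

    trigger_times : array-like of trigger times in seconds (same time base as `start`)
    start         : time in seconds at which day 0 begins
    n_days        : number of consecutive days to bin

    Assumption: 24 h of observing time per day (no duty-cycle correction).
    """
    # Day boundaries: start, start + 1 day, ..., start + n_days days
    edges = start + SECONDS_PER_DAY * np.arange(n_days + 1)
    counts, _ = np.histogram(np.asarray(trigger_times, dtype=float), bins=edges)
    return counts / 24.0


def mean_rate_before_after(rates, split_day):
    """Mean daily rate before and from `split_day` onward (index into `rates`)."""
    rates = np.asarray(rates, dtype=float)
    return rates[:split_day].mean(), rates[split_day:].mean()
```

Here `split_day` would be the index of the day on which the change of interest happened (for the comparison above, the day of the power reduction, counted from the first day in the data).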

There are times when, within the same day, a change in sensitivity resulted in very different transient rates. One such example is June 16, 2023 (fifth plot): there are more glitches in the second red box than in the first. From the sixth plot (ASD), we can see that as the DARM sensitivity got better on June 16, the transient rate went up. It went up further after the power down (76 W to 60 W), as the low-frequency sensitivity improved (green curve).

This analysis shows that some processes are continuously generating these transients and that, as the DARM sensitivity gets better, the rate of transients goes up. We are looking for times when the amplitude or frequency of this noise changes, so that we can correlate those changes with changes in different parts of the detector. We are increasingly confident that the coupling does not depend on ground motion; otherwise we would have seen noise variations in the last two months of data.