Now that we're back to our lower-noise situation with OM2 hot, we're redoing the test that Oli ran in 71304 to see how much our noise differs with and without the calibration lines on. We expect this will primarily show that the noise right around the line frequencies is reduced when the lines are off, as suggested by Gabriele in 71614. I don't think we expect any major changes other than right around the lines, but if we do see any, that would be very interesting.

Since the low frequency calibration lines are back on this week (alog 71706), it took more 'doing' than normal to turn off the calibration lines. ISC_LOCK's NLN_CAL_MEAS state does not currently turn off the lines controlled by the CAL_AWG_LINES guardian, so I selected LINES_OFF in that guardian. However, that didn't actually stop all of the lines, so I also ran `awg clear 8 *` to stop the lines being injected at DARM1_EXC. TJ is looking into making NLN_CAL_MEAS take care of the awg lines too.

- A time while we were still in Observe (18:40:00 UTC): Ref 0, 140 avgs

- All the cal lines *actually* off starting by 19:07:45 UTC. Ref 1, 140 avgs

- Lines back on by 19:19:00 UTC. Had to set CAL_AWG_LINES to LINES_ON. Ref 2, 50 avgs

- Lines off by 19:30:00 UTC. This time the CAL_AWG_LINES guardian successfully turned off all the lines (no need for an awg clear). Ref 3, 50 avgs

- Lines back on around 19:40:00 UTC, then handed off to Jim for other work.

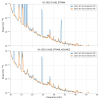

I'm a little surprised, but I'm not seeing much of a difference in the spectra with the lines on vs. off. All 4 panels show the same 4 traces (listed in the bullet points above), just zoomed differently. All traces are of GDS-CALIB_STRAIN_NOLINES, so for times when the calibration lines are on, they've been subtracted out of the data (note that the 'new' CAL_AWG_LINES are not yet subtracted, so those are still present in this channel). I expected this channel to show some excess noise around the calibration lines for times when the cal lines were on, but I'm not really seeing anything.
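One way to put a number on "not seeing much of a difference" is to compare the on and off spectra only in narrow bands around each line. Below is a minimal numpy-only sketch. It assumes you already have equal-rate arrays of GDS-CALIB_STRAIN_NOLINES for one lines-on stretch and one lines-off stretch. The function names, the ±half-width band, and the median statistic are illustrative choices. This is not the procedure behind the attached plot.

```python
import numpy as np


def welch_asd(x, fs, nperseg):
    """One-sided ASD via Welch's method: Hann window, 50% overlap, mean of periodograms."""
    if nperseg % 2:
        raise ValueError("nperseg must be even for this one-sided scaling")
    win = np.hanning(nperseg)
    step = nperseg // 2
    starts = range(0, len(x) - nperseg + 1, step)
    # Average the windowed periodograms of all overlapping segments.
    pgrams = [np.abs(np.fft.rfft(x[i:i + nperseg] * win)) ** 2 for i in starts]
    psd = np.mean(pgrams, axis=0) / (fs * np.sum(win ** 2))
    psd[1:-1] *= 2.0  # fold in negative frequencies (DC and Nyquist bins excluded)
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, np.sqrt(psd)


def band_ratios(x_on, x_off, fs, line_freqs, half_width, nperseg):
    """Median on/off ASD ratio within +/- half_width Hz of each (hypothetical) line frequency."""
    f, asd_on = welch_asd(np.asarray(x_on, float), fs, nperseg)
    _, asd_off = welch_asd(np.asarray(x_off, float), fs, nperseg)
    ratios = {}
    for f_line in line_freqs:
        band = np.abs(f - f_line) <= half_width
        # The median is less sensitive to a single residual-line bin than the mean.
        ratios[f_line] = float(np.median(asd_on[band] / asd_off[band]))
    return ratios
```

A ratio near 1 in every band would match what the plot shows. A ratio noticeably above 1 would point to excess noise around the lines when they're on.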

I'm not sure whether the PCALY_DARM lines are coming back on at the same amplitude each time. It's possible that they are, and that beating between lines and aliasing make it look like they aren't, since the attached ndscope can only display 16 Hz channels. But I want to flag that we should check that awg is turning the lines on at the requested amplitude.
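For the amplitude question, two small checks may help. Suppose the 16 Hz channel is a plain decimated sample of the line signal. That is an assumption; it may well be a differently processed readback. In that case a line at f appears at its alias. Several lines folding down near each other can then beat, so the apparent height wanders even when the injection is constant. Demodulating a full-rate record at the line frequency sidesteps this problem. A minimal sketch, with hypothetical function names:

```python
import numpy as np


def aliased_frequency(f_line, fs):
    """Apparent frequency of a tone at f_line Hz after sampling at fs Hz, folded into [0, fs/2]."""
    return abs(f_line - fs * round(f_line / fs))


def line_amplitude(x, fs, f_line):
    """Amplitude of the f_line component of x, by lock-in (single-bin) demodulation.

    Exact for a pure tone away from DC and Nyquist when the record spans an
    integer number of line cycles; otherwise there is a small leakage error.
    """
    n = np.arange(len(x))
    local_osc = np.exp(-2j * np.pi * f_line * n / fs)  # complex local oscillator
    return float(np.abs(2.0 * np.mean(np.asarray(x, float) * local_osc)))
```

For example, a hypothetical 17.1 Hz line read at 16 Hz would appear at about 1.1 Hz. The direct check is to compare `line_amplitude` on a full-rate channel carrying the line against the amplitude requested from awg.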

PCAL library part, before vs. after. I took the opportunity to visually re-organize other parts for clarity as well, but there's no functional change other than the 20 new oscillators.

Also, as a minor Simulink detail: the individual OSC blocks *had* suffered from a library-part-within-a-library-part bug since the beginning of time. Someone copied and pasted the block but forgot the annoying feature of library sub-blocks: if you copy a block within a model that's already a library, the copy stays linked to the original block you copied. One needs to explicitly "disable link" and "break link" if you don't want that sub-block to reference the other. Instead of doing that, I *actually* made a new library part, since I knew I wanted to copy the same thing into the LSC model. Thus, the PCAL_MASTER.mdl library now relies on a new library part, CAL_OSC_MASTER.mdl (in /opt/rtcds/userapps/release/cal/common/models), which is manifested in the PCAL model as the oscillators "PCALOSC1," "PCALOSC2," ... "PCALOSC30".

- 2023-07-26_PCAL_MASTER_PCAL_OSCfocus_before.png shows the impacted parts of the PCAL_MASTER.mdl library part before the changes.

- 2023-07-26_PCAL_MASTER_PCAL_OSCfocus_after.png shows the impacted parts of PCAL_MASTER after the changes.

- 2023-07-26_CAL_OSC_MASTER.png shows the new CAL_OSC_MASTER library block, and 2023-07-26_CAL_OSC_MASTER_inside.png shows its innards.

And here's the LSC block before and after the changes. Here, again, I took the opportunity to aesthetically clean up the garbled mess of things that have been stapled on and around the DARM bank over the years. But the only functional changes are (a) the new oscillators, (b) the move of the CAL_LINES summation point from DARM_CTRL to DARM_ERR, (c) the DARMOSC_SUM test point and EPICS monitor, and (d) the storage of DARM1_IN1 in the frames.

The changes (b) and (d) are "interesting." For old iLIGO reasons that I don't remember, the "DARM" calibration lines were summed in downstream of the DARM bank, i.e. into DARM_CTRL. Even though the infrastructure is there (I personally installed it circa 2012-2013), we haven't used these DARM calibration lines *at all* in the Advanced LIGO era. This is because we quickly realized that we need such a "CTRL" excitation at each stage of the QUAD's DARM actuation if we want to track the actuation strength of each stage of the QUAD separately, as we do now.

Now we want to re-invoke the "DARM" calibration lines to constantly measure the DARM open loop gain, G, or more specifically the loop suppression, 1/(1+G), so we can divide it *out* of an adjacent PCAL line measurement of the response function, C/(1+G). But, as always, we need two test points surrounding the excitation: the so-called "IN1" (just upstream of the excitation) and "IN2" (just downstream of the excitation) points. Of course, when measuring live, it doesn't matter where in the loop this IN1 + EXC = IN2 trifecta of channels sits; you'll get the same answer whether it's upstream or downstream of the DARM banks. BUT, while we already store DARM_ERR and DARM_OUT in the frames, either of which could equally serve as the "IN1" channel,

- there was no convenient test point to store after DARM_OUT,

- I wanted the calibration lines to mimic the location where the awg input DARM1_EXC is injected in the loop, i.e. in between DARM1_IN1 and DARM1_IN2, and

- I figured the DARM1_IN1 test point (which comes by default with the DARM1 standard filter module) is already there and has a more natural name.

So, I moved the summation point. When we analyze these calibration lines offline, we'll take the transfer functions between the following channels to get the following equivalent loop characterizations:

- H1:LSC-DARM_ERR_DQ / H1:LSC-DARM1_IN1_DQ == "IN1/IN2" == G

- H1:LSC-DARM1_IN1_DQ / H1:LSC-CAL_LINE_SUM_DQ == "IN2/EXC" == 1/(1 + G)

- H1:LSC-DARM_ERR_DQ / H1:LSC-CAL_LINE_SUM_DQ == "IN1/EXC" == G/(1 + G)

To the ECR process!
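The offline analysis described here boils down to ratios of complex line amplitudes. Below is a minimal numpy sketch. It assumes the three DQ channels and the PCAL line measurement are already loaded as equal-length arrays at a common sample rate; data fetching is not shown, and all function names are hypothetical. The channel pairings and labels are copied from the entry. The product (IN1/IN2)(IN2/EXC) = IN1/EXC holds regardless of the loop's sign convention, so it makes a handy consistency check. Dividing 1/(1+G) out of the PCAL measurement also assumes the DARM and PCAL lines are close enough in frequency that 1/(1+G) is effectively the same at both.

```python
import numpy as np


def demod(x, fs, f_line):
    """Complex amplitude of the f_line component of x (single-bin lock-in demodulation)."""
    n = np.arange(len(x))
    return 2.0 * np.mean(np.asarray(x, float) * np.exp(-2j * np.pi * f_line * n / fs))


def line_tf(num, den, fs, f_line):
    """Transfer function num/den evaluated at a single calibration-line frequency."""
    return demod(num, fs, f_line) / demod(den, fs, f_line)


def darm_loop_at_line(darm_err, darm1_in1, cal_line_sum, fs, f_line):
    """Return (G, 1/(1+G), G/(1+G)) using the channel pairings listed in the entry."""
    g = line_tf(darm_err, darm1_in1, fs, f_line)                # "IN1/IN2"
    suppression = line_tf(darm1_in1, cal_line_sum, fs, f_line)  # "IN2/EXC"
    closed_loop = line_tf(darm_err, cal_line_sum, fs, f_line)   # "IN1/EXC"
    return g, suppression, closed_loop


def divide_out_suppression(pcal_tf, suppression):
    """Remove 1/(1+G) from a PCAL-line measurement of C/(1+G).

    Assumes the DARM line and the PCAL line are close enough in frequency
    that 1/(1+G) is effectively the same at both.
    """
    return pcal_tf / suppression
```

For exact results from `demod`, each record should span an integer number of line cycles. With real data, a longer stretch keeps the leakage error small.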