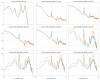

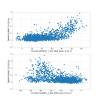

We have evidence that DARM noise in the 10-50 Hz region is modulated by residual DHARD_Y motion at 2.6 Hz.

At the same time, DHARD_Y no longer limits DARM through linear coupling above 10 Hz, thanks to the improved A2L (-30 dB).

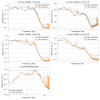

So there's room to test a new DHARD_Y controller that could give us 2x more suppression at 2.6 Hz, at the price of about 2x more noise above 10 Hz.

Attached is a proposed new controller that could be tested to see if DARM stationarity improves.

I loaded the new controller into FM1 as a multiplicative change to the previous controller, so the 2.6 Hz boost can be turned on by engaging FM1 (5 second ramp) together with the other FMs while locked.
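As a rough illustration of what "multiplicative" means here: with FM1 engaged in series, the total controller response is the product of the existing response and the FM1 response. The sketch below uses a generic resonant-gain stage as a stand-in for FM1. The function names, the Q value, the boost factor, and the flat placeholder for the old controller are all assumptions for illustration, not the installed filter design. In particular, the real controller also raises the response above 10 Hz, which this toy stage does not do.

```python
import math


def resonant_gain(f_hz, f0_hz=2.6, q=3.0, boost=2.0):
    """Hypothetical stand-in for FM1 (NOT the installed filter).

    Magnitude equals `boost` at f0_hz and tends to 1 far from f0_hz.
    """
    s = 1j * f_hz / f0_hz  # frequency normalized to the resonance
    return (s * s + boost * s / q + 1) / (s * s + s / q + 1)


def old_controller(f_hz):
    """Placeholder for the existing DHARD_Y controller (assumed flat)."""
    return 1.0 + 0.0j


def new_controller(f_hz):
    """Multiplicative change: filter modules in series multiply."""
    return old_controller(f_hz) * resonant_gain(f_hz)


for f in (1.0, 2.6, 10.0, 30.0):
    ratio_db = 20 * math.log10(abs(new_controller(f) / old_controller(f)))
    print(f"{f:5.1f} Hz: new/old = {ratio_db:+.2f} dB")
```

Because the change is multiplicative, disengaging FM1 recovers the previous controller exactly, which is what makes an on/off comparison while locked straightforward.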

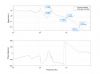

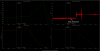

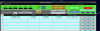

Tested on and off a couple of times while in NLN; the transition is smooth. The effect is shown in the attached spectrum:

- the 2.6 Hz peak is reduced as expected since there is more suppression

- the 1.4 Hz peak is also reduced, as predicted by the closed-loop gain model, since there is less gain peaking at that frequency
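Both observations follow from the standard closed-loop relation: a disturbance is multiplied by 1/(1+G), where G is the open-loop gain. A minimal sketch with made-up gain values (the numbers and the function name are illustrative assumptions, not the measured DHARD_Y loop):

```python
def disturbance_factor(open_loop_gain):
    """Factor |1 / (1 + G)| by which a closed loop scales a disturbance.

    Values below 1 mean suppression; values above 1 mean gain peaking.
    """
    return 1.0 / abs(1.0 + open_loop_gain)


# Illustrative values only, not taken from the real loop.
before = disturbance_factor(10 + 0j)    # 1/11: well inside the loop bandwidth
after = disturbance_factor(20 + 0j)     # 1/21: doubling G nearly doubles suppression
peaking = disturbance_factor(-0.5 + 0j)  # |G| = 0.5 at 180 deg phase: amplification

print(f"suppression improves by {before / after:.2f}x when G doubles")
print(f"gain peaking factor: {peaking:.2f}")
```

Residual motion is amplified wherever |1+G| < 1, so a design that moves the loop gain away from that region at a given frequency reduces the peak there even without adding gain.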

All in all the DHARD_Y RMS is reduced to 0.64 times the value with the old controller.
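For reference, an RMS ratio like this is obtained by integrating the power spectral density over frequency for each spectrum and taking the square root. The sketch below shows one way to do that with trapezoidal integration. The function name and the synthetic flat spectra are assumptions for illustration only, not the measured DHARD_Y data.

```python
import math


def rms_from_asd(freqs_hz, asd):
    """RMS from an amplitude spectral density.

    Integrates PSD = ASD**2 over frequency with the trapezoidal rule,
    then takes the square root.
    """
    total = 0.0
    for i in range(1, len(freqs_hz)):
        df = freqs_hz[i] - freqs_hz[i - 1]
        total += 0.5 * (asd[i] ** 2 + asd[i - 1] ** 2) * df
    return math.sqrt(total)


# Synthetic placeholder spectra (NOT real data): a flat "old" ASD and a
# "new" ASD scaled uniformly so the RMS ratio is known in advance.
freqs = [0.1 * k for k in range(1, 101)]
asd_old = [1.0 for _ in freqs]
asd_new = [0.64 * a for a in asd_old]

ratio = rms_from_asd(freqs, asd_new) / rms_from_asd(freqs, asd_old)
print(f"new/old RMS ratio: {ratio:.2f}")
```

With real spectra the reduction is concentrated in the peaks rather than spread uniformly, so the RMS ratio depends on the band of integration.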

I left this controller active in Observing and Cory accepted the SDF. We should take a look at DARM in the next few hours to see if 1) there is less non-stationary noise in the 20-50 Hz region and 2) the higher DHARD_Y control noise doesn't affect us.

I have updated ISC_LOCK to engage this controller when in LOWNOISE_ASC. I did not reload the ISC_LOCK code.

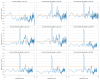

Attached is the FM1 diff from Gabriele's change this afternoon.

Gabriele has also made the corresponding FM1 change in ISC_LOCK, line 4490. Next time H1 is OUT of OBSERVING, please hit LOAD on ISC_LOCK.

ISC_LOCK has been re-loaded.

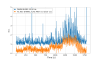

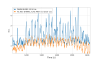

The new controller greatly reduced the DHARD_Y motion at 1.3 Hz and 2.6 Hz, lowering the RMS.

It looks like CHARD_Y could use a similar improvement, since it still has large peaks at 1 Hz and 2.6 Hz. The 1 Hz peak is due to gain peaking in the current design, and the loop has little gain at 2.6 Hz.

DARM still shows bicoherence with CHARD_Y.