Lockloss at 00:41. Detector moved itself out of Observing and into Commissioning, and then immediately lost lock. Cause not yet known.

TITLE: 07/23 Eve Shift: 23:00-07:00 UTC (16:00-00:00 PST), all times posted in UTC

STATE of H1: Observing at 150Mpc

CURRENT ENVIRONMENT:

SEI_ENV state: CALM

Wind: 13mph Gusts, 8mph 5min avg

Primary useism: 0.02 μm/s

Secondary useism: 0.05 μm/s

QUICK SUMMARY:

Taking over from Corey, detector has been Locked for 7hrs 53mins and is in Observing.

TITLE: 07/23 Day Shift: 15:00-23:00 UTC (08:00-16:00 PST), all times posted in UTC

STATE of H1: Lock Acquisition

SHIFT SUMMARY:

LOG:

Lance, Genevieve and I used some of the time on Friday, when LLO was down for logging, to continue our search for scattering noise sources.

Projects and progress:

1) Identify frequency of the post-damping ITMX cryobaffle to compare to scattering frequencies in DARM. We were not successful in exciting the cryobaffle to produce noise in DARM. That probably means that it is not one of the scatter noise sources.

2) Search for source of 52 Hz and possibly related 48 and 38 Hz peaks. We did not convince ourselves that we were actually exciting the source of the 52 Hz peak. Search to continue.

3) Search for scattering at viewports using a guillotine retroreflector. In past runs we have used a guillotine with black glass to check viewports for scattering, watching DARM to see if noise is reduced when the black glass is inserted. This run we are using a retroreflector guillotine, with the idea that dramatically increasing the noise by increasing the light returned to the scattering site will make a scatterer easier to find than looking for slight improvements in DARM when we insert black glass. The retroreflector guillotine is made of retroreflector discs and tape (see figure), which I tested to confirm that it works in the near IR.

Genevieve and Lance noticed that they could make noise in DARM when the retroreflector guillotine was inserted in viewports on the -X side of HAM6. Full analysis of all of the data will begin this week.

Nice and quiet shift for H1 with a range hovering at 150Mpc. There was a small seismic spike in the last hour, but no issues for H1.

Sun Jul 23 10:05:09 2023 INFO: Fill completed in 5min 5secs

LLCV just got to 100% when overfill flow started.

Once the violins were damped and OMC Whitening completed, I took ISC_LOCK to MANAGED (by H1_MANAGER). But H1_MANAGER did nothing to take H1 to NLN. I took it to INIT, but that did nothing since there is no path for it. I'm guessing there were some timers counting down?

At any rate, I was impatient to get back to OBSERVING, so I selected NLN on ISC_LOCK to get us back to OBSERVING.

Attached are the Guardians and their logs.

Since yesterday, I have noticed windows filling up the displays on the SQZ FOM nucs (see attached) along the lines of:

"Relogin or restarts required! Session running obsolete binaries or libraries as listed below. Please consider a relogin or restart of the affected processes! bash[12744419], medm[2592,1274430]"

These alert boxes are filling up the screens, so I am going to click/acknowledge them to restore visibility on these nucs.

(FRS Ticket 28611 submitted.)

TITLE: 07/23 Day Shift: 15:00-23:00 UTC (08:00-16:00 PST), all times posted in UTC

STATE of H1: Lock Acquisition

CURRENT ENVIRONMENT:

SEI_ENV state: CALM

Wind: 4mph Gusts, 2mph 5min avg

Primary useism: 0.01 μm/s

Secondary useism: 0.05 μm/s

QUICK SUMMARY:

In the waning minutes of ISC_LOCK waiting for the violins to damp in OMC_WHITENING, after Ryan did the recovery from the morning lockloss (ETMx's MODE4 is the one resonance not at nominal gain yet, but it's close). Winds are lower than they were yesterday morning.

TITLE: 07/23 Owl Shift: 07:00-15:00 UTC (00:00-08:00 PST), all times posted in UTC

STATE of H1: Lock Acquisition

SHIFT SUMMARY:

LOG:

No log

DCPD saturation then lockloss, no obvious reason.

STATE of H1: Observing at 146Mpc

We've been locked for 9:20, no issues aside from another MY low temperature alarm.

Briefly dropped out of Observing from 08:18:42 to 08:18:59 for an unknown reason; whatever SDF diff or Guardian node issue caused it resolved itself before I could open the medms.

Using the commands 'guardctrl log -a "07/23/2023 08:17 UTC" -b "07/23/2023 08:20 UTC" IFO' and 'guardctrl log -a "07/23/2023 08:17 UTC" -b "07/23/2023 08:20 UTC" DIAG_SDF' I can see that it was syscssqz that had 2 SDF diffs. Trending (see 71652) showed that these were H1:SQZ-FIBR_SERVO_COMGAIN and H1:SQZ-FIBR_SERVO_FASTGAIN, which changed from 20 to 17 and 15 back to 20 in ~0.3 seconds.
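The same time window can be queried for several nodes without retyping the timestamps. The following is a minimal sketch that only assembles the command lines; it uses nothing beyond the `-a` and `-b` options quoted above, and the helper name `guardctrl_log_cmd` is made up for illustration.

```python
import shlex


def guardctrl_log_cmd(node, after, before):
    """Return the argv list for one `guardctrl log` query.

    Only the -a (after) and -b (before) options quoted in this entry are used.
    """
    return ["guardctrl", "log", "-a", after, "-b", before, node]


# Window around the 08:18 UTC drop out of Observing.
window = ("07/23/2023 08:17 UTC", "07/23/2023 08:20 UTC")
commands = [guardctrl_log_cmd(node, *window) for node in ("IFO", "DIAG_SDF")]

for argv in commands:
    # shlex.join quotes the timestamps so each line can be pasted into a shell.
    print(shlex.join(argv))
```

Each argv list could also be handed to `subprocess.run` on a workstation where `guardctrl` is installed.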

Oli also saw this at 07/24 04:09 UTC (71652). Tagging SQZ; maybe we need to edit something or unmonitor these channels.
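To judge how often these gains step away from their set value, a trend can be scanned for short excursions. This is a rough sketch on synthetic data: the 16 Hz rate, the sample values, and the function name `excursions` are assumptions for illustration, not numbers taken from the real channels.

```python
def excursions(samples, nominal, rate_hz):
    """Return (start_s, duration_s) for each run of samples that differ from nominal."""
    runs = []
    start = None
    for i, value in enumerate(samples):
        if value != nominal and start is None:
            start = i  # excursion begins
        elif value == nominal and start is not None:
            runs.append((start / rate_hz, (i - start) / rate_hz))
            start = None  # excursion ended
    if start is not None:
        # Trend ended while still away from nominal.
        runs.append((start / rate_hz, (len(samples) - start) / rate_hz))
    return runs


# Synthetic trend: a gain sitting at 20 that steps to 17 for five samples.
trend = [20] * 8 + [17] * 5 + [20] * 8
print(excursions(trend, nominal=20, rate_hz=16))
```

With real data, `samples` would come from whatever tool is used to fetch the trend.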

TITLE: 07/23 Owl Shift: 07:00-15:00 UTC (00:00-08:00 PST), all times posted in UTC

STATE of H1: Observing at 148Mpc

CURRENT ENVIRONMENT:

SEI_ENV state: CALM

Wind: 9mph Gusts, 7mph 5min avg

Primary useism: 0.01 μm/s

Secondary useism: 0.05 μm/s

QUICK SUMMARY:

Taking over from Oli, we've been locked for 5:16

CDS/Vac/SEI/SUS/Dust looks good

Dropped out of Observing for 17 seconds 08:18:42 to 08:18:59, not sure why. When I opened up SDF and GRD_OVERVIEW seconds after hearing verbal call out Commissioning I didn't see anything, it had already cleared itself.

TITLE: 07/23 Eve Shift: 23:00-07:00 UTC (16:00-00:00 PST), all times posted in UTC

STATE of H1: Observing at 143Mpc

SHIFT SUMMARY:

23:00 Detector relocking (71617)

23:03 Lost lock at TURN_ON_BS_STAGE2

23:11 Lost lock at AQUIRE_DRMI_1F

23:13 Lost lock at LOCKING_ALS

23:22 Lost lock at ENGAGE_DRMI_ASC

23:24 H1_MANAGER decided to start INITIAL_ALIGNMENT

23:42 Disabled H1_MANAGER while in INITIAL_ALIGNMENT (paused 71619) because it was stuck at AQUIRE_SRY in ALIGN_IFO - we are skipping initial alignment and going straight to trying to lock again

~00:30 Dustmon in PSL reached 230 counts for particles >300nm. Going back down now.

00:49 Entered OMC_WHITENING, damping violins

1:45 Entered NOMINAL_LOW_NOISE

- SDF Diffs ACCEPTED for SUS-ITMX_L2_DRIVEALIGN_P2L_SPOT_GAIN and SUS-ETMY_L2_DRIVEALIGN_P2L_SPOT_GAIN (71618)

1:49 Entered Observing

6:35:02 Pushed out of Observing and into Commissioning - not sure why

6:35:28 I put us back into Observing with no issue

LOG:

| Start Time | System | Name | Location | Lazer_Haz | Task | Time End |
|---|---|---|---|---|---|---|
| 03:01 | VAC | Gerardo | CP1 | n | Check thermocouples | 03:07 |

Lockloss from NLN @ 22:38 7/22 - Not sure of cause yet, but winds were very high.

While trying to relock, we lost lock at:

presumably all from the wind.

Got back into Observing at 1:49 after spending 1 hour in OMC_WHITENING damping violins.

SDF Diffs ACCEPTED for SUS-ITMX_L2_DRIVEALIGN_P2L_SPOT_GAIN and SUS-ETMY_L2_DRIVEALIGN_P2L_SPOT_GAIN as requested by Jenne (71596)

After Gabriele made a major improvement on the DHARD Y coupling (alog 71588), since LLO was still working on relocking, I checked the A2L gains for the 3 test masses that we use for ADS during locking. The summary is that the change seemed to be small enough that it's not worth keeping long term, so I have not modified ISC_LOCK. I did accept the changed A2L gains for this lock, so next lock, the operator will see SDF diffs and need to accept them.

Rather than being fancy and using the ASC as a metric for when the decoupling is good, I used 'the old way' of dithering the suspensions using the ADS and changed the A2L gains to zero the error signals. (To see the ADS error signals I had to put the gains H1:ASC-ADS_[PIT,YAW][3,4,5]_DEMOD_I_GAIN to 1, because they are set to zero by the camera servo guardian).
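As an illustration of zeroing an error signal by adjusting a gain, here is a small sketch of a secant step. It assumes the demodulated error signal is roughly linear in the A2L gain near the zero crossing; the readings come from a made-up linear model (`measured_error`), not from the ADS, and this is not necessarily how the gains were actually stepped.

```python
def next_gain(g0, e0, g1, e1):
    """Secant step: gain where the line through two (gain, error) points crosses zero."""
    if e1 == e0:
        raise ValueError("error signal did not change between the two gains")
    return g1 - e1 * (g1 - g0) / (e1 - e0)


def measured_error(gain):
    # Hypothetical stand-in for reading a demodulated ADS error signal.
    return 0.8 * (gain - 1.25)


g0, g1 = 1.0, 1.5
guess = next_gain(g0, measured_error(g0), g1, measured_error(g1))
print(guess)
```

In practice the step would be repeated with fresh readings until the error signal sits within its noise.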

The first attachment is how the ADS error signals looked before I made any changes to the A2L gains for EX, EY, or IX. This is after Gabriele adjusted IY in alog 71588.

The second attachment is after I adjusted two of the P2L gains (the only two I ended up changing), to get the ADS error signals closer to zero.

The third attachment is the SDFs that I saved in the Observe.snap, that I did not put into lscparams/ISC_LOCK, so will need to be re-accepted next lock at the values that guardian will set them to.

The fourth attachment is a comparison of the spectra (Brown before I changed any A2Ls, Red after), where I don't see much of a difference, but maybe Brown is better-ish anyway, which is why I'm planning to leave the A2Ls alone in the guardian. But, we'll be able to see throughout this lock whether there is a change worth making.

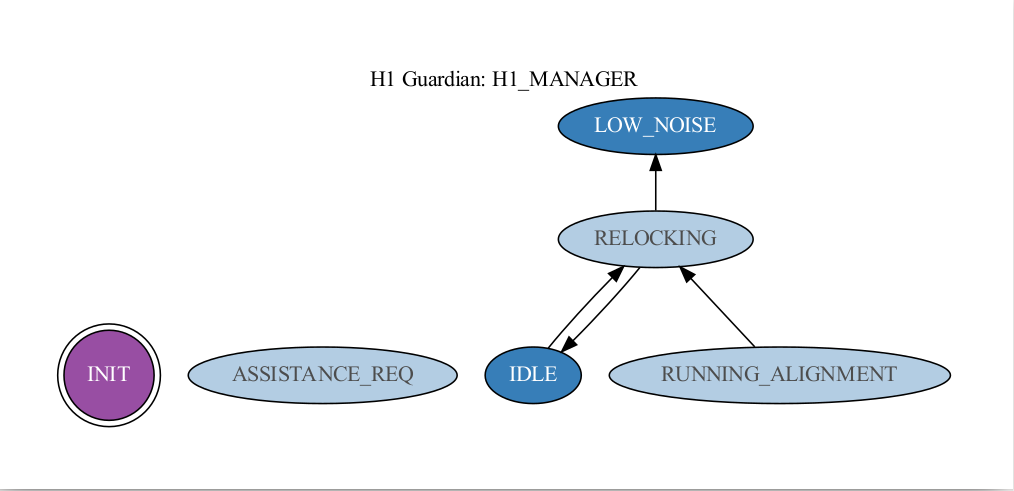

I'd like to start running the H1_MANAGER for the next few days in a testing phase. We can watch it and see what it does, then make tweaks as needed. The idea behind this node is to determine whether or not an initial alignment needs to be run and to flag for help when a human should intervene. Here's a quick rundown on how it works:

There are three main states that users will select: LOW_NOISE, IDLE, INIT.

To Engage the node - Select LOW_NOISE, then click INIT. It should determine if we are relocking, running an alignment, or if we are already in low noise.

To Disable the node - Select IDLE. Verify it gets there.

If the node has determined that it needs help, it will enter the ASSISTANCE_REQ state and it will stay there until someone does the following:

To Re-engage from ASSISTANCE_REQ - Select INIT, then select your final desired state.
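The request rules above can be sketched as a toy model. This is not the Guardian code for H1_MANAGER: the class `ManagerModel`, its methods, and the way INIT is resolved are assumptions made for illustration, and intermediate states such as RUNNING_ALIGNMENT are not modeled.

```python
class ManagerModel:
    """Toy model of the operator request rules; not the real Guardian node."""

    SELECTABLE = ("LOW_NOISE", "IDLE", "INIT")

    def __init__(self):
        self.request = "IDLE"  # last final state the operator asked for
        self.state = "IDLE"    # where the node currently sits

    def flag_for_help(self):
        # The node has decided that a human should intervene.
        self.state = "ASSISTANCE_REQ"

    def select(self, name):
        if name not in self.SELECTABLE:
            raise ValueError(f"{name} is not user-selectable")
        if name == "INIT":
            # INIT re-evaluates and heads for the standing request,
            # which also releases ASSISTANCE_REQ.
            self.state = self.request
        else:
            self.request = name
            if self.state != "ASSISTANCE_REQ":
                self.state = name
        return self.state


node = ManagerModel()
node.select("LOW_NOISE")
node.select("INIT")        # engage
engaged = node.state

node.flag_for_help()
node.select("IDLE")        # ignored while assistance is required
stuck = node.state

node.select("INIT")        # re-engage, then pick the final state
node.select("LOW_NOISE")
recovered = node.state
print(engaged, stuck, recovered)
```

The point of the sketch is the latch: once in ASSISTANCE_REQ, selecting a final state alone does nothing until INIT has been selected.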

I had tried disabling H1_MANAGER while it was in RUNNING_ALIGNMENT by selecting IDLE, and also by selecting IDLE and then INIT, but neither worked in that situation. We had to pause H1_MANAGER to be able to get out of INITIAL_ALIGNMENT (was stuck at AQUIRE_SRY and kept forcing us into initial alignment).

Once we were sure that we had the detector moving up through the locking sequence well, we wanted to try re-enabling H1_MANAGER hopefully without it taking us back into RUNNING_ALIGNMENT, so while it was still Paused, we selected LOW_NOISE and then INIT, and then unpaused it and it went straight to LOW_NOISE and everything went well after that!

Got back to NOMINAL_LOW_NOISE at 2:25, and went back into Observing at 2:26. Damping violins for only 50 minutes!!!!

I wasn't able to find anything about what could have caused this lock loss, but I did notice something that I found interesting, although there might be a very obvious explanation for it.

Looking at the H1:ASC-AS_A_DC_NSUM_OUT16 and H1:SQZ-LO_SERVO_ERR_OUT_DQ channels (Attachment 1), the H1:SQZ-LO_SERVO_ERR_OUT_DQ channel first sees the lockloss only 22ms after it starts. This is still during the initial small change in slope of ASC-AS_A_DC that leads into the large slope and subsequent dropoff.

I've also attached the ISC_LOCK and SQZ_MANAGER logs from when the lockloss occurred. For the last four locklosses that we have had (possibly more before that), SQZ_MANAGER has given us the 'SQZ ASC AS42 not on?? Please RESET_SQZ_ASC' message ~0.1 seconds after the lockloss started. At least among the ASC and LSC channels that come up in 'lockloss select', none seem to respond to a lockloss that quickly.

Nice alog Oli. If you use the H1:ASC-AS_A_DC_NSUM_OUT_DQ channel (sampled at 2kHz rather than 16Hz) and zoom in on the y-axis, you can see that the AS_A channel actually sees a discrete bump and runs away before the SQZ_LO_SERVO shows its glitch, see attached. The SQZ-LO is locked to the OMC TRANS 3MHz sideband, so it is probably a sign that the light at the OMC has changed.
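The sample rate matters here because the 22 ms delay quoted earlier is shorter than one sample of the 16 Hz channel. A quick check of the arithmetic, taking the quoted "2kHz" at face value as 2000 Hz (an assumption; the actual rate may differ slightly), with a made-up helper for locating a first threshold crossing in synthetic data:

```python
def sample_period_ms(rate_hz):
    """Time between consecutive samples, in milliseconds."""
    return 1000.0 / rate_hz


def first_crossing_time(samples, rate_hz, threshold):
    """Time in seconds of the first sample whose magnitude exceeds threshold, else None."""
    for i, value in enumerate(samples):
        if abs(value) > threshold:
            return i / rate_hz
    return None


slow_ms = sample_period_ms(16)    # OUT16 channel
fast_ms = sample_period_ms(2000)  # DQ channel, assuming exactly 2 kHz
delay_ms = 22.0                   # delay quoted in the original entry

print(slow_ms, fast_ms, delay_ms / fast_ms)

# Synthetic step that starts 44 samples into a 2 kHz record.
record = [0.0] * 44 + [1.0] * 10
print(first_crossing_time(record, 2000, threshold=0.5))
```

So a 22 ms ordering cannot be resolved in the 16 Hz data, while at 2 kHz it spans dozens of samples.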

The messages in the log about SQZ AS42 appear because we have already lost lock but ISC_LOCK hasn't yet realized it: see the first "refined time" plot in the lock loss tool, where ISC_LOCK_STATE_N shows the lockloss nearly 1 second after H1:ASC-AS_A_DC_NSUM_OUT_DQ sees it happen. Is this longer than usual? We expect the SQZ AS42 not to be able to be on when we are unlocked. Tagging SQZ.