STATE of H1: Observing at 148Mpc

Everything seems stable, we've been locked and Observing for 9 hours

TITLE: 07/21 Eve Shift: 23:00-07:00 UTC (16:00-00:00 PST), all times posted in UTC

STATE of H1: Observing at 144Mpc

CURRENT ENVIRONMENT:

SEI_ENV state: CALM

Wind: 9mph Gusts, 7mph 5min avg

Primary useism: 0.01 μm/s

Secondary useism: 0.04 μm/s

QUICK SUMMARY:

TITLE: 07/20 Eve Shift: 23:00-07:00 UTC (16:00-00:00 PST), all times posted in UTC

STATE of H1: Observing at 145Mpc

SHIFT SUMMARY:

- Started off the shift with a lockloss - cause unknown

- Reacquisition was automated, but we lost lock at TRANSITION FROM ETMX (EY/IX saturation)

- Acquired NLN/OBSERVE @ 2:05

- I took spectra of the 2nd harmonic violin modes. One current theory for why the violins are ringing up is that the 2nd order harmonics couple into the first and fundamental modes; scope attached. Tagging SUS

- Note: The new H1 manager node we are testing automatically sets the requested state to NLN; however, due to the violin issue, we have to wait in OMC WHITENING

- Leaving H1 to Ryan C. with the IFO locked and in observing for just under 5 hours

LOG:

| Start Time | System | Name | Location | Lazer_Haz | Task | Time End |
|---|---|---|---|---|---|---|
| 23:45 | VAC | Gerardo | EY | N | Check vacuum gauge | 00:12 |

Following a lockloss (cause unknown), we are back in Observing after successfully damping violins in OMC WHITENING. Systems seem stable, low ground motion/wind.

The SRC alignment step of initial alignment has been failing for the last 8 days, and for a few more days before that. The SR2 alignment step runs, and then an operator moves SRM to increase the ASC-AS_A peaks and improve the AS air camera spot. Even though these have all looked good recently, it hasn't been enough to get the SRCL trigger signal above its thresholds so that SRCL can lock. Operators have been skipping this step without problems, so the alignment must be at least passable.

If this sounds familiar, it's because we have raised and lowered these thresholds in the past (alog70013 has a good summary). I think the alignment of the entire IFO changes over time, so depending on the alignment we sometimes can't get the SRCL input high enough. Rather than changing these thresholds every few months, we could have some code lower the trigger thresholds if other conditions look good (ASC-AS_A, for example). I'll try this out tomorrow to see whether SRCL will lock with our current alignment while still avoiding the situation we had before, where we would get false locks and wildly swing SRM.
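A minimal sketch of the "lower the thresholds only when other conditions look good" idea. The threshold numbers, the AS_A level, and the function name `choose_srcl_thresholds` are all hypothetical placeholders. This is not the ALIGN_IFO guardian code. Requiring both a good ASC-AS_A level and an SRCL trigger signal that already reaches the relaxed level is one way to reduce the risk of false locks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    on: float   # SRCL trigger-on level (hypothetical units)
    off: float  # SRCL trigger-off level


# Hypothetical nominal and relaxed settings; the real numbers live in the
# guardian code and are not reproduced here.
NOMINAL = Thresholds(on=1.0, off=0.5)
RELAXED = Thresholds(on=0.6, off=0.3)


def choose_srcl_thresholds(as_a_sum: float, as_a_min: float,
                           srcl_trigger: float) -> Thresholds:
    """Pick the SRCL trigger thresholds for the SRC alignment step.

    The thresholds are relaxed only when ASC-AS_A says the alignment looks
    good AND the SRCL trigger signal already reaches the relaxed on-level.
    Requiring both is meant to avoid the false locks that swung SRM.
    """
    alignment_ok = as_a_sum >= as_a_min
    trigger_close = srcl_trigger >= RELAXED.on
    if alignment_ok and trigger_close:
        return RELAXED
    return NOMINAL


print(choose_srcl_thresholds(as_a_sum=1.2, as_a_min=1.0, srcl_trigger=0.7))
```

If either condition fails, the sketch falls back to the nominal thresholds. Any false-lock protection that depends on those thresholds is therefore unchanged in that case.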

Lockloss @ 23:25, unknown cause - not seeing much evidence of any ASC/LSC ringup.

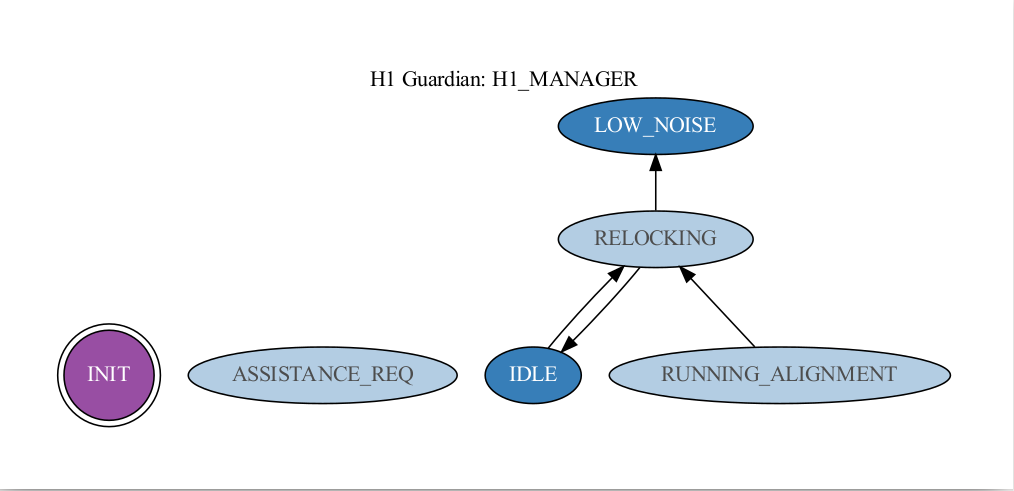

I'd like to start running the H1_MANAGER for the next few days in a testing phase. We can watch it and see what it does, then make tweaks as needed. The idea behind this node is to determine whether or not an initial alignment needs to be run and to flag for help when a human should intervene. Here's a quick rundown on how it works:

There are three main states that users will select: LOW_NOISE, IDLE, INIT.

To Engage the node - Select LOW_NOISE, then click INIT. It should determine if we are relocking, running an alignment, or if we are already in low noise.

To Disable the node - Select IDLE. Verify it gets there.

If the node has determined that it needs help, it will enter the ASSISTANCE_REQ state and it will stay there until someone does the following:

To Re-engage from ASSISTANCE_REQ - Select INIT, then select your final desired state.
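The operator rules above can be summarized as a small state-transition function. This is a simplified sketch of the *intended* behaviour only. It is not the H1_MANAGER guardian code, and it does not reproduce the IDLE problem described next. `RELOCKING` is a hypothetical state name, and the two booleans stand in for whatever checks the real node makes.

```python
from enum import Enum


class State(Enum):
    IDLE = "IDLE"
    INIT = "INIT"
    LOW_NOISE = "LOW_NOISE"
    RELOCKING = "RELOCKING"            # hypothetical name
    RUNNING_ALIGNMENT = "RUNNING_ALIGNMENT"
    ASSISTANCE_REQ = "ASSISTANCE_REQ"


def init_decision(in_low_noise: bool, needs_alignment: bool) -> State:
    """What INIT decides: already in low noise, run an alignment, or relock."""
    if in_low_noise:
        return State.LOW_NOISE
    if needs_alignment:
        return State.RUNNING_ALIGNMENT
    return State.RELOCKING


def handle_request(current: State, request: State,
                   in_low_noise: bool, needs_alignment: bool) -> State:
    """Intended response of the node to one operator request."""
    if current is State.ASSISTANCE_REQ and request is not State.INIT:
        return State.ASSISTANCE_REQ  # sticky until someone selects INIT
    if request is State.IDLE:
        return State.IDLE            # disable the node
    if request is State.INIT:
        return init_decision(in_low_noise, needs_alignment)
    return current                   # e.g. LOW_NOISE only sets the goal
```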

I tried disabling H1_MANAGER while it was in RUNNING_ALIGNMENT by selecting IDLE, and also by selecting IDLE and then INIT, but neither worked in that situation. We had to pause H1_MANAGER to get out of INITIAL_ALIGNMENT (it was stuck at AQUIRE_SRY and kept forcing us back into initial alignment).

Once we were sure the detector was moving up through the locking sequence well, we wanted to re-enable H1_MANAGER, hopefully without it taking us back into RUNNING_ALIGNMENT. While it was still paused, we selected LOW_NOISE and then INIT, then unpaused it. It went straight to LOW_NOISE, and everything went well after that!

Finally got around to looking more closely at the ITMX coil driver oscillations that caused a lockloss on July 9th. We have channels that report the voltage and current used by each actuator, and looking at those time series it's pretty obvious that the ST2 H3 coil was the culprit. The first attached plot shows ASDs of the ST2 current (top) and voltage (bottom) monitor channels. This was taken right after the ISI watchdog trip, when the front end wasn't requesting any drive, yet for some reason this particular coil was spewing a lot of noise. Both the H3 current and voltage are many times larger than those of the other ST2 coils. There was enough drive coming out of this one actuator that it was shaking ST1 as well.
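A minimal sketch of how one noisy coil could be picked out automatically from the monitor data. It assumes the samples for each coil on a stage have already been fetched; fetching is not shown. Coil names other than H3 are illustrative, and the factor of 5 over the stage median is an arbitrary choice. Comparing against the median keeps a single bad coil from inflating its own reference level.

```python
import math
import statistics


def rms(samples):
    """Root-mean-square of a sequence of monitor samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def find_noisy_coils(channels, factor=5.0):
    """Return coils whose RMS exceeds `factor` times the stage median RMS.

    `channels` maps a coil name (e.g. "H3") to a list of samples from its
    current or voltage monitor, all taken over the same time span.
    """
    levels = {name: rms(data) for name, data in channels.items()}
    median = statistics.median(levels.values())
    return sorted(name for name, level in levels.items()
                  if level > factor * median)


quiet = [1.0, -1.0] * 10
loud = [50.0, -50.0] * 10
stage2 = {"H1": quiet, "H2": quiet, "H3": loud,
          "V1": quiet, "V2": quiet, "V3": quiet}
print(find_noisy_coils(stage2))  # ['H3']
```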

The second attached plot shows time series for an ST1 actuator and for ST2 H3 leading up to the trip; I think the ST2 H3 actuator is yellow on both plots. About 40 seconds before the trip, the voltage for the ST2 H3 actuator starts behaving strangely and gets worse until the ISI finally trips. The current for this actuator doesn't show this behavior until after the ISI trips; I don't really understand that.

We have an ECR to remove these DQ channels to try to free up some MB/s, and just keep some equivalent EPICS monitors. I don't think this presents an argument against removing them; the EPICS time series are just as clear as the full data channels in showing there was a problem. I would like to add the EPICS monitors to our ISI coil driver overview screens, which would make finding this issue easier in the future. We could also consider a DIAG_MAIN test or something similar, but only if this starts becoming a problem; I think we have seen this twice since aLIGO install started.

TITLE: 07/20 Day Shift: 15:00-23:00 UTC (08:00-16:00 PST), all times posted in UTC

STATE of H1: Observing at 146Mpc

CURRENT ENVIRONMENT:

SEI_ENV state: CALM

Wind: 7mph Gusts, 5mph 5min avg

Primary useism: 0.03 μm/s

Secondary useism: 0.04 μm/s

QUICK SUMMARY:

First thing in the morning, Livingston called to say there is disruptive logging preventing them from locking.

LHO has taken this target of opportunity to do some commissioning.

Lockloss 18:14 UTC

https://alog.ligo-wa.caltech.edu/aLOG/index.php?callRep=71553

Relocking: went really well with H1 Manager; got up to OMC_WHITENING without any intervention.

19:25 UTC Lockloss due to a button pusher pushing a button.

https://alog.ligo-wa.caltech.edu/aLOG/index.php?callRep=71568

Lockloss again, likely due to poor alignment.

https://alog.ligo-wa.caltech.edu/aLOG/index.php?callRep=71568

Started an initial alignment

Got stuck in SRC align again; skipped it by just taking ISC_LOCK to INIT and requesting NOMINAL_LOW_NOISE.

Once ISC_LOCK was moving up in states, it didn't have any problems achieving lock.

H1 Manager re-enabled

Made it back to OMC_WHITENING @ 21:35

NLN reached at 22:22UTC and Observing at 22:28

| Start Time | System | Name | Location | Lazer_Haz | Task | Time End |
|---|---|---|---|---|---|---|
| 13:46 | ISC | Ryan S | CR | - | MICHFF measurement | 13:55 |
| 14:40 | ISC | Gabriele | Remote | - | MICHFF filter change | 15:00 |
| 15:01 | FAC | Ken | OSB Rec | - | Lights (scissor lift) | 17:01 |
| 16:19 | PEM | Robert & Crew | LVEA | N | PEM Work | 19:38 |
| 16:19 | Commish | Gabriele | Remote | N | Feed forward adjustment | 16:25 |
| 16:28 | FAC | Karen | Optics lab | N | Technical Cleaning | 16:58 |
| 17:06 | SQZ | Vicky | Remote | N | SQZr work | 18:15 |
| 17:23 | FAC | Karen | Woodshop | N | Technical cleaning | 17:33 |
| 18:27 | SEI | Jim | HAM8 | N | Looking for a location to mount new tools | 18:30 |
| 19:54 | FAC | Randy | EY & EX | N | Mech Room Work | 21:36 |
| 20:46 | Ops | Ibrahim | VPW & Staging | N | Taking a \ | 21:46 |
| 21:02 | Receiving | Christina | OSB Receiving | N | Rolling up the roll-up doors in the Receiving room | 21:47 |
| 21:40 | Receiving | Christina | H2 building | N | Inventory | 22:40 |
| 21:47 | SRC | Gabriele | Remote | N | SRC FF Adjustments | 22:26 |

TITLE: 07/20 Eve Shift: 23:00-07:00 UTC (16:00-00:00 PST), all times posted in UTC

STATE of H1: Observing at 147Mpc

CURRENT ENVIRONMENT:

SEI_ENV state: CALM

Wind: 8mph Gusts, 5mph 5min avg

Primary useism: 0.02 μm/s

Secondary useism: 0.04 μm/s

QUICK SUMMARY:

- H1 has been locked and observing for 0:30

- CDS/SEI/DMs ok

Following up on the discovery that the 1.34Hz peak in DARM was injected by the SRCLFF, I implemented a more aggressive high-pass with a 1.34 Hz notch in the SRCLFF path.

The new high-pass and notch completely remove the 1.34 Hz peak from DARM and improve the DARM noise in the 1-2 Hz region. The reduction in RMS is also visible in the time trace of the DARM error signal.

As expected, the new high-pass introduces a bit of phase rotation at 10-20 Hz, which limits the amount of subtraction we can now get with the SRCL FF. I also tried to retune the SRCL FF with a new measurement, but I could not improve the subtraction, because I first need a new measurement of the SRCLFF-to-DARM path with the new high-pass and notch in place. I did not have time to do that today, because the violin modes had damped and we needed to go back to Observe. It should be an easy fix, though (10-minute measurement + retuning + 10-minute test).
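The trade-off described above can be illustrated with a short frequency-response sketch: a notch at 1.34 Hz cascaded with a high-pass. The alog does not give the actual FM1-FM3 designs, so the filter order, the 3 Hz high-pass corner, and the notch Q of 10 here are all assumptions. The sketch only shows the qualitative behaviour. The notch nulls the 1.34 Hz line, the magnitude returns to ~1 well above the corner, and the high-pass still leaves a nonzero phase shift at 10-20 Hz. That phase shift is what degrades the feedforward subtraction until the SRCLFF-to-DARM path is remeasured.

```python
import cmath
import math


def notch(f, f0=1.34, q=10.0):
    """Second-order analog notch centred at f0 (Hz) with quality factor q."""
    s = 2j * math.pi * f
    w0 = 2 * math.pi * f0
    return (s * s + w0 * w0) / (s * s + (w0 / q) * s + w0 * w0)


def highpass(f, fc=3.0):
    """Second-order Butterworth high-pass with corner frequency fc (Hz)."""
    s = 2j * math.pi * f
    wc = 2 * math.pi * fc
    return (s * s) / (s * s + math.sqrt(2) * wc * s + wc * wc)


def srclff_shaping(f):
    """High-pass and notch in series, as two filter modules would be."""
    return highpass(f) * notch(f)


for f in (1.34, 10.0, 15.0, 20.0, 100.0):
    h = srclff_shaping(f)
    print(f"{f:6.2f} Hz  |H| = {abs(h):.3f}  "
          f"phase = {math.degrees(cmath.phase(h)):6.1f} deg")
```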

However, looking at the coherence between DARM and SRCL, I don't see a lot of it, so maybe we're fine with this reduced level of SRCL subtraction.

The new SRCLFF combination to be used is FM1, FM2, FM3 (all together). ISC_LOCK has been updated and reloaded.

SDF changes for H1:LSC-SRCFF1 were accepted after speaking with Gabriele.

Screenshot attached.

Randy had pointed out a few months ago that a section of piping coming out of the end station HEPI pumps should probably get some extra support. While the control room was relocking today, he added a bit of Unistrut to the stand for the reservoir; the attached photo shows how he attached it to the foot of the reservoir stand. This was only needed for the end station pumps, since the corner pumps have a much shorter run of pipe to the next support clamp.

Daniel, Camilla

Following on from Ryan's 71420, we looked at the movement of ITMY (which only has ASC control) during DHARD_WFS and see that there are now larger spikes during locking than before June 29th. In the attached plot, the H1:SUS-ITMY_L2_OSEMINF_{U,L}{L,R}_INMON spikes during locking are larger after the t-cursor (the bottom purple plot shows when the violins rang up).

Zooming in (attached plot), we can see that when the input to the DHARD_Y filter is turned on, the DHARD_Y filter output has a large transient lasting ~1 second, which is not seen in the DHARD_Y INMON. The only filter on during that time is FM6. Although FM6 has a larger step response, there is a 5 s TRAMP, so Daniel doesn't see an issue with this.

Nothing obvious happened to DHARD on the 29th; the only alog around that date shows a change that was reverted (70928).

During relock on Tuesday we plan to increase this DHARD_Y ramp time (currently 5 s) to see if we can reduce this transient, which appears to have increased by a factor of 10 around June 29th. See the attached plots of relocks before and after the violins rang up.
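The expectation that a longer TRAMP reduces the turn-on transient can be checked with a toy model. This sketch assumes the relevant path behaves like a first-order high-pass τs/(τs+1) with τ = 1 s, and that TRAMP is a linear gain ramp. The real FM6 shape is not given here, so this illustrates the scaling only and is not a model of DHARD_Y. During a ramp of length T the output rises to (τ/T)(1 - e^(-T/τ)). For T much larger than τ, the peak therefore drops roughly in proportion to 1/T. A forward-Euler simulation is included as a cross-check of that formula. The model says nothing about why the transient grew by a factor of 10.

```python
import math


def peak_analytic(tramp, tau=1.0):
    """Peak output of a first-order high-pass tau*s/(tau*s + 1) when its
    input is ramped linearly from 0 to 1 over tramp seconds.

    During the ramp the output is (tau/tramp) * (1 - exp(-t/tau)); it is
    largest at t = tramp and decays once the ramp ends.
    """
    return (tau / tramp) * (1.0 - math.exp(-tramp / tau))


def peak_simulated(tramp, tau=1.0, dt=1e-3, t_end=30.0):
    """Same quantity from a simple forward-Euler time-domain simulation."""
    y, x_prev, peak = 0.0, 0.0, 0.0
    for n in range(1, int(t_end / dt) + 1):
        x = min(n * dt / tramp, 1.0)             # linear gain ramp, then hold
        y = y * (1.0 - dt / tau) + (x - x_prev)  # dy/dt = -y/tau + dx/dt
        x_prev = x
        peak = max(peak, abs(y))
    return peak


for tramp in (5.0, 15.0):
    print(tramp, round(peak_analytic(tramp), 4), round(peak_simulated(tramp), 4))
```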

There were some SDF Diffs (71670) for H1:ASC-DHARD_Y_TRAMP and H1:ASC-DHARD_P_TRAMP that I accepted, which bumped the ramp times up to 15 seconds.

As reported in alog71528, the ETM cameras were frozen last night, and the camera guardian went to WAIT FOR CAMERA state and came back in 1 min. This process was repeated three times as shown in the attached figure.

Looking back at the guardian log, the ETMX camera (PIT2, YAW2) was flagged as frozen. I also looked at the VALID channels for the ETMX/ETMY cameras mentioned in alog71460 (H1:VID-CAM25_VALID for ETMX, H1:VID-CAM27_VALID for ETMY). The VALID channels for ETMX/ETMY were 1 at that time, which means that the cameras were not actually frozen. So we can probably use the VALID channel to solve the short camera freeze issue for ETMX/ETMY. Unfortunately, the BS camera does not have a VALID channel, since the ETMX/ETMY cameras run on h1digivideo3 while the BS camera runs on h1digivideo2.

It may be that the VALID channel itself was frozen, but I'm not certain how likely that is or how best to tell. Maybe if there were a way to put a dither on the light the camera sees, but that would interfere with the servo. The best solution might be to put the cameras used for servos on Beckhoff; the issue there is that Beckhoff is not well suited to providing a remote camera image display. We could additionally add a keep-alive signal that alternates between 0 and 1.
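A minimal sketch of how the VALID channel and the proposed keep-alive could be combined in a freeze check. In this sketch, constant PIT/YAW readings count as a freeze only if VALID is not 1 or the keep-alive bit has stopped toggling. A stuck keep-alive would also suggest that VALID itself may be stale. The function name and the heartbeat input are hypothetical. The keep-alive channel does not exist yet, and this is not the camera guardian's actual logic.

```python
def camera_frozen(pit_yaw, valid, heartbeat):
    """Decide whether a servo camera should be treated as frozen.

    pit_yaw   : recent PIT/YAW readings from the camera
    valid     : latest value of the camera's VALID channel (1 = valid)
    heartbeat : recent samples of a keep-alive bit that should toggle 0/1
    """
    if len(set(pit_yaw)) > 1:
        return False  # readings are still changing, so not frozen
    heartbeat_alive = any(a != b for a, b in zip(heartbeat, heartbeat[1:]))
    # Constant readings are only a freeze if the camera reports invalid
    # data or the keep-alive has stopped (VALID could then be stale too).
    return valid != 1 or not heartbeat_alive


# Constant readings, VALID = 1, keep-alive toggling: not a real freeze.
print(camera_frozen([0.5, 0.5, 0.5], 1, [0, 1, 0, 1]))  # False
```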

We looked at some filter cavity detunings and slightly varied the generated squeezing level to see whether we could change the noise around 70-100 Hz at all. It's not entirely clear what's going on; there are no huge obvious changes, but perhaps subtle ones. Even at low frequencies (< 100 Hz), it seems that higher NLG is slightly better, and that moving the FC detuning to -25 Hz is not obviously worse. To start, I just tried moving the FC detuning up/down by 5 Hz and rotating the sqz angle to see if anything was better; see the trends of the moves here.

From the screenshot: red + cyan are from the previous hot OM2 period on 6/28. The other traces are from the recent heating of OM2; the recent no-sqz reference seems to have other injections going on at the same time. There may be a slight difference between the brown FDS (before moving anything), the yellow FDS (FC detuning moved from -30 Hz to -25 Hz, NLG slightly increased), and the dark blue FDS (less NLG, same -25 Hz FC detuning, apparently a different sqz angle).

For now, I have left the squeezer in the "yellow" configuration from above (previously we were in the "brown" configuration). The differences are 90 uW OPO trans (compared to 85 uW) and filter cavity detuning at -25 Hz (instead of -30 Hz). The squeeze angle is the same, 145 deg. In SDF, I've accepted the FC detuning change and the OPO temp change. I think the difference might be marginal at best, but I'm interested to see how it does once the IFO is thermalized. The screenshot here shows ~2 hours into the lock (black trace), which looks similar to the yellow.
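For reference, the textbook relations between OPO nonlinear gain and generated squeezing help put "higher NLG" in context. This sketch assumes NLG is the seed amplification gain, NLG = 1/(1 - x)², with x = √(P/P_thr). It also assumes a lossless OPO evaluated well below its linewidth. The alog reports OPO transmitted power (85 vs 90 uW) rather than NLG, and converting between them needs a calibration not given here. The NLG values in the loop are therefore arbitrary examples.

```python
import math


def pump_parameter(nlg):
    """x = sqrt(P_pump / P_threshold), from NLG = 1 / (1 - x)**2."""
    return 1.0 - 1.0 / math.sqrt(nlg)


def generated_db(nlg):
    """Generated (squeezing, anti-squeezing) in dB for a lossless OPO,
    evaluated well below the OPO linewidth."""
    x = pump_parameter(nlg)
    sqz = ((1.0 - x) / (1.0 + x)) ** 2
    asqz = ((1.0 + x) / (1.0 - x)) ** 2
    return 10.0 * math.log10(sqz), 10.0 * math.log10(asqz)


for nlg in (5.0, 10.0, 15.0):
    s, a = generated_db(nlg)
    print(f"NLG {nlg:4.1f}: x = {pump_parameter(nlg):.3f}, "
          f"sqz = {s:6.2f} dB, asqz = {a:5.2f} dB")
```

Higher NLG increases both the generated squeezing and the anti-squeezing. With real losses and phase noise, the anti-squeezing can limit the benefit, which may be one reason the observed differences are subtle.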

First Lockloss of the Day: 18:14 UTC

The lockloss tool failed to analyze this lockloss.

https://ldas-jobs.ligo-wa.caltech.edu/~lockloss/index.cgi?event=1373912078
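The event number in the lockloss tool URL appears to be a GPS time. Converting it reproduces the 18:14 UTC logged above, and 1373916371 likewise gives 19:25 UTC. A minimal stdlib conversion is sketched here. It hard-codes the 18 s GPS-UTC leap-second offset, which is only valid for times after 2017-01-01. For general use, gwpy's `tconvert` handles leap seconds properly.

```python
from datetime import datetime, timedelta, timezone

GPS_EPOCH = datetime(1980, 1, 6, tzinfo=timezone.utc)
# GPS runs ahead of UTC by the accumulated leap seconds: 18 s since
# 2017-01-01. This constant is wrong for earlier times.
GPS_MINUS_UTC = 18


def gps_to_utc(gps_seconds):
    """Convert a GPS time in seconds to a timezone-aware UTC datetime."""
    return GPS_EPOCH + timedelta(seconds=gps_seconds - GPS_MINUS_UTC)


print(gps_to_utc(1373912078))  # 2023-07-20 18:14:20+00:00
```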

This happened while the PEM crew were potentially working around sensitive areas of HAM2, so it could possibly have been them.

Screenshot of the lockloss select ndscopes attached.

The second lockloss, at 19:25 UTC, was certainly caused by a button being pressed.

https://ldas-jobs.ligo-wa.caltech.edu/~lockloss/index.cgi?event=1373916371

"When I set H1:LSC-MICHFF_GAIN back to 1 from zero, we lost lock

This is because I had FM7 and FM6 on in that filter bank, when I should only have had FM7."

~The button pusher.

The third lockloss came immediately afterwards, from OFFLOAD_DRMI, and was almost certainly caused by poor alignment.

https://ldas-jobs.ligo-wa.caltech.edu/~lockloss/index.cgi?event=1373916371

Derek noticed (via the summary pages) that there seems to potentially be an occasional discrepancy between CALIB_STRAIN_NOLINES and CALIB_STRAIN_CLEAN.

The NonSENS subtraction should be off. The first attachment shows that indeed the noise estimate output by the front end NonSENS is zeros to the 1e-27 level (these are in the same strain units as GDS-CALIB, and I just didn't bother scrolling in more to see how 'zero' I can make the y-axis) during a time when the discrepancies are visible on the summary page.

A next step is likely to zoom in on the time axis of the spectrograms from the summary page to see when these things are happening, and to see what else might be changing around those times (e.g., are those the beginnings of Observe segments, or transitions in/out of NLN_CAL_MEAS?). Since the output of the noise estimate from the front end is zeros, _NOLINES and _CLEAN *should* be identically the same.

I investigated this issue further by looking at the data for the NOLINES and CLEAN strain channel between 6 and 8 UTC. This time period contained multiple times where the summary pages indicated that NOLINES and CLEAN were not identical (see attached plot).

When directly comparing the data for these channels saved in the H1_HOFT_C00 frames, I find no differences between the two channels. The difference noted on the summary pages appears to be a summary page issue/bug, and not an issue with the strain data.
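The direct comparison described above amounts to a sample-by-sample check. Unlike a spectral view, it cannot hide or create differences through averaging or plotting. A minimal sketch follows, assuming the two channels have already been read from the H1_HOFT_C00 frames into equal-length sequences covering the same span. Reading the frames is not shown, and the function name is hypothetical.

```python
def compare_channels(a, b):
    """Return (number of differing samples, max absolute difference)."""
    if len(a) != len(b):
        raise ValueError("channels have different lengths")
    diffs = [abs(x - y) for x, y in zip(a, b)]
    n_diff = sum(1 for d in diffs if d != 0.0)
    return n_diff, max(diffs, default=0.0)


nolines = [1.0e-21, -2.0e-21, 3.0e-21]
clean = [1.0e-21, -2.0e-21, 3.0e-21]
print(compare_channels(nolines, clean))  # (0, 0.0)
```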

The earliest day these anomalous features appeared on either the L1 or H1 summary pages is June 5, which is the same day as a summary page update that included changes to the strain channels.

Lockloss after 46h25m in NLN (GPS 1372737707).

No obvious cause; DCPD saturation tag. DARM was the first loop to change (attached plot).
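Deciding which loop "changed first" before a lockloss can be made a bit more objective. For each channel, find the first sample that leaves a quiet baseline window by more than k standard deviations, then pick the channel with the earliest onset. This is a hypothetical sketch, not the lockloss tool's method. The 5σ threshold and the baseline length are arbitrary, and all channels are assumed to share the same sample rate and start time.

```python
import statistics


def first_deviation_index(samples, n_baseline, k=5.0):
    """Index of the first sample after the baseline window that deviates
    from the baseline mean by more than k standard deviations, or None."""
    base = samples[:n_baseline]
    mean = statistics.fmean(base)
    std = statistics.pstdev(base)
    for i in range(n_baseline, len(samples)):
        if abs(samples[i] - mean) > k * std:
            return i
    return None


def first_loop_to_change(channels, n_baseline, k=5.0):
    """Name of the channel with the earliest deviation, or None."""
    onsets = {}
    for name, data in channels.items():
        idx = first_deviation_index(data, n_baseline, k)
        if idx is not None:
            onsets[name] = idx
    if not onsets:
        return None
    return min(onsets, key=onsets.get)


darm = [0.1, -0.1] * 50 + [0.1] * 10 + [5.0] * 20
mich = [0.1, -0.1] * 50 + [0.1] * 20 + [5.0] * 10
print(first_loop_to_change({"MICH": mich, "DARM": darm}, n_baseline=100))  # DARM
```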

NOISE_CLEAN reloaded as requested in 71124.

Back to NLN and Observing at 06:24UTC.

This lockloss has rung up the violins again (71063). We spent 1h25m in OMC_WHITENING; ITMY was the slowest to damp.

The last lock we had with low violins was the 42 hr lock after Tuesday maintenance, 06/27 21 UTC to 06/29 15 UTC. Search for things that changed during that time:

All the modes (both 500 Hz and kHz) have been damping down nicely in the last 6 hrs or so (EY20 is still higher than expected and we don't have settings for it; we have tried finding some a few times, but it needs more effort).

Daniel and Sheila note that the H1:FEC-8_ADC_OVERFLOW_0_{12,13} channels date from before the ADC was updated, so the real OMC channels are not overflowing. These are just a relic, but they can be used to see the OMC DCPDs getting closer to the saturation level; the same could be seen using H1:OMC-DCPD_{A,B}_WINDOW_{MIN,MAX}.
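As a rough illustration of using the WINDOW_MIN/MAX channels this way, the headroom is the largest excursion in the window divided by the ADC full scale. Both the full-scale value and the 0.8 warning margin used here are assumptions. The real full scale depends on the ADC model and is not stated in this alog, so 32768 counts (a 16-bit range) is only an example.

```python
def fraction_of_full_scale(window_min, window_max, full_scale_counts):
    """Largest excursion within a min/max window as a fraction of ADC range.

    window_min, window_max : min and max values (counts) over the window
    full_scale_counts      : ADC full-scale value in counts (an assumption;
                             depends on the ADC model)
    """
    peak = max(abs(window_min), abs(window_max))
    return peak / full_scale_counts


def near_saturation(window_min, window_max, full_scale_counts, margin=0.8):
    """True when the window peak is at or above `margin` of full scale."""
    return fraction_of_full_scale(window_min, window_max,
                                  full_scale_counts) >= margin


print(fraction_of_full_scale(-16384, 8192, 32768))  # 0.5
```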

We checked that OMC gain settings were not changed on the 29th June.