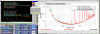

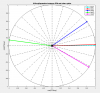

N. Aritomi, J. Kissel, C. Compton, T. Shaffer

As we were poking around for ideas as to why violin modes are now constantly getting rung up, we found that the lock-loss triggers were never installed in any of the parametric instability (PI) damping control models. TJ is doing some trending in parallel while I write, and I hear him convincing himself from the trends that this isn't the source of the violin mode ring-ups; I expect he'll write a comment to share his reasoning.

For those who haven't heard of these "lock-loss triggers": they're fast front-end triggers, running at 16 kHz, that shut off all global control to the suspensions so that, upon lock loss, no garbage ISC control signals (including violin mode damping) are sent to the test masses. Such signals have historically been a source of violin mode ring-up.

Regardless of whether TJ's convinced that this isn't our problem *today*, I still think this is an oversight in the design that should be rectified. I've opened a work permit, WP:11322, for this to be done on one of the upcoming maintenance days.

Shown are the recommended places where the lock-loss tool should interrupt the signals in
- h1susetmypi.mdl (h1susetmxpi.mdl should be modified in the same way), and
- h1susitmpi.mdl
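For readers who want the trigger idea in concrete terms, here is a minimal Python sketch of a per-sample gate. It is a toy only: the real triggers live in the front-end models, and the class name, the `threshold` parameter, the lock-indicator input, and the latching behaviour are all assumptions made for illustration, not a description of the installed part.

```python
from dataclasses import dataclass


@dataclass
class LockLossTrigger:
    """Toy per-sample lock-loss gate (hypothetical; not the real front-end part)."""

    threshold: float       # assumed lock-indicator level below which lock counts as lost
    tripped: bool = False  # assumed latch: once tripped, stay tripped until reset

    def step(self, lock_indicator: float, control_signal: float) -> float:
        """Process one sample (one 16 kHz cycle in the real system)."""
        if lock_indicator < self.threshold:
            self.tripped = True
        # While tripped, nothing is passed on toward the suspension.
        return 0.0 if self.tripped else control_signal

    def reset(self) -> None:
        """Re-arm the gate, e.g. once lock has been reacquired."""
        self.tripped = False


trigger = LockLossTrigger(threshold=0.5)
lock_indicator = [1.0, 1.0, 0.2, 0.9, 1.0]      # made-up values; lock drops at the third sample
damping_signal = [3.0, -2.0, 50.0, 40.0, -35.0]  # made-up values; "garbage" after lock loss
gated = [trigger.step(li, d) for li, d in zip(lock_indicator, damping_signal)]
print(gated)  # [3.0, -2.0, 0.0, 0.0, 0.0]
```

In this sketch the gate stays closed even after the indicator recovers, so a noisy indicator cannot let a burst of bad signal through; whether the real tool latches this way is not stated above.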

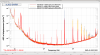

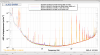

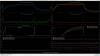

I looked at the PI output monitor channels (H1:SUS-PI_PROC_COMPUTE_MODE24_DAMP_OUTPUT) and their IOP DAC channel (H1:FEC-97_DAC_OUTPUT_4_6), since these signals get sent straight to the DAC. The main times I looked at were the last lock loss we had before the violins were rung up on June 29th, and the relocking up to when we noticed the high violins. I found no difference in the output of these signals during this lock loss compared to previous lock losses, or during the subsequent acquisition.
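A comparison like this can also be made roughly quantitative. The sketch below is one hypothetical way to do it, not the procedure used for the trends above: it takes the RMS and peak of a channel in a window starting at each lock loss and reports the fractional difference. The helper names, the window length, and the synthetic data are all assumptions; fetching the real channel data is left out.

```python
import math


def window_stats(samples, sample_rate, t_event, duration):
    """RMS and peak of `samples` in [t_event, t_event + duration) seconds.

    `samples` is assumed to start at t = 0 s and be uniformly sampled.
    """
    start = int(round(t_event * sample_rate))
    stop = start + int(round(duration * sample_rate))
    window = samples[start:stop]
    if not window:
        raise ValueError("window is empty; check t_event and duration")
    rms = math.sqrt(sum(x * x for x in window) / len(window))
    peak = max(abs(x) for x in window)
    return {"rms": rms, "peak": peak}


def relative_difference(a, b):
    """Symmetric fractional difference between two non-negative statistics."""
    denom = max(abs(a), abs(b))
    return 0.0 if denom == 0 else abs(a - b) / denom


# Synthetic stand-ins for the same channel around two different lock losses.
rate = 16  # Hz; illustrative only, far below the real data rates
previous = [1.00 * math.sin(2 * math.pi * k / rate) for k in range(4 * rate)]
suspect = [1.05 * math.sin(2 * math.pi * k / rate) for k in range(4 * rate)]

ref = window_stats(previous, rate, t_event=1.0, duration=2.0)
new = window_stats(suspect, rate, t_event=1.0, duration=2.0)
for key in ("rms", "peak"):
    print(key, relative_difference(ref[key], new[key]))
```

A small fractional difference in both statistics would be consistent with "no difference" by eye; what counts as small is a judgment call this sketch does not settle.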