The TRANSITION_BACK_TO_ETMX state of the SUS_CHARGE guardian has been causing the majority of our locklosses prior to Tuesday Maintenance, e.g. 69437, 70861.

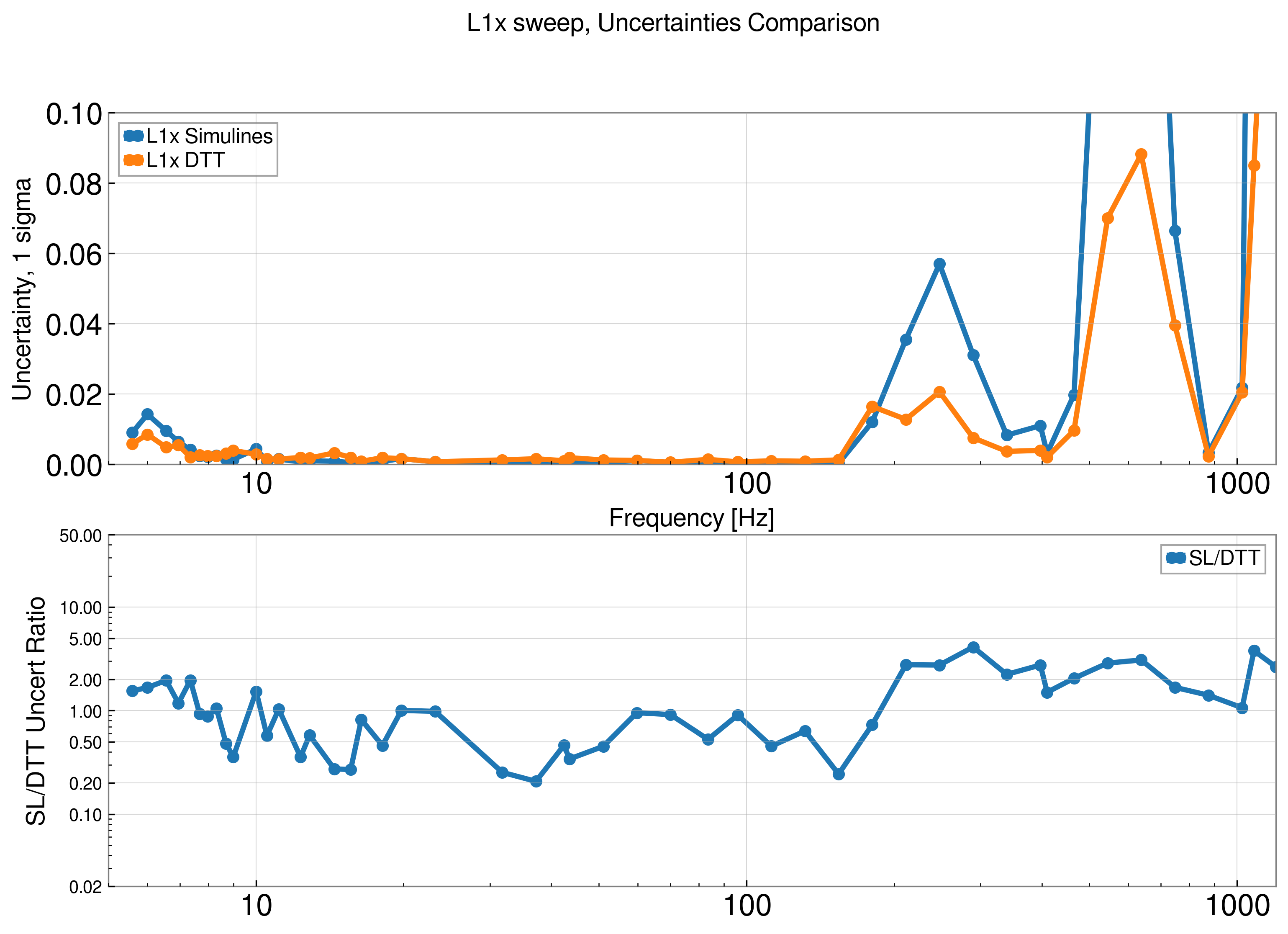

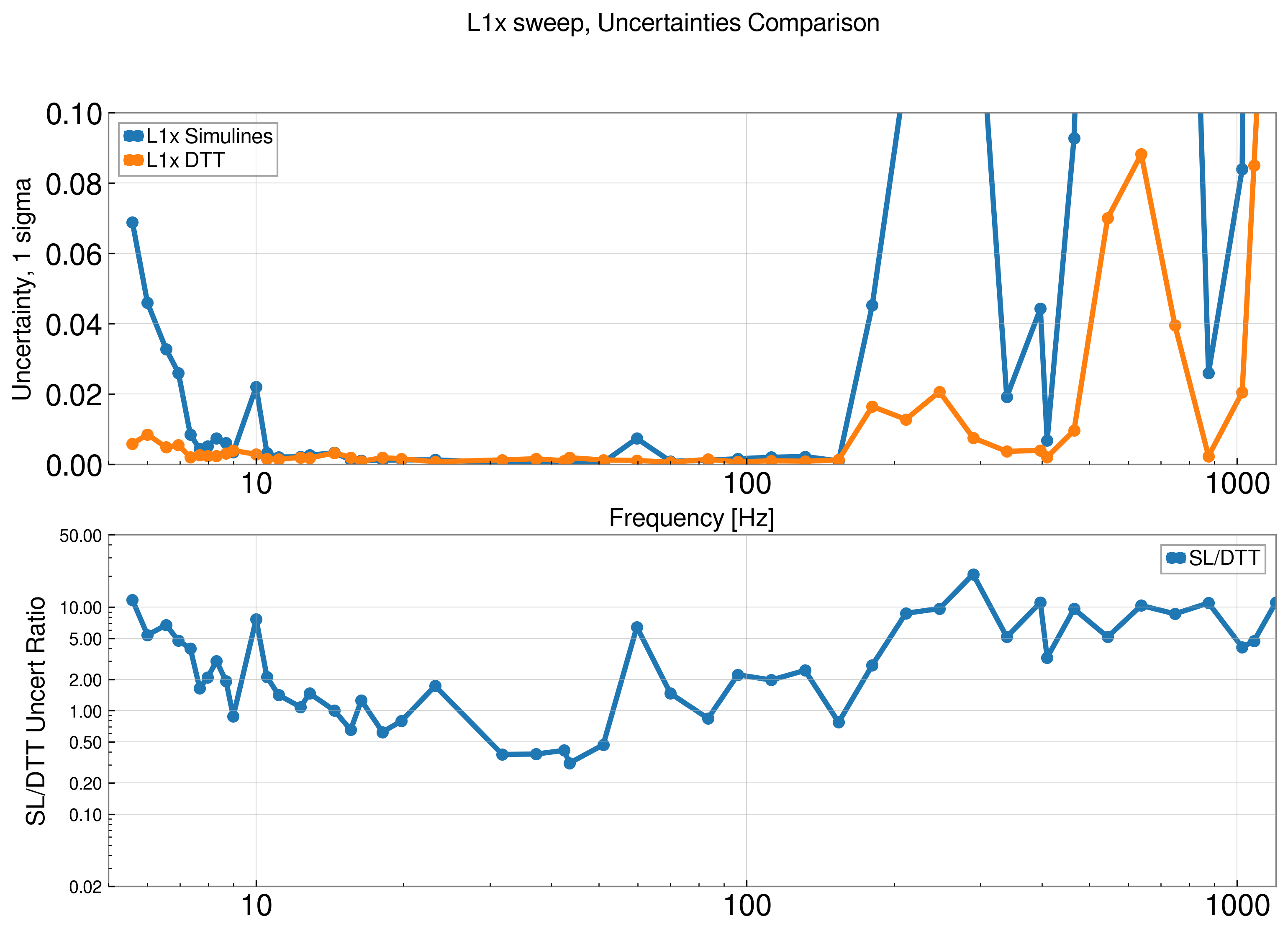

I looked into this again and found that the ITMX LOCK_L gain is being turned off before the ITMX to ETMX transition has completed. The ramp time of the swap is 20 seconds, but the code only waits 15 seconds before moving on; see the attached plot and code.

We are using ezca.get_LIGOFilter, and the wait=True parameter isn't waiting for the full ramp_time specified; I've created Issue 15 for this. I've changed wait to False and added a 20s sleep timer. The SUS_CHARGE guardian will need to be reloaded when we are out of observing.
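A minimal sketch of that workaround pattern, not the actual SUS_CHARGE code: start the ramp without blocking, then wait out the full ramp time explicitly. `StubFilter`, its `ramp_gain` signature, and `ramp_and_hold` are hypothetical stand-ins for illustration and are not taken from the ezca API; only the 20 second ramp time comes from the entry above.

```python
import time

RAMP_TIME = 20  # seconds; ramp time of the ITMX -> ETMX swap quoted above


class StubFilter:
    """Hypothetical stand-in for a filter-bank object; not the ezca API."""

    def __init__(self):
        self.calls = []

    def ramp_gain(self, value, ramp_time, wait=True):
        # Only records the request; a real filter would start ramping here.
        self.calls.append((value, ramp_time, wait))


def ramp_and_hold(filt, value, ramp_time=RAMP_TIME, sleep=time.sleep):
    """Start a gain ramp without blocking, then wait the full ramp time."""
    filt.ramp_gain(value, ramp_time=ramp_time, wait=False)
    # Explicit timer instead of relying on wait=True to cover the ramp.
    sleep(ramp_time)
```

The `sleep` argument is injectable only so the sketch can be exercised without actually waiting 20 seconds.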

Once I remembered that SUS_CHARGE is not one of the guardian nodes monitored for the observation intent bit (although its subordinate nodes are), I reloaded it this morning at 14:02 UTC so Camilla's changes are pushed in.

This fixed the SUS_CHARGE code and we successfully stayed locked throughout both ESD transitions on Tuesday.

Total time taken is 18 minutes, which is longer than the 15 minute target. I've reduced the slowy_ramp_on_bias() ramptime back from 60 to 20 seconds, as I've reverted some of the 68987 changes I was blaming for the locklosses. ESD_EXC_{QUAD} will need to be reloaded for each quad for this to take effect.

Currently the SUS_CHARGE code is taking 16m45s. We could look at further decreasing some tramps.

TJ noticed this morning that the L2L tramps for ITMX and ETMX weren't reverted by the code. I've added lines to SUS_CHARGE to save and revert these after the ESD transitions, and also to save and reset the ITMX_L2L gain so it's not hard-coded.
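The save-and-revert idea can be sketched as below. This is an illustration under assumptions, not the lines added to SUS_CHARGE: `preserved` is a hypothetical helper, and `channels` is assumed to be any dict-like object that reads and writes settings by name (a plain dict works for trying it out).

```python
from contextlib import contextmanager


@contextmanager
def preserved(channels, names):
    """Save the current values of `names`, then restore them on exit."""
    saved = {name: channels[name] for name in names}
    try:
        yield saved
    finally:
        # Restore even if the body raised, so no setting is left changed.
        for name, value in saved.items():
            channels[name] = value
```

Reading the values at entry rather than writing fixed numbers back is what avoids hard-coding the gain.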