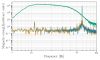

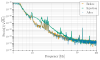

Attached is a cross correlation plot for 20 minutes of no-sqz time taken June 21st (70668), after first reducing the input power to 60W (366kW circulating power). This was before the LSC feedforward was retuned, which improved the sensitivity below 50Hz. The first plot is in loop-corrected mA; you can compare the correlated noise estimated from the cross correlation with the estimate from subtracting the shot noise. At high frequencies the shot noise subtraction doesn't work well; I believe this is due to imperfections in how the DCPD sum mA channel is calibrated into actual mA. At low frequencies the cross correlation is overestimating the DCPD sum PSD, and this isn't due to an error in the OLG measurement. See alog 70453 for some information about checks of the cross correlation and this low frequency problem. At mid frequencies the two methods seem to agree. The second attachment is the same data calibrated into displacement, with a model of quantum radiation pressure noise included and subtracted from the two estimates. For this time the DARM OLG model from pyDARM was underestimating the OLG by 2% at 24Hz, so I've scaled it up by 2%.
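For readers who want to see how the two correlated-noise estimates relate, here is a minimal sketch on simulated data. It is not the script used for the attached plots. It assumes the two DCPDs split the correlated signal evenly, that the shot noise is white and uncorrelated between them (no squeezing), and it leaves out the loop correction entirely. All names and noise levels are made up for illustration.

```python
import numpy as np
from scipy import signal

fs = 4096.0                      # sample rate in Hz (illustrative)
n = int(fs * 64)                 # 64 s of simulated data
rng = np.random.default_rng(0)

sigma_common = 1.0               # correlated noise, as it appears in the DCPD sum
sigma_shot = 1.0                 # shot noise on each individual DCPD

common = rng.normal(scale=sigma_common, size=n)
dcpd_a = 0.5 * common + rng.normal(scale=sigma_shot, size=n)
dcpd_b = 0.5 * common + rng.normal(scale=sigma_shot, size=n)
dcpd_sum = dcpd_a + dcpd_b

nperseg = 4096
freqs, psd_sum = signal.welch(dcpd_sum, fs=fs, nperseg=nperseg)
_, csd_ab = signal.csd(dcpd_a, dcpd_b, fs=fs, nperseg=nperseg)

# Method 1: cross correlation. Each PD sees half of the correlated signal,
# so the CSD carries 1/4 of its power; multiply by 4 to refer it to the sum.
corr_from_xcorr = 4.0 * np.real(csd_ab)

# Method 2: shot noise subtraction. The one-sided PSD of white noise with
# variance s^2 is 2*s^2/fs, and the sum contains the shot noise of both PDs.
# Here the shot noise level is known exactly; on real data it has to be
# inferred, which is where calibration imperfections enter.
psd_shot_sum = 2.0 * (2.0 * sigma_shot**2) / fs
corr_from_subtraction = psd_sum - psd_shot_sum

# Level both estimates should scatter around
true_level = 2.0 * sigma_common**2 / fs
```

In this idealized case both estimates average to the same level. Any miscalibration of the sum channel biases only the subtraction, since the cross correlation never needs the absolute shot noise level.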

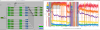

The next set of attachments are the same two plots made for 2 hours of no-sqz time from June 4th, when the HAM7 ISI had a problem (70117), in DCPD mA and in displacement. We don't have a DARM OLG measurement from this time, so we are just using the pyDARM model without any scaling.
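As a rough illustration of what the loop correction and the optional OLG scaling do, here is a minimal sketch. It assumes the convention that the loop suppresses the in-loop signal by 1/(1+G). The function name `loop_correct` and the placeholder OLG below are hypothetical; none of this comes from pyDARM.

```python
import numpy as np

def loop_correct(asd_in_loop, olg, olg_scale=1.0):
    """Undo loop suppression of an in-loop ASD.

    Assumes suppression by 1/(1 + G), so the correction is |1 + scale*G|.
    olg_scale=1.0 uses the model as is; olg_scale=1.02 scales it up by 2%.
    """
    olg = olg_scale * np.asarray(olg, dtype=complex)
    return np.asarray(asd_in_loop, dtype=float) * np.abs(1.0 + olg)

# Placeholder numbers for illustration only (not a real DARM OLG)
freqs = np.array([10.0, 24.0, 100.0, 1000.0])
olg_model = (60.0 / freqs) ** 2 * np.exp(-1j * np.pi / 3)
asd_in_loop = np.ones_like(freqs)

asd_unscaled = loop_correct(asd_in_loop, olg_model)        # model without scaling
asd_scaled = loop_correct(asd_in_loop, olg_model, 1.02)    # model scaled up by 2%
```

The scaling matters mostly where |G| is comparable to or larger than 1. Well above the unity gain frequency the correction approaches 1 and a 2% change in the OLG has little effect.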

Evan H pointed out that the calibration into displacement for the plots above was incorrect. This is because I used pyDARM to get the calibration from DARM err to displacement, but forgot to update my hardcoded scalar that translates mA to DARM err. I also changed the ini file that is pointed to: for 75W I'm using '/ligo/home/jeffrey.kissel/2023-05-10/20230506T182203Z_pydarm_H1.ini', and for times after June 22nd (60W) I'm using '/ligo/groups/cal/H1/reports/20230621T211522Z/pydarm_H1_site.ini'. When I use pyDARM to calibrate mA into meters, I am not applying corrections for the kappas, which GDS does. In all the attached calibrated plots, GDS_STRAIN shows higher noise from 60-90 Hz, which might be consistent with the fairly large GDS calibration error that has been mostly consistent throughout these configuration changes (see 70907 and 70705).

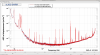

We also took another set of cross correlation data for 1 hour with the hot OM2 on Wednesday (70930); those plots are attached here as the last two attachments. It is interesting to open all three of these plots in a browser and look back and forth between them. The most obvious changes are the jitter peaks and the improvement at low frequency from the power reduction. But there also seems to be a broad change in noise from 60-100Hz, which should probably be confirmed by looking at other times and double checking the calibrations.