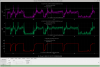

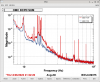

I think the recent issues with the squeezer pump ISS railing are likely resolved after today's on-table realignments. The green pump AOM ("GAOM1"; SQZT0 layout here) was significantly clipping the beam, which both reduced the total power after the AOM and degraded the fiber coupling efficiency because of the clipped, poor mode shape. See the GAOM1 sweeps from this morning (before aligning, left) and now (after, right). Along the pump path, we now have better GAOM1 throughput (~90%), comparable diffraction efficiency (~60%), and improved fiber coupling. For the 60uW OPO trans we've been using, we previously launched 22mW with no buffer (hence the ISS railed); now we only have to launch 15mW for the same OPO transmitted power, with plenty of buffer.

GAOM1 typically has ~90% throughput, e.g. previously this was 14.4mW out for 16mW incident. Today I first measured 25mW out for 44mW incident (~57%): this means >30% of the power from the SHG was clipping on the AOM aperture. After re-aligning GAOM1 using the mount screws, we're back up to 40mW out for 45mW incident, so almost 90% throughput again. I think this could be better, but it's good enough for now.
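As a quick sanity check on the throughput numbers above, here is a minimal Python sketch that recomputes them from the quoted powers. The function name and dictionary labels are illustrative only, not from any site code.

```python
def throughput(p_out_mw: float, p_in_mw: float) -> float:
    """Fraction of the incident power that makes it through the AOM."""
    if p_in_mw <= 0:
        raise ValueError("incident power must be positive")
    return p_out_mw / p_in_mw


# (power out, power incident) in mW, as quoted in this entry
gaom1_measurements = {
    "typical (earlier)": (14.4, 16.0),
    "today, before realignment": (25.0, 44.0),
    "today, after realignment": (40.0, 45.0),
}

for label, (p_out, p_in) in gaom1_measurements.items():
    t = throughput(p_out, p_in)
    print(f"{label}: {t:.1%} throughput, {1 - t:.1%} lost")

# Throughput before realignment relative to the typical ~90% value
relative = throughput(25.0, 44.0) / throughput(14.4, 16.0)
print(f"before/typical throughput ratio: {relative:.2f}")
```

The last ratio compares this morning's throughput against the usual ~90% baseline, which is one way to read the ">30% clipping" estimate.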

Relieving the clipping also significantly improved the fiber coupling efficiency, presumably because it improves the mode shape: we use the 0th-order AOM beam for the pump light, not the nicer diffracted order, whose mode gets cleaned up in the diffraction. So AOM clipping shows up on the beam we are trying to fiber couple.

-- Before, for 20mW into the pump fiber, we had 1.8mW on OPO_REFL_DC_POWER on the other end, with GAOM1 driven at 2-3V (almost no room), and all power on that path going into the fiber (SHG_REJECTED = 0mW).

-- After, for 20mW into the pump fiber, we have 2.9mW on OPO_REFL_DC_POWER (60% higher power), with GAOM1 driven at 4.5V (mid-range), and a healthy buffer of rejected power on the fiber path (SHG_REJECTED = 8mW).
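The before/after comparison can be checked with a short Python sketch. It treats OPO_REFL_DC_POWER per mW launched into the fiber as a rough proxy for the fiber coupling (an assumption for illustration, not a calibrated coupling efficiency); the dictionary keys and helper name are hypothetical.

```python
# Values quoted in this entry, in mW
before = {"fiber_in_mw": 20.0, "opo_refl_mw": 1.8, "shg_rejected_mw": 0.0}
after = {"fiber_in_mw": 20.0, "opo_refl_mw": 2.9, "shg_rejected_mw": 8.0}


def refl_per_launched(m: dict) -> float:
    """OPO_REFL power per unit power into the fiber (proxy only, see above)."""
    return m["opo_refl_mw"] / m["fiber_in_mw"]


improvement = refl_per_launched(after) / refl_per_launched(before)

print(f"before: {refl_per_launched(before):.3f} mW on OPO_REFL per mW launched")
print(f"after:  {refl_per_launched(after):.3f} mW on OPO_REFL per mW launched")
print(f"improvement: x{improvement:.2f} ({improvement - 1:.0%} higher)")
print(f"rejected-power buffer gained: "
      f"{after['shg_rejected_mw'] - before['shg_rejected_mw']:.0f} mW")
```

Because the launched power is the same 20mW in both cases, the ratio reduces to 2.9/1.8, in line with the ~60% increase quoted above.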