Naoki, Vicky

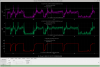

To try damping the 80 kHz PI that has recently been causing locklosses (LHO:70434), today we installed a new damping path on PI28, with the drive sent to ETMX. To phase-lock the ESD damping drive to the PI mode, we bandpassed the DCPD signal around 80.296 kHz (foton here). We chose this frequency from the DCPD full-spectrum signal around 80.3 kHz, where today we saw the 3 peaks in red here between 80.295 and 80.301 kHz in full lock. Our problem seems to be the bigger peak around *296, where the pink cursors are centered. We are trying to damp on ETMX first, since it seems like the PI29 80 kHz damping on ETMX could impact this mode.
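For reference, here is a minimal sketch of what a narrow bandpass around 80.296 kHz does to the neighboring peaks. It is not the installed foton filter: the 524288 Hz sample rate, the nominal 2 Hz bandwidth, and the single-biquad (RBJ cookbook) design are all assumptions for illustration, and the function names are hypothetical.

```python
import cmath
import math

FS_HZ = 524288.0   # ASSUMED sample rate of the full-spectrum DCPD channel
F0_HZ = 80296.0    # band center, from the 80.296 kHz peak in this entry
BW_HZ = 2.0        # HYPOTHETICAL nominal bandwidth, not the foton design value


def bandpass_biquad(f0, bw, fs):
    """RBJ-cookbook band-pass biquad with unity gain at f0.

    Returns normalized (b, a) coefficient tuples with a[0] == 1.
    """
    w0 = 2.0 * math.pi * f0 / fs
    q = f0 / bw
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = (alpha / a0, 0.0, -alpha / a0)
    a = (1.0, -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0)
    return b, a


def gain_at(f, b, a, fs):
    """Magnitude of the filter's frequency response at frequency f (Hz)."""
    zinv = cmath.exp(-2j * math.pi * f / fs)
    num = b[0] + b[1] * zinv + b[2] * zinv ** 2
    den = a[0] + a[1] * zinv + a[2] * zinv ** 2
    return abs(num / den)


b, a = bandpass_biquad(F0_HZ, BW_HZ, FS_HZ)
for f in (80295.0, 80296.0, 80302.0):
    print(f"{f:.0f} Hz: gain {gain_at(f, b, a, FS_HZ):.3f}")
```

With these assumed numbers the filter passes 80.296 kHz at unity gain and attenuates the 80.302 kHz line used by PI29, which is the separation the PLL needs in order to lock to the right mode. A real design would likely trade bandwidth against settling time and phase delay, which this sketch does not address.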

The new path for PI28 has been updated and damping is guardianized, but this PI28 damping is untested, and there are no verbal alarms for PI28 yet.

Summary with the current status of PI damping:

| PI frequency | PI damping mode number | Test Mass | PI Guardian status | _DAMP_GAIN |
| --- | --- | --- | --- | --- |
| 10.428 kHz | 24 | ETMY | automated | 1000 |
| 10.431 kHz | 31 | ETMY | automated | 1000 |
| 80.302 kHz (LHO:68760) | 29 | ETMX | automated, likely working (LHO:70243) | 50000 (LHO:69800) |
| 80.296 kHz (LHO:70443) | 28 | ETMX | guardianized, testing now | 50000 |

SDFs were reconciled afterwards (see screenshots); mainly, we un-monitored guardian-controlled things like the damping phase and PLL integrator.