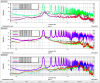

Last week Elenna and Derek pointed out that there was some evidence of PRCL ringing up at around 11 Hz just before locklosses, due to the loop not having the appropriate unity gain frequency (alog 70044).

Since that report was made, I looked into the following locklosses, all of which occurred from the guardian state 600 NLN.

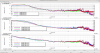

Some of the PRCL plots showed a longer or shorter oscillation before the lockloss; examples are shown in the attached images. Other PRCL plots did not show any evident oscillation.
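For reference, here is a minimal sketch of one way to put a number on the oscillation frequency in the seconds before a lockloss. It is not the method used to read the frequencies listed in this entry (those were read off the plots). The function name `dominant_frequency`, the 5 to 20 Hz search band, the 256 Hz sample rate, and the synthetic test signal are all assumptions made for illustration; real PRCL data would have to be fetched separately.

```python
import numpy as np

def dominant_frequency(signal, sample_rate, f_min=5.0, f_max=20.0):
    """Return the frequency (Hz) of the largest spectral peak in [f_min, f_max].

    Hypothetical helper: the band limits are illustrative defaults.
    """
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()            # remove the DC offset
    window = np.hanning(signal.size)           # reduce spectral leakage
    spectrum = np.abs(np.fft.rfft(signal * window))
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / sample_rate)
    band = (freqs >= f_min) & (freqs <= f_max)
    return float(freqs[band][np.argmax(spectrum[band])])

# Synthetic stand-in for a pre-lockloss time series (assumption, not real data):
# an 11 Hz oscillation growing over 4 s, plus a small amount of noise.
sample_rate = 256.0
t = np.arange(0.0, 4.0, 1.0 / sample_rate)
rng = np.random.default_rng(0)
synthetic = np.exp(t / 2.0) * np.sin(2 * np.pi * 11.0 * t)
synthetic += 0.1 * rng.standard_normal(t.size)

peak_hz = dominant_frequency(synthetic, sample_rate)
print(f"Dominant frequency in band: {peak_hz:.2f} Hz")
```

With a 4 s stretch the frequency resolution is 0.25 Hz, so a short oscillation lasting only a fraction of a second would need a shorter window and would give a correspondingly coarser estimate.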

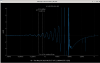

Lockloss, 600 NLN, at 05/30/23 00:55:57 UTC, PRCL issue shown around 12 Hz (shown in first image, this was one of the longer oscillations)

Lockloss, 600 NLN, at 05/30/23 08:30:27 UTC, PRCL issue shown around 12.6 Hz (short oscillation)

Lockloss, 600 NLN, at 05/30/23 12:30:27 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 05/30/23 14:59:29 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 05/31/23 01:09:14 UTC, PRCL around 10 Hz (short oscillation)

Lockloss, 600 NLN, at 05/31/23 08:10:26 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 05/31/23 12:13:26 UTC, PRCL issue shown around 11 Hz (short oscillation)

Lockloss, 600 NLN, at 05/31/23 18:03:16 UTC, PRCL issue shown around 11 Hz (short oscillation) (last lock at 76 W input, Elenna lowered it by 1 W (alog 70042) after this)

Lockloss, 600 NLN, at 06/01/23 01:19:29 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 06/01/23 05:20:18 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 06/01/23 08:41:49 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 06/01/23 12:44:30 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 06/02/23 06:57:23 UTC, PRCL issue not shown

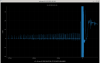

Lockloss, 600 NLN, at 06/02/23 08:57:58 UTC, not sure what to make of the glitches shown, but they could be interesting to look into (shown in second image)

Lockloss, 600 NLN, at 06/02/23 20:13:27 UTC, PRCL issue not shown (I believe this was due to parametric instability in the mirrors)

Lockloss, 600 NLN, at 06/02/23 04:23:53 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 06/03/23 08:32:59 UTC, PRCL issue shown around 11 Hz (short oscillation)

Lockloss, 600 NLN, at 06/03/23 12:06:23 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 06/03/23 13:56:36 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 06/04/23 20:54:10 UTC, PRCL issue not shown

Lockloss, 600 NLN, at 06/05/23 01:44:44 UTC, PRCL issue shown around 11 Hz (short oscillation)

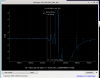

Lockloss, 600 NLN, at 06/05/23 11:16:09 UTC, PRCL issue shown around 11 Hz (shown in third image, this is an example of what the short oscillation looks like)

Lockloss, 600 NLN, at 06/05/23 18:17:00 UTC, PRCL issue shown around 13 Hz (longer oscillation)
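As a quick cross-check, the list above can be tallied with a short script. This is only a sketch: the entries are transcribed by hand from the list in the order written, the variable names are made up for illustration, and the 06/02/23 08:57:58 glitch case is marked "unclear" rather than counted either way.

```python
from collections import Counter

# (UTC time as written above, PRCL oscillation frequency in Hz,
#  None if no PRCL issue was seen, "unclear" for the glitch case)
locklosses = [
    ("05/30/23 00:55:57", 12.0), ("05/30/23 08:30:27", 12.6),
    ("05/30/23 12:30:27", None), ("05/30/23 14:59:29", None),
    ("05/31/23 01:09:14", 10.0), ("05/31/23 08:10:26", None),
    ("05/31/23 12:13:26", 11.0), ("05/31/23 18:03:16", 11.0),
    ("06/01/23 01:19:29", None), ("06/01/23 05:20:18", None),
    ("06/01/23 08:41:49", None), ("06/01/23 12:44:30", None),
    ("06/02/23 06:57:23", None), ("06/02/23 08:57:58", "unclear"),
    ("06/02/23 20:13:27", None), ("06/02/23 04:23:53", None),
    ("06/03/23 08:32:59", 11.0), ("06/03/23 12:06:23", None),
    ("06/03/23 13:56:36", None), ("06/04/23 20:54:10", None),
    ("06/05/23 01:44:44", 11.0), ("06/05/23 11:16:09", 11.0),
    ("06/05/23 18:17:00", 13.0),
]

def status(freq):
    """Classify one entry as 'shown', 'not shown', or 'unclear'."""
    if freq is None:
        return "not shown"
    if freq == "unclear":
        return "unclear"
    return "shown"

counts = Counter(status(freq) for _, freq in locklosses)

# The 05/31/23 18:03:16 lockloss ended the last lock at 76 W input,
# so every entry listed after it is from after the power change.
last_76w = [when for when, _ in locklosses].index("05/31/23 18:03:16")
shown_after_change = sum(
    status(freq) == "shown" for _, freq in locklosses[last_76w + 1:]
)

print(dict(counts))
print("PRCL issue shown after power change:", shown_after_change)
```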

Since we still see the PRCL issue after the input power change, we will look into what the arm circulating power was for the last 6 locks to see if there is any pattern.