TITLE: 05/24 Owl Shift: 07:00-15:00 UTC (00:00-08:00 PDT), all times posted in UTC

STATE of H1: Lock Acquisition

SHIFT SUMMARY:

Lock#1

Couldn't lock PRMI, lockloss

Lock#2

Went right into an initial alignment. OM1 & OM2 were saturated during PRC in initial alignment.

DRMI's lock didn't seem great on the buildups but the spot looked ok

OMC locked first try on its own

NLN @ 08:44. There were 30 ASC SDF diffs, all for the camera servos. I waited for ADS to converge so the camera servos could turn on (~16 mins), which cleared these diffs.

Observing mode @ 09:02 while we thermalize

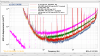

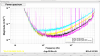

Out of observing at 11:05 UTC for a new FF filter test/measurement and then a calibration suite. New FF filter applied at 11:07 UTC.

I used Corey's template (/ligo/home/corey.gray/Templates/dtt/DARM_05232023.xml), for which I had to enable "read data from tape" to get it to run. Measurement started at 11:10 UTC and finished at 11:12 UTC. My DTT session then immediately glitched and crashed before I could save it. I restarted the measurement at 11:15 UTC and it finished at 11:16 UTC. It wouldn't let me save it (error: Unable to open output file). I added it as ref 27 to the xml file in Corey's directory mentioned above, but this may not have been saved because of that error. I grabbed a screenshot of it.

I switched back to the old filters at 11:21 UTC to take the calibration suite. I wasn't sure whether we wanted the new filters on for this, apologies if we did.

Lockloss @ 11:25, possibly from PI29, although on NUC25's scope guardian appeared to be damping it successfully. It was tapering down when the DCPDs saturated and we lost lock. The lockloss also coincided with a ground motion spike from the 5.5 earthquake from NZ. I then stepped down the EX ring heaters to 1.2 using the console commands Sheila provided in her alog.
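For anyone tallying this lock stretch, here is a minimal Python sketch that computes the intervals between the UTC timestamps quoted above. The event labels and the helper names (`minutes_between`, `durations`) are my own for illustration and are not part of any site tooling; it assumes all times fall on the same UTC day.

```python
from datetime import datetime

# UTC times copied from this entry (HH:MM); labels are illustrative only.
EVENTS = [
    ("NLN", "08:44"),
    ("Observing", "09:02"),
    ("Out of observing", "11:05"),
    ("Lockloss", "11:25"),
]


def minutes_between(start: str, end: str) -> int:
    """Whole minutes from start to end, both HH:MM on the same UTC day."""
    fmt = "%H:%M"
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    return int(delta.total_seconds() // 60)


def durations(events):
    """Pair each event with the next one and return (label, minutes) tuples."""
    return [
        (f"{name_a} -> {name_b}", minutes_between(time_a, time_b))
        for (name_a, time_a), (name_b, time_b) in zip(events, events[1:])
    ]


if __name__ == "__main__":
    for label, mins in durations(EVENTS):
        print(f"{label}: {mins} min")
    # Total time from reaching NLN to the lockloss.
    print(f"NLN -> Lockloss: {minutes_between('08:44', '11:25')} min")
```

With the times in this entry, that gives 18 minutes from NLN to Observing, 123 minutes in Observing, and 161 minutes in total between NLN and the lockloss.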

Lock#3

Y-arm power was drastically lower after the lockloss and looked clipped on the camera. Increase flashes ran twice and wasn't able to get it above 50%. I stepped in and still wasn't able to get it even to 80%. I gave guardian another shot after this; increase flashes ran another 2 times and still could not get it. I tried again to lock it and was unsuccessful. Lockloss at LOCKING_ALS after some more rounds of adjusting and trying to lock.

Lock#4-11

ALS locklosses

Lock#12

Beatnotes aren't great, -19 & -20, so I'm starting another initial alignment. Lots of SRM saturations during SRC align. Trending SRM's OSEMs, there doesn't appear to be any unusual motion in the past 20 hours.

After a suggestion from Betsy and Jenne, I checked PR3 and it seemed to have drifted a bit. I moved PR3 in yaw by 0.8 microradians in the negative direction, which increased the COMM beatnote. I was able to lock ALS after this but got no flashes on PRMI, so I started another initial alignment, but it didn't do the SRC align correctly again. TJ's going back to try and fix this.

Handing off to TJ

LOG:

| Start Time | System | Name | Location | Lazer_Haz | Task | Time End |
|---|---|---|---|---|---|---|
| 14:23 | FAC | Betsy | FCES | N | Closeout checks | 14:38 |
| 14:56 | EE | Ken | Carpenter shop | N | | 15:56 |