Jennie W., Ryan S., Evan H.

We tested the new filters Elenna & Gabriele designed in this alog. Earlier recent efforts to design these are in this alog.

These were previously tested by Ryan C. here.

Executive Summary

The aim was to run the test more than 6 hours into the lock, as the filters were optimised for a thermalised IFO.

The new FF filters make DARM sensitivity worse.

SRCLFF coupling to DARM looks better with the new filters between roughly 12 and 56 Hz.

MICHFF coupling to DARM looks worse below 100 Hz with the new filters.

Tuning the MICHFF gain either up or down made the coupling worse.

Test 1

We took a background measurement at 15:32:23 UTC.

This was with the old filters for MICHFF and SRCLFF1, labelled 4-23-23 in the filter banks.

Ended before 15:33:39 UTC.

SRCLFF1 gain = 1, MICHFF gain = 1

Ref 0 - CAL-DELTAL_EXTERNAL_DQ Power Spectrum

Ref 1 LSC-MICH_OUT_DQ/CAL-DELTAL_EXTERNAL_DQ Coherence

Ref 2 LSC-SRCL_OUT_DQ/CAL-DELTAL_EXTERNAL_DQ Coherence

Test 2

Went out of OBS and changed the ramp time of both banks to 5 s. For each bank we turned the gain to 0, turned off 4-23-23, turned on 5-20-23, then turned the gain back to 1.

We did the MICH filter first, it was turned on by 15:37:50 UTC.

Then the SRCL filter by 15:38:29 UTC.

Both banks nominally have a gain of 1.
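The swap sequence described above can be written out as an ordered list of steps. This is only an illustrative sketch: the action names (`set_ramp_time_s`, `filter_off`, and so on) are hypothetical labels rather than real control-system calls, and it assumes the five steps were applied per bank in the order logged.

```python
def swap_steps(bank, old="4-23-23", new="5-20-23", ramp_s=5, gain=1):
    """Return the logged swap sequence for one feedforward bank.

    Each step is a (bank, action, value) tuple. The action names are
    hypothetical labels, not calls into any real control software.
    """
    return [
        (bank, "set_ramp_time_s", ramp_s),  # ramp time set to 5 s
        (bank, "set_gain", 0),              # ramp the feedforward off
        (bank, "filter_off", old),          # disengage the old filter
        (bank, "filter_on", new),           # engage the new filter
        (bank, "set_gain", gain),           # ramp back to the nominal gain
    ]


# MICH was swapped first, then SRCL.
plan = []
for bank in ("MICHFF", "SRCLFF1"):
    plan.extend(swap_steps(bank))

for bank, action, value in plan:
    print(f"{bank}: {action} -> {value}")
```

Reverting to the old filters would be the same sequence with `old` and `new` exchanged.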

SRCLFF1 gain = 1, MICHFF gain = 1

Measurement start = 15:38:56 UTC

Measurement end = 15:40:00 UTC

Ref 3 CAL-DELTAL_EXTERNAL_DQ Power Spectrum

Ref 4 LSC-MICH_OUT_DQ/CAL-DELTAL_EXTERNAL_DQ Coherence

Ref 5 LSC-SRCL_OUT_DQ/CAL-DELTAL_EXTERNAL_DQ Coherence

Test 3

For the next test I misread the data, decided SRCL was worse with the new filter, and so tuned the gain a bit.

SRCLFF1 gain = 1.1, MICHFF gain = 1

Measurement start = 15:42:59 UTC

Measurement end = 15:44:10 UTC

Ref 6 CAL-DELTAL_EXTERNAL_DQ Power Spectrum

Ref 7 LSC-SRCL_OUT_DQ/CAL-DELTAL_EXTERNAL_DQ Coherence

Afterwards I realised that SRCL was better with the new FF filters, so we put the SRCLFF gain back to 1 at 15:49:39 UTC. We then took some measurements while tuning the MICHFF gain, since the MICH coupling is broadly worse with the new filters.

Test 4

MICHFF gain changed by 15:51:06 UTC

SRCLFF1 gain = 1, MICHFF gain = 0.9

Measurement start = 15:51:58 UTC

Measurement end = 15:53:15 UTC

Ref 8 CAL-DELTAL_EXTERNAL_DQ Power Spectrum

Ref 9 LSC-MICH_OUT_DQ/CAL-DELTAL_EXTERNAL_DQ Coherence

Test 5

SRCLFF1 gain = 1, MICHFF gain = 1.1

Ref 10 CAL-DELTAL_EXTERNAL_DQ Power Spectrum

Ref 11 LSC-MICH_OUT_DQ/CAL-DELTAL_EXTERNAL_DQ Coherence

Template saved in /ligo/home/ryan.short/FF_testing.xml

Old filters back in by 16:00:50 UTC
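For anyone re-fetching the data, the measurement windows logged above can be collected in one place and their lengths checked. This is a minimal sketch using only the times written in this entry. The end time for Test 1 is an upper bound ("ended before"), and Test 5 is omitted because no start or end time was logged for it.

```python
from datetime import datetime

# (start, end) in UTC, copied from the entries above.
WINDOWS = {
    "Test 1": ("15:32:23", "15:33:39"),  # end time is an upper bound
    "Test 2": ("15:38:56", "15:40:00"),
    "Test 3": ("15:42:59", "15:44:10"),
    "Test 4": ("15:51:58", "15:53:15"),
}


def duration_s(start, end):
    """Length of a same-day window in whole seconds."""
    fmt = "%H:%M:%S"
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    return int(delta.total_seconds())


durations = {name: duration_s(*window) for name, window in WINDOWS.items()}

for name, seconds in durations.items():
    start, end = WINDOWS[name]
    print(f"{name}: {start} to {end} UTC ({seconds} s)")
```

Each window is a little over a minute long, so any averaging settings used when re-analysing should fit within that span.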

My version, corresponding to the attached plots, is saved in jennifer.wright/git/Feedfoward/2023-05-29_DARM_FOM_LSC_FF_check.xml.

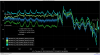

The red measurement is with the old FF filters, blue is with the new filters at a gain of 1, yellow is with a higher gain in SRCLFF1, purple is with a lower gain in MICHFF, and green is with a higher gain in MICHFF.

The first image shows the DARM ASD, SRCL coupling and MICH coupling; the second shows the DARM ASD for all measurements; the third shows the DARM ASD for the old filters vs. the new filters with no gain changes.