S. Dwyer, J. Kissel, E. v. Ries, T. Shaffer, R. Short

Information is rolling in fast, and there are five folks working on diagnosing / solving the issues, but we think we've both identified and temporarily solved a major issue: the HAM-ISIs slowly drive away from their target alignment value on a slllooooowwww time-scale of weeks, resetting to their desired alignment position only after front-end model restarts.

This aLOG picks up where Sheila left off with POP WFS falling off the ISCT1 diodes (LHO:69930), and adds more to the investigation of the HAM ISI issues started with LHO:69924.

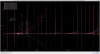

The most incriminating plot is the first attachment, 2023-05-26_HAMISI_RZ_Residual_sinceNov2023.png. This shows the "RZ residual" for all HAM ISIs: a comparison between the capacitive position sensor (CPS) live RZ position (composed from each table's three horizontal CPS) and a digitally fixed "target" value. The units of the y axis are nanoradians, so when you see drifts on the scale of 30000 nrad, that's 30 urad.

Most recently, for example, due to opposing signs of drift, HAM2 and HAM3 have drifted apart by 10 urad, which we think has been causing all the ALS issues that we've been trying to solve with PR3 alignment and ISCT1 table alignments.
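As a rough illustration of the quantities in that plot, the sketch below forms an RZ residual (live CPS RZ minus the fixed target), converts nrad to urad, and shows how opposite-sign drifts on two tables add up to their relative misalignment. The function names and the example numbers are made up for illustration and are not real channel data; the real RZ signal is built from the three horizontal CPS inside the front-end model, which is not reproduced here.

```python
NRAD_PER_URAD = 1000.0


def rz_residual_nrad(live_rz_nrad, target_rz_nrad):
    """Residual = live CPS RZ position minus the digitally fixed target (nrad)."""
    return live_rz_nrad - target_rz_nrad


def nrad_to_urad(value_nrad):
    """Convert nanoradians to microradians."""
    return value_nrad / NRAD_PER_URAD


def relative_drift_urad(residual_a_nrad, residual_b_nrad):
    """Yaw misalignment of table A relative to table B, in urad."""
    return nrad_to_urad(residual_a_nrad - residual_b_nrad)


# Hypothetical numbers, chosen only to mimic opposite-sign drifts.
ham2 = rz_residual_nrad(live_rz_nrad=6000.0, target_rz_nrad=1000.0)   # +5000 nrad
ham3 = rz_residual_nrad(live_rz_nrad=-4000.0, target_rz_nrad=1000.0)  # -5000 nrad

print(nrad_to_urad(30000.0))            # 30.0
print(relative_drift_urad(ham2, ham3))  # 10.0
```

Because the two residuals have opposite signs, the relative misalignment between the tables is the sum of their magnitudes, not the difference.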

Here's what we know (and things we've ruled out):

- All HAM ISIs except HAM8 -- HAM2, HAM3, HAM4, HAM5, HAM6, and HAM7 -- show this slow accumulation of drift in RZ.

- the degree to which they drift is not consistent between chambers. Some chambers drift as much as 30 urad, some as little as 0.5 urad, before the drift gets reset.

- the sign of the drift (either +RZ or -RZ) is inconsistent between chambers, but for a given chamber is consistent between drift resets.

- HAM2, HAM5, HAM6, and HAM7 consistently drift in +RZ; HAM3 and HAM4 consistently drift in -RZ.

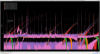

- On a linear y-scale, by eye, the drift looks "exponential," but it's not logarithmic with base 10. See 2023-05-26_HAMISI_RZ_Residual_sinceNov2023_logyaxis.png for the same drift, but with a log-base-10 y axis.

- the rate of accumulation appears inconsistent between chambers and non-linear, but for verbal approximation for all chambers the rate could be generalized as 0.5 urad/week or 5 urad/month.

- We have confirmed (as best we can) with SUS top-mass alignment slider drive and optical levers that this drift in the RZ residual causes a real drift in table alignment.

- The story is *always* muddy with these SUS alignment sliders and optical levers over the course of months, but we convinced ourselves with front-end model restarts that a model restart restores the yaw alignment of the table.

- The story of "when did this start?" is muddied by chamber vents, but we believe, prior to ~Nov 2023, we did NOT see any of this drift.

- We DID NOT see this in O3A or O3B in H1

- Given this kind of time-scale of consistent accumulation of drift, and the facts that

- we don't see this at L1,

- HAM8 is not synchronized and it's the only one not drifting

the first thing we pointed our fingers at was the CPS timing (L1 has *not* synchronized their CPS), thinking that this was a slow de-synchronization of the timing system.
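To put a number like the "0.5 urad/week" quoted above on a given chamber, one option is a least-squares line fit to the RZ residual trend since the last reset. The sketch below is only that: a plain-Python slope estimate run on a synthetic, perfectly linear trend (not real data), with a hypothetical function name. Since the real drift looks non-linear, a fit like this only gives an average rate over the fitted span.

```python
def drift_rate_urad_per_week(t_days, residual_nrad):
    """Least-squares slope of residual vs. time, converted to urad/week.

    t_days:        sample times in days since the last reset
    residual_nrad: RZ residual samples in nanoradians
    """
    n = len(t_days)
    t_mean = sum(t_days) / n
    r_mean = sum(residual_nrad) / n
    num = sum((t - t_mean) * (r - r_mean) for t, r in zip(t_days, residual_nrad))
    den = sum((t - t_mean) ** 2 for t in t_days)
    slope_nrad_per_day = num / den
    return slope_nrad_per_day * 7.0 / 1000.0  # nrad/day -> urad/week


# Synthetic trend (NOT real data): 500 nrad/week of linear drift over six weeks.
t_days = [float(d) for d in range(42)]
residual_nrad = [(500.0 / 7.0) * t for t in t_days]

rate = drift_rate_urad_per_week(t_days, residual_nrad)
print(f"{rate:.2f} urad/week")  # 0.50 urad/week
```

Fitting each chamber separately keeps the sign of the slope, which matches the observation that each chamber drifts in its own consistent direction.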

We're convinced it's NOT the CPS timing. Here's why.

- We know the problem is ONLY with the H1 HAM ISIs, in the horizontal CPS, most easily seen in the RZ DOF

- We DO NOT see this in any BSC-ISIs

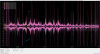

- We DO NOT see any drifts in these HAM-ISI vertical residuals. (see Third Attachment)

- We DO NOT see any drifts in a quick recent trend of L1's HAM-ISIs RZ sensors.

- We know that the analog arrangement of the timing synchronization has grown organically enough that there's *nothing* in common across all 6 corner station / LVEA HAM2-7 ISIs.

- The BSC and HAM ISIs are synchronized with two separate CPS fan out chassis. (OK, that's the one thing that the HAMs have in common)

- However, each HAM ISI's six CPS readouts are grouped into two analog satellite amplifiers (crates, racks), each of which has 4 channels.

- The sensors are grouped in pairs of horizontal and vertical, i.e. "H1V1" for corner 1's horz. and vert. sensors, so verticals are synchronized in the same way as horizontals, and they're spread differently across the 8 channels available

- Here's the organic layout:

- HAMs 2, 3, 7, and 8's channels are grouped (H1V1 H3V3) and (H2V2 xxxx)

- HAMs 4, 5, and 6's channels are grouped (H1V1 H2V2) and (H3V3 xxxx)

- HAMs 2, 3, 4, 5, and 6 use the older-style shielded coax cabling system

- HAMs 7 and 8 are the newer triax cabling system

- HAMs 7 and 8 verticals are FINE sensors, rather than COARSE sensors

- again HAM 8 is NOT synchronized

- An odd additional symptom of this drift is that the high-frequency noise floor of the sensor is increasing. However, this is *also* consistent with the gaps between the horizontal sensors increasing because of the RZ drift.

- The drift resets when the front-end model is restarted:

- The times that the drifts reset are:

May 23 2023 15:40 UTC HAM6 reset [h1seiham16 computer restart LHO:69843]

May 10 2023 20:27 UTC HAM45 reset [partial corner station dolphin crash LHO:69492]

Apr 11 2023 15:09 UTC ALL HAMs reset [RCG upgrade LHO:68595]

Feb 14 2023 18:00 UTC [RCG upgrade LHO:67411]

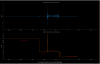

- Seeing this, we asked Erik to restart the HAMs 2-7 front-end models. The best "out of loop" metric we have for this is the optical lever position for PR3 and SR3.

- pr3_oplev_ham2_restart.png PR3 position during the HAM2 reboot reports muddy information. Jim turned off the ISI HAM2 RZ loop alone (which takes the CPS position drift out of the feedback), and we saw the optical lever change. Then we turned off all the isolation loops and saw no change in position. Then we restarted the model and ... it came back to a DIFFERENT position, matching neither the drifted nor the non-isolated position. Also, Sheila has reason to believe that the optical lever calibration gain is off by as much as a factor of 10, so 1 urad reported by the optical lever is actually 10 urad of real motion. #facepalm #muddywaters

- sr3_oplev_ham5_restart.png SR3 position didn't change that much after the restart because the drift wasn't that large to begin with #facepalm #muddywaters

- The real proof that this reset worked came after all of today's HAM2-7 model restarts, which reset the table alignments. We then restored the PR3 and SR3 alignment sliders to their values from a time when all the tables had just been reset (Apr 13 2023 03:00 UTC), and we immediately recovered all signals on ISCT1.
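Since the drift accumulates from each chamber's last reset, a quick way to compare chambers is to tabulate how long each had been drifting before today's restarts. The sketch below uses the reset times listed above; the helper names and the reference time `now` are assumptions for illustration (the date is taken from the attachment filenames, the time of day is arbitrary).

```python
from datetime import datetime, timezone


def utc(year, month, day, hour, minute):
    """Build a timezone-aware UTC timestamp."""
    return datetime(year, month, day, hour, minute, tzinfo=timezone.utc)


# Most recent reset per chamber before today's restarts, from the list above.
# HAM8 is left out because it does not show the drift.
ALL_HAMS_RESET = utc(2023, 4, 11, 15, 9)   # RCG upgrade
LAST_RESET = {
    "HAM2": ALL_HAMS_RESET,
    "HAM3": ALL_HAMS_RESET,
    "HAM4": utc(2023, 5, 10, 20, 27),      # partial dolphin crash
    "HAM5": utc(2023, 5, 10, 20, 27),
    "HAM6": utc(2023, 5, 23, 15, 40),      # computer restart
    "HAM7": ALL_HAMS_RESET,
}


def weeks_since_reset(chamber, now):
    """Elapsed time since the chamber's last reset, in weeks."""
    elapsed = now - LAST_RESET[chamber]
    return elapsed.total_seconds() / (7 * 24 * 3600)


# Assumed reference time (hypothetical): the date in the attachment filenames.
now = utc(2023, 5, 26, 0, 0)
for chamber in sorted(LAST_RESET):
    print(f"{chamber}: {weeks_since_reset(chamber, now):.1f} weeks since last reset")
```

Chambers with a more recent reset have had less time to accumulate drift, which is worth keeping in mind when comparing drift magnitudes between chambers.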

And now we're almost to NOMINAL_LOW_NOISE, and we think we've temporarily solved the issue.

So, it's on Jim to investigate why these RZ CPS are causing a drift.

Given that it's not the timing system, our blame now falls solely on things digital.

He's got a few weeks before the drift gets bad again... Stay tuned.