Related alog: https://alog.ligo-wa.caltech.edu/aLOG/index.php?callRep=18269

Summary:

During long lock stretches in which POPAIR_B_RF90 slowly drifts up, SR3 pitches up. This appears to be real PIT motion rather than a bogus signal, even though neither ASC nor LSC acts on SR3.

Turning SR3 back to its original angle while in lock doesn't restore POP90 (see the alog by Evan linked above). So this PIT motion is not the cause of the POP90 drift. Rather, both are the result of something else that affects SR3 and POP90.

As Evan pointed out, the time constant of the in-lock SR3 drift is much longer than that of SR3's recovery after lock loss. The former looks as if it could be set by mirror heating, and the latter by wire cooling.
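
One way to put numbers on the two time constants is to fit a single exponential to each stretch: the in-lock drift and the post-lock-loss recovery. Below is a minimal sketch. It assumes each stretch is well described by one first-order exponential toward a known asymptote, which could for example be read off the end of the stretch. `estimate_time_constant` is a hypothetical helper, not an existing LIGO tool.

```python
import math


def estimate_time_constant(times, values, final_value):
    """Estimate tau for x(t) = x_final + (x0 - x_final) * exp(-t / tau).

    Fits a straight line to ln|x(t) - x_final| versus t by ordinary least
    squares; the slope of that line is -1/tau. final_value (the asymptote)
    is assumed to be known.
    """
    xs, ys = [], []
    for t, v in zip(times, values):
        residual = abs(v - final_value)
        if residual > 0:  # the log is undefined exactly at the asymptote
            xs.append(t)
            ys.append(math.log(residual))
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two points away from the asymptote")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    if slope >= 0:
        raise ValueError("data do not relax toward final_value")
    return -1.0 / slope
```

For example, samples of 0.7 * (1 - exp(-t / 180)) with final_value = 0.7 give back 180, up to rounding, in whatever time unit `times` uses. Comparing the in-lock and post-lock-loss values of tau would quantify the asymmetry described above.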

According to Kiwamu, the power buildup in the SRC itself is only about 120 mW. This is probably not enough to heat the wires through scattered light.

Based on these observations, one possibility Stefan came up with is that heating of the ITMs changes the amount of higher-order-mode light spilling over onto the SR3 wires, causing PIT. Since the wires heat up much faster than the mirror does, the in-lock time constant is set by the mirror heating. Once the lock is lost, the power disappears immediately, and the cooling is determined by the wire alone.
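
The time-constant argument above can be checked with a toy model. It is a hypothetical two-stage, first-order model in normalized units with made-up time constants, not a fit to the data. While the light is on, the ITM thermal state relaxes with tau_itm. The heat load on the SR3 wires is taken as power times that state. The wire temperature, assumed proportional to SR3 PIT, follows the heat load with tau_wire.

```python
import math


def simulate(tau_itm, tau_wire, t_lock, t_after, dt=0.1):
    """Toy two-stage thermal model (hypothetical, normalized units).

    h: ITM thermal state, 0 = cold, 1 = equilibrium at full power.
    q = power * h: HOM heat load spilling onto the SR3 wires.
    w: wire temperature, assumed proportional to SR3 PIT.
    Returns (times, w samples, time of lock loss).
    """
    n_lock = round(t_lock / dt)
    n_total = n_lock + round(t_after / dt)
    h = w = 0.0
    times, wire = [], []
    for n in range(n_total):
        power = 1.0 if n < n_lock else 0.0   # power vanishes at once on lock loss
        q = power * h                        # no light, no spill-over heating
        h += dt * (power - h) / tau_itm      # ITM heats (or cools) with tau_itm
        w += dt * (q - w) / tau_wire         # wires follow the heat load with tau_wire
        times.append((n + 1) * dt)
        wire.append(w)
    return times, wire, n_lock * dt


def first_time_at_or_above(times, values, level):
    """First sample time at which values reach level (None if never)."""
    for t, v in zip(times, values):
        if v >= level:
            return t
    return None


def e_folding_after(times, values, t_start):
    """Time after t_start for values to fall to 1/e of their value at t_start."""
    t0 = v0 = None
    for t, v in zip(times, values):
        if v0 is None:
            if t >= t_start - 1e-9:   # tolerance for float time stamps
                t0, v0 = t, v
        elif v <= v0 / math.e:
            return t - t0
    return None
```

Take tau_itm = 120 and tau_wire = 5, in arbitrary units. In lock, the wire response reaches 1 - 1/e at roughly tau_itm + tau_wire. After lock loss, it falls by 1/e in about tau_wire. That is the slow-in-lock, fast-after-lock-loss asymmetry described above.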

We could play with TCS during a long lock to see how POP90 responds, and/or scan the OMC to see how the mode content changes during a long RF lock.

Details:

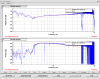

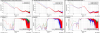

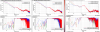

The two plots show the same set of signals. The first one is from last night, and the second one is from when Evan changed SR3 PIT manually while in lock.

In the first plot, POP90 (ch. 2), SR3 OPLEV PIT (ch. 6) and the SR3 M3 witness BOSEM PIT signal (ch. 9) are almost perfectly correlated. In this case SR3 moved by 0.7 urad. The fact that both the oplev and the BOSEM see the motion suggests that it is real. Also, suppose the oplev signal were caused by, e.g., scattered light caught by the oplev. Then the oplev sum (ch. 8) should change by at least 2% over the lock stretch, but in reality it changed by less than 0.2%.
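
The correlation and oplev-sum checks above each reduce to a simple number. Below is a sketch. It assumes the channels have already been fetched and resampled onto a common time base (e.g. minute trends). The function names are hypothetical, and the 2% default is the estimate quoted above. A high correlation between slowly drifting channels only shows a common trend, not causation. That is consistent with the SR3 test, where restoring SR3 did not restore POP90.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sample lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length series with at least 2 samples")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def fractional_change(series):
    """Relative change (last - first) / first over a stretch."""
    return (series[-1] - series[0]) / series[0]


def scatter_hypothesis_disfavored(sum_change, expected_min_change=0.02):
    """True if the oplev sum changed by less than the scatter hypothesis needs.

    expected_min_change defaults to the ~2% estimate quoted in this entry.
    """
    return abs(sum_change) < expected_min_change
```

For example, `fractional_change([100.0, 100.15])` is about 0.0015 (0.15%), which `scatter_hypothesis_disfavored` flags as too small for the scattered-light explanation.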

SR2 PIT (ch. 13) and SRM YAW (ch. 12) are also coherent with POP90.
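
One way to quantify "coherent" is the magnitude-squared coherence |Pxy|^2 / (Pxx Pyy), averaged over segments. In practice one would use an existing tool such as `scipy.signal.coherence` or DTT. The dependency-free version below is only a sketch, with hypothetical function names and synthetic example signals rather than real channels. For drifts lasting hours, the inputs would have to be long stretches of trend data, so that several segments fit within one lock.

```python
import cmath
import math
import random


def _dft_half(segment):
    """Naive DFT of a real segment, bins 0..N/2 (fine for short segments)."""
    n = len(segment)
    return [
        sum(segment[k] * cmath.exp(-2j * math.pi * f * k / n) for k in range(n))
        for f in range(n // 2 + 1)
    ]


def coherence(x, y, seg_len):
    """Magnitude-squared coherence |Pxy|^2 / (Pxx * Pyy) per frequency bin.

    Spectra are averaged over non-overlapping, mean-subtracted,
    Hann-windowed segments of length seg_len (Welch-style, no overlap).
    """
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    n_seg = len(x) // seg_len
    if n_seg < 2:
        # With a single segment the estimate is identically 1.
        raise ValueError("need at least two segments")
    window = [0.5 - 0.5 * math.cos(2 * math.pi * k / seg_len) for k in range(seg_len)]
    n_bins = seg_len // 2 + 1
    pxx = [0.0] * n_bins
    pyy = [0.0] * n_bins
    pxy = [0j] * n_bins
    for s in range(n_seg):
        xs = x[s * seg_len:(s + 1) * seg_len]
        ys = y[s * seg_len:(s + 1) * seg_len]
        mx = sum(xs) / seg_len
        my = sum(ys) / seg_len
        fx = _dft_half([(v - mx) * w for v, w in zip(xs, window)])
        fy = _dft_half([(v - my) * w for v, w in zip(ys, window)])
        for f in range(n_bins):
            pxx[f] += abs(fx[f]) ** 2
            pyy[f] += abs(fy[f]) ** 2
            pxy[f] += fx[f] * fy[f].conjugate()
    return [
        abs(pxy[f]) ** 2 / (pxx[f] * pyy[f]) if pxx[f] * pyy[f] > 0 else 0.0
        for f in range(n_bins)
    ]


def example_signals(n=1024, seed=0):
    """Synthetic stand-ins (not real channels): y tracks x, z is independent."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(n)]
    y = [2.0 * v + 0.1 * rng.gauss(0.0, 1.0) for v in x]
    z = [rng.gauss(0.0, 1.0) for _ in range(n)]
    return x, y, z
```

With 32-sample segments, `x` and `y` come out close to 1 in every bin. The independent pair `x` and `z` averages near 1/(number of segments), which is the usual floor for averaged coherence estimates.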

SR2 PIT is presumably the result of ASC feeding back to SR2: in the second plot, when Evan turned SR3 back, SR2 also moved back.

SRM YAW might be an effect similar to that seen on SR3.