TITLE: 12/06 Owl Shift: 08:00-16:00 UTC (00:00-08:00 PST), all times posted in UTC

STATE of H1: Observing at 59.5354Mpc

INCOMING OPERATOR: Jeff

SHIFT SUMMARY: Aside from one lockloss that I can't explain, we have been locked the whole shift. The range started falling around 12:30 and has come back up a bit, but not fully. I have run the DARM_a2l_passive.xml every once in a while and it came out with different results almost every time, so I wasn't sure if I should run it at the beginning of the shift, but I ran it after LLO dropped out and the range wasn't recovering. Not sure if it helped.

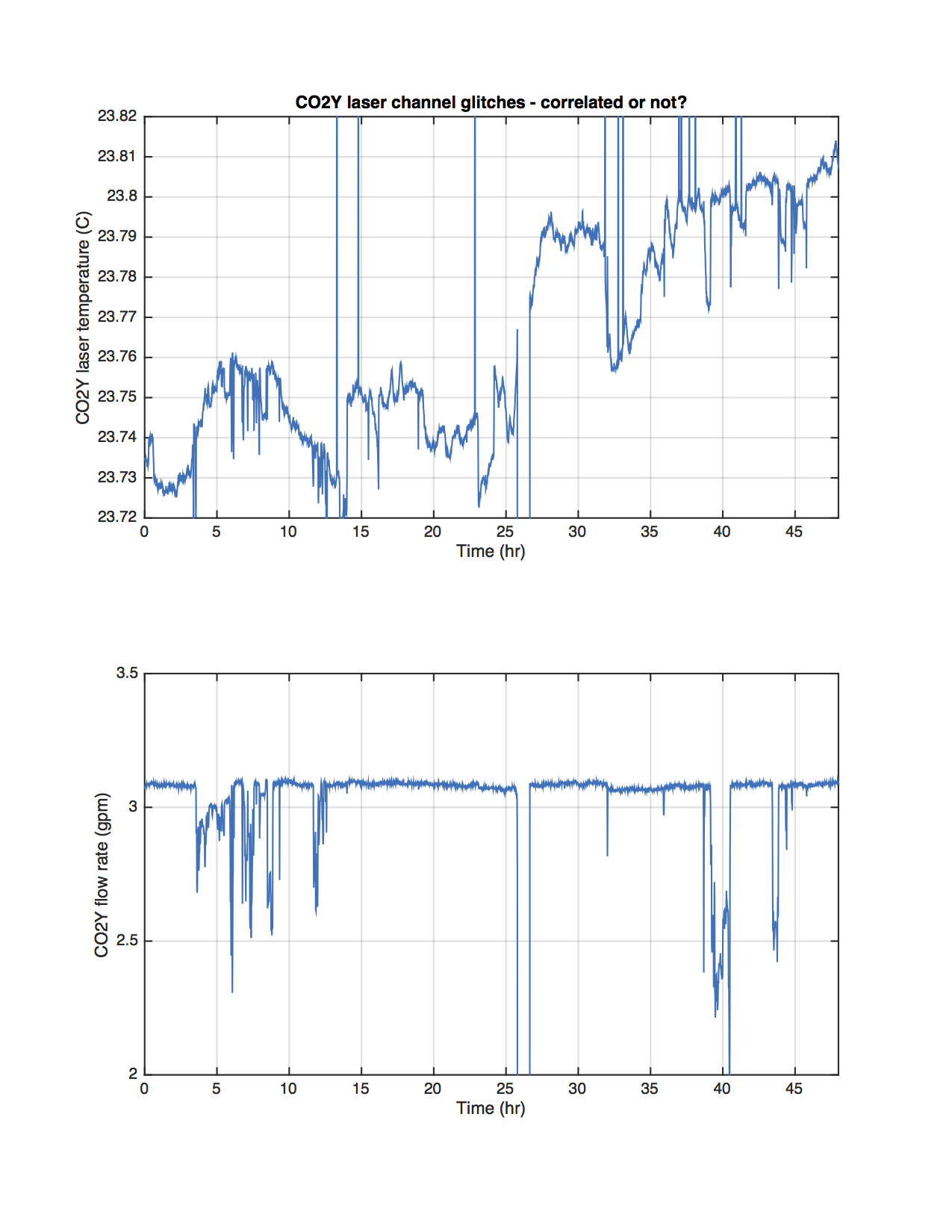

TCSY chiller flow has been an issue throughout the night, but seems to get stable for a few hours at a time.

LOG:

- 08:26 TCSY Chiller flow low alarm

- 10:06 Lockloss

- 10:36 Observing*

- 15:02 Out of Observing to run a2l hoping it might help with the range.

- 15:10 Observing*

- 15:22 Brief CP4 alarm

*All Observing times are with ion pump near BSC8 running.

But don't worry, we are back to Observing at 10:36 UTC.

I haven't seen anything of note for the lockloss. I checked the usual templates, with some screenshots of them attached.

This seems like another example of the SR3 problem. (alog 32220 FRS 6852)

If you want to check for this kind of lockloss, zoom the time axis right around the lockloss time to see if the SR3 sensors change fractions of a second before the lockloss.
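For anyone who would rather script that check than zoom by eye, here is a minimal sketch in Python. It assumes the sensor data has already been loaded into plain arrays of sample times and values (how the channels are fetched is not covered here), and the function name, window lengths, and 5-sigma threshold are all hypothetical choices for illustration, not anything taken from the existing lockloss tools. It simply compares the last second before the lockloss against a quiet baseline just before that.

```python
import numpy as np

def lead_time_before_lockloss(t, x, t_lockloss, window=1.0, baseline=10.0, n_sigma=5.0):
    """Hypothetical helper: return how many seconds before the lockloss the
    signal first leaves its baseline band, or None if it never does.

    t, x       : sample times (s) and sensor values, same length
    t_lockloss : lockloss time, in the same time units as t
    window     : span right before the lockloss to inspect (assumed 1 s)
    baseline   : span before the window used as the quiet reference (assumed 10 s)
    n_sigma    : deviation threshold in baseline standard deviations (assumed 5)
    """
    t = np.asarray(t, dtype=float)
    x = np.asarray(x, dtype=float)

    # Quiet reference: the stretch that ends where the inspected window begins.
    ref = x[(t >= t_lockloss - window - baseline) & (t < t_lockloss - window)]
    # Inspected window: the last `window` seconds before the lockloss.
    pre = (t >= t_lockloss - window) & (t < t_lockloss)
    if ref.size < 2 or not pre.any():
        return None

    mu = ref.mean()
    sigma = ref.std()
    if sigma == 0.0:
        sigma = np.finfo(float).tiny  # avoid a zero-width band on flat data

    outliers = np.abs(x[pre] - mu) > n_sigma * sigma
    if not outliers.any():
        return None
    # Time of the first sample outside the band, expressed as a lead time.
    return t_lockloss - t[pre][outliers][0]
```

Running this on each sensor for the same lockloss time and comparing the returned lead times would show which sensors moved first, if any. The threshold would need tuning against real data before trusting the result.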

See my note in alog 32220, namely that Sheila and I looked again and we see that the glitch is on the T1 and LF coils, which share a line of electronics. The second lockloss, the one TJ started this log with (12/06), is somewhat inconclusively linked to SR3: no "glitches" like the first one on 12/05, but instead all 6 top mass SR3 OSEMs show motion before lockloss.

Sheila, Betsy

Attached is a ~5 day trend of the SR3 top stage OSEMs. T1 and LF do have an overall step in the min/max of their signals, which happened at the time of the lockloss that showed the SR3 glitch (12/05 16:02 UTC)...