State of H1: did make it to DC Readout, but with issues all along the way

H1 history today:

- laser was restored

- X arm in green had trouble with WFS driving it into lock loss

- Evan, Jenne, and I worked on alignment

- ultimately it reached 0.95, short of the 1.01 that's typical

- decision was to try locking

- H1 made it to engaging ASC with some help from Jenne

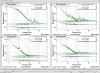

- at or right before DC readout, bounce and roll modes were a problem in every lock

- decision at about 3:30 PT (22:30 UTC) was to do another initial alignment

Currently:

- Jenne and others in LVEA to look at electronics at HAM6

Improved the whitening overview screen to include error flags.

AS_A_45 whitening chassis was swapped out - alog 30386.

We'll "cheat around" the POP_X situation for tonight by using the one whitening filter that's stuck on, and not the one that we usually use. Marc and Fil will look at this once they've finished debugging the original AS_A_45 chassis.

Redid the dark offsets for all AS diodes.