While Daniel, Sheila and I were looking at some jitter signals, we noticed that the SUM channels that are used to normalize the DC QPDs do not have any lowpassing!! The transmon QPDs already had these more modern parts, but they were never back-propagated to the ASC model.

Also, while the trans QPDs had the right model parts, there aren't actually any lowpasses installed in their filter banks. We'll want to give ourselves ~1 Hz cutoffs to eliminate all the high-frequency junk, while still allowing the normalization to track the overall power on the QPDs.

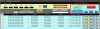

I have now replaced the old QPD parts with the new ones in the ASC model, and successfully compiled the ASC model. It has not yet been installed / restarted.

I'm almost done making the new screens, and should be done with another ~30 min of work tomorrow.

To be consistent with the transmon QPDs, we will now be saving the _NSUM_OUT channels rather than the _SUM channels. The _SUM channels will get the lowpasses, since they are used for the actual normalization of the pitch and yaw signals, so they're not what we'll want to look at for spectra and other things. The _NSUM channels have the ability to be normalized with the PSL input power, but we are not using that capability (and haven't been with the transmon QPDs either), so in practice they're just the sum, with no lowpass.
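For anyone who wants to play with the idea offline, here is a rough sketch of the signal flow described above: the unfiltered sum (what _NSUM effectively is for us) versus a lowpassed sum used to normalize pitch and yaw. This is not the front-end code or the actual filter that will be installed. The single-pole filter, the 2048 Hz sample rate, the quadrant ordering (UL, UR, LL, LR), the sign conventions, and the function names are all assumptions for illustration.

```python
import numpy as np

def one_pole_lowpass(x, cutoff_hz, fs_hz):
    """Single-pole IIR lowpass: y[n] = y[n-1] + a * (x[n] - y[n-1])."""
    a = 1.0 - np.exp(-2.0 * np.pi * cutoff_hz / fs_hz)
    y = np.empty(len(x), dtype=float)
    acc = float(x[0])  # start at the first sample to avoid a startup step
    for i, xi in enumerate(x):
        acc += a * (xi - acc)
        y[i] = acc
    return y

def qpd_signals(segs, cutoff_hz=1.0, fs_hz=2048.0):
    """Return (pit, yaw, nsum, sum_lp) from four quadrant time series.

    segs has shape (4, N); quadrant order UL, UR, LL, LR is an assumption.
    """
    ul, ur, ll, lr = np.asarray(segs, dtype=float)
    nsum = ul + ur + ll + lr                            # plain sum, no lowpass
    sum_lp = one_pole_lowpass(nsum, cutoff_hz, fs_hz)   # sum used for normalization
    pit = ((ul + ur) - (ll + lr)) / sum_lp              # top minus bottom (assumed sign)
    yaw = ((ul + ll) - (ur + lr)) / sum_lp              # left minus right (assumed sign)
    return pit, yaw, nsum, sum_lp

if __name__ == "__main__":
    fs = 2048.0
    t = np.arange(int(2 * fs)) / fs
    ripple = 0.05 * np.sin(2 * np.pi * 100.0 * t)       # common-mode 100 Hz intensity noise
    segs = np.array([2.0 + ripple, 2.0 + ripple, 1.0 + ripple, 1.0 + ripple])
    pit, yaw, nsum, sum_lp = qpd_signals(segs, cutoff_hz=1.0, fs_hz=fs)
    print("std of unfiltered sum:", np.std(nsum))
    print("std of lowpassed sum: ", np.std(sum_lp))
```

The point of the lowpass is visible in the two printed numbers: the high-frequency ripple on the total power is strongly suppressed in the normalization path, while the unfiltered sum still carries it and so remains the useful thing to look at for spectra.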

The affected QPDs are:

REFL_A_DC, REFL_B_DC, AS_A_DC, AS_B_DC, POP_A, POP_B, AS_C, OMC_A, OMC_B, OMCR_A, OMCR_B, AS_D_DC (even though we don't use this last one).

Both HWSX and HWSY centroid references were re-initialized after the IFO had been unlocked for 2 hours. ITMs, BS, and SR3 are all aligned.

16:49 Re-initialized HWSX centroids ref

16:51 Re-initialized HWSY centroids ref