C.Swain, L.Dartez, J.Kissel

Finished characterizing the spare whitening chassis, OMC DCPD S2300004.

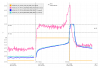

- Transfer functions and residuals shown in:

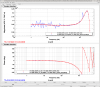

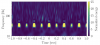

- Output Noise Measurements shown in:

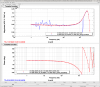

- Noise comparison between S2300004 and S2300003, gathered by Jeff Kissel - S2300003 Noise Data - shown in:

The last two files are text documents containing updated notes on gathering the transfer function measurement data and noise measurement data of the whitening chassis, respectively.

Adding more details to OMC DCPD S2300004 Characterization for better accessibility:

Whitening ON Fit:

| Channel | Fit Zeros (Hz) | Fit Poles (Hz) | Fit Gain |
| --- | --- | --- | --- |
| DCPDA | [0.997] | [9.904e+00, 4.401e+04] | 435752.409 |
| DCPDB | [1.006] | [9.994e+00, 4.377e+04] | 433177.144 |

These results align very well with the goal of a [1:10] whitening chassis.
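For readers who want to see what a [1:10] whitening response looks like, here is a minimal sketch that evaluates the model z:p = [1 : (10, 44e3)] Hz at a few frequencies. It is not the fitting code used for this entry. The function names are hypothetical, and the response is normalized to unity gain at DC, which is an assumption made for illustration and does not reproduce the gain convention of the fit values in the table.

```python
import math

# Model roots in Hz, taken from the z:p = [1 : (10, 44e3)] Hz design in this entry.
ZEROS_HZ = [1.0]
POLES_HZ = [10.0, 44e3]


def whitening_response(f_hz, zeros=ZEROS_HZ, poles=POLES_HZ):
    """Complex response at frequency f_hz.

    Assumption: each root is written as (1 + i f / f0), so the gain at DC is 1.
    This normalization is for illustration only.
    """
    s = 1j * f_hz
    num = 1.0 + 0j
    for z in zeros:
        num *= (s + z) / z
    den = 1.0 + 0j
    for p in poles:
        den *= (s + p) / p
    return num / den


def magnitude_db(f_hz):
    """Magnitude of the model response in dB."""
    return 20.0 * math.log10(abs(whitening_response(f_hz)))


if __name__ == "__main__":
    for f in (0.1, 1.0, 10.0, 100.0, 1e3, 1e4):
        print(f"{f:>8.1f} Hz  {magnitude_db(f):6.2f} dB")
```

Under this normalization the magnitude rises from 1 at DC to roughly 10 (about 20 dB) between the 10 Hz pole and the 44 kHz pole, which is where the factor of ten in "[1:10]" shows up.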

The fit zeros differ from the 1 Hz model zero by [0.3, 0.6] % for DCPDA and DCPDB, respectively. The low-frequency fit poles differ from the 10 Hz model pole by [0.96, 0.06] %, and the high-frequency fit poles differ from the 44 kHz model pole by roughly [0.02, 0.5] %, again for DCPDA and DCPDB, respectively.

As all the differences are below 1%, the agreement between the fit and the model is satisfactory.
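The percentages above can be reproduced with a short calculation. The sketch below is one way to do it, not the script used for this entry. The fit values are copied from the Whitening ON fit table and the model values from the z:p = [1 : (10, 44e3)] Hz design. The dictionary keys and function names are hypothetical labels chosen for this example.

```python
# Model roots in Hz from the z:p = [1 : (10, 44e3)] Hz design.
MODEL_HZ = {"zero": 1.0, "pole_low": 10.0, "pole_high": 44e3}

# Fit roots in Hz, copied from the Whitening ON fit table.
FITS_HZ = {
    "DCPDA": {"zero": 0.997, "pole_low": 9.904, "pole_high": 4.401e4},
    "DCPDB": {"zero": 1.006, "pole_low": 9.994, "pole_high": 4.377e4},
}


def percent_difference(fit, model):
    """Absolute difference between fit and model, as a percentage of the model."""
    return abs(fit - model) / model * 100.0


def deviations(fits=FITS_HZ, model=MODEL_HZ):
    """Percent difference from the model for every root of every channel."""
    return {
        channel: {name: percent_difference(value, model[name])
                  for name, value in roots.items()}
        for channel, roots in fits.items()
    }


if __name__ == "__main__":
    for channel, devs in deviations().items():
        for name, dev in devs.items():
            print(f"{channel} {name}: {dev:.2f} %")
```

The 1% threshold can then be checked for all roots at once, for example with `all(d < 1.0 for devs in deviations().values() for d in devs.values())`.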

Noise Measurements:

Average ASD for Whitening ON: 300 nV/rtHz

Average ASD for Whitening OFF: 30 nV/rtHz

Average ASD SR785 Noise Floor Level: 20 nV/rtHz

The Whitening ON and Whitening OFF noise is consistent with the OMC DCPD S2300003 noise measurements. The SR785 noise floor is somewhat higher than in the S2300003 measurements (~20 nV/rtHz compared to ~6 nV/rtHz). This difference could be caused by a difference in measurement setup between the S2300003 and S2300004 data.

Overall:

The measurements of the OMC DCPD S2300004 whitening chassis align well with what is to be expected from a z:p = [1 : (10, 44e3)] Hz whitening filter, with an average ASD noise floor of 300 nV/rtHz in the Whitening ON state.

The SR785 noise floor level is higher than previously recorded with the OMC DCPD S2300003 whitening chassis, but it does not contribute to either the Whitening OFF or Whitening ON states, so this difference may effectively be ignored.