J. Kissel, L. Dartez, L. Sun, M. Wade, L. Wade

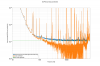

After Louis' beautiful report of the *measured* systematic error in the H1:GDS-CALIB_STRAIN_CLEANED channel (LHO:70705) after the calibration update (LHO:70693) for the decrease to 60 W (LHO:70648), I wondered what the state of our *modeled* systematic error is; for this is the metric of fidelity that the search groups use.

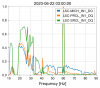

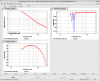

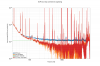

After the IFO thermalizes, the modeled systematic error continues to agree with the measured error below ~30 Hz and above ~300 Hz, where the systematic error is low. However, the model continues to disagree with the measurement in the 50 to 150 Hz region. There the measured error is large-ish (though not abnormally so), ~5-6% in magnitude and 2-3 deg in phase, while the model claims no error at all.
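For concreteness, a systematic error at each frequency is a complex ratio eta (eta = 1 means no error), and figures like "~5-6%, 2-3 deg" are its magnitude deviation from unity in percent and its phase in degrees. A minimal sketch of that conversion, assuming eta is already in hand as a complex number; the helper name `eta_to_percent_deg` is hypothetical and not a pyDARM function:

```python
import numpy as np

def eta_to_percent_deg(eta):
    """Return (magnitude error in percent, phase error in degrees) for a
    complex systematic error eta, where eta == 1 means no error."""
    eta = np.asarray(eta, dtype=complex)
    mag_pct = (np.abs(eta) - 1.0) * 100.0      # deviation of |eta| from unity
    phase_deg = np.angle(eta, deg=True)        # phase of eta in degrees
    return mag_pct, phase_deg

# Hypothetical error of 6% and 2.5 deg, roughly the size measured near 80 Hz.
eta_80hz = 1.06 * np.exp(1j * np.deg2rad(2.5))
mag_pct, phase_deg = eta_to_percent_deg(eta_80hz)
print(f"{float(mag_pct):.1f} %, {float(phase_deg):.1f} deg")
```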

As Maddie and Les advertised in LHO:70666, there is now the following web page,

https://ldas-jobs.ligo-wa.caltech.edu/~cal/

where you may now find a *constant* comparison between

:: the *measured* systematic error in the response function, and associated uncertainty -- informed by the coherent transfer function between the PCAL calibration lines, which are sporadically placed in frequency throughout the DARM sensitivity band, and the final data product, GDS-CALIB_STRAIN (be it "no extension," _NOLINES, or _CLEANED, they all have the same calibration and thus the same systematic error).

:: the *modeled* systematic error in the response function, and associated uncertainty -- informed, as in O3, by

- The "reference model" parameter set, in this case that found in report 20230621T211522Z/pydarm_H1.ini

- The time-independent "free parameter" posterior distributions from the MCMC runs used to determine

. the optical gain and DARM coupled cavity pole frequency

. the three DARM actuator stage strengths

~cal/archive/H1/reports/20230621T211522Z/

sensing_mcmc_chain.hdf5

actuation_L1_EX_mcmc_chain.hdf5, actuation_L2_EX_mcmc_chain.hdf5, actuation_L3_EX_mcmc_chain.hdf5

- The "unknown, static, frequency-dependent systematic error" from the GPR fits of the inventory of

. sensing function measurement / model (corrected for time-dependence)

. actuation function measurement / model (corrected for time-dependence)

~cal/archive/H1/reports/20230621T211522Z/

sensing_gpr.hdf5

actuation_L1_EX_gpr.hdf5

actuation_L2_EX_gpr.hdf5

actuation_L3_EX_gpr.hdf5

- The time-dependent correction factor (TDCF) *values* for the optical gain, the DARM coupled cavity pole frequency, and the three DARM actuator stage strengths

- The calibration-line-coherence-based uncertainty for the TDCFs

- The statistical uncertainty on the PCAL amplitude, a la G2301163, which is hard-coded into the default ~cal/archive/H1/ifo/pydarm_uncertainty_H1.ini under the [pcal] section as sys_unc = 0.0028 for H1
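A scalar like sys_unc can be read from an INI file with Python's standard configparser. A minimal sketch, assuming only the [pcal] section and sys_unc key named above; the rest of that file is not reproduced here, so the snippet parses an inline stand-in string rather than the real pydarm_uncertainty_H1.ini. Treating the value as a 1-sigma fractional uncertainty on the response magnitude is an illustrative assumption, not a statement of how pyDARM applies it.

```python
import configparser

# Stand-in for ~cal/archive/H1/ifo/pydarm_uncertainty_H1.ini; only the
# [pcal] section and the sys_unc key are taken from the text.
INI_TEXT = """
[pcal]
sys_unc = 0.0028
"""

config = configparser.ConfigParser()
config.read_string(INI_TEXT)
sys_unc = config.getfloat("pcal", "sys_unc")

# Assumption: interpret sys_unc as a 1-sigma fractional uncertainty, giving a
# symmetric band around a unity-magnitude response ratio.
lower, upper = 1.0 - sys_unc, 1.0 + sys_unc
print(f"PCAL 1-sigma band on |eta_R|: [{lower:.4f}, {upper:.4f}]")
```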

I attach two (thermalized) times of this comparison from the archive of these plots,

https://ldas-jobs.ligo-wa.caltech.edu/~cal/archive/H1/uncertainty/

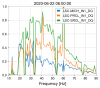

FIRST ATTACHMENT 2023-06-20 11:50 UTC -- IFO at 75W with 2023-05-17 (20230510T062635Z) calibration from LHO:69696

SECOND ATTACHMENT 2023-06-22 05:50 UTC -- IFO at 60W with 2023-06-21 (20230621T211522Z) calibration from LHO:70693

Note that in *these* plots both model and measurement are plotted as PCAL / GDS-CALIB_STRAIN, i.e. a multiplicative transfer function that, applied to the GDS-CALIB_STRAIN data stream, would make it more accurate. In LHO:70705, by contrast, the error is plotted as GDS-CALIB_STRAIN / PCAL, a transfer function one would *divide* into the data in order to make it more accurate.
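Because the two conventions are reciprocals, switching between them inverts the magnitude and flips the sign of the phase. A minimal sketch, using a hypothetical 6% / 2.5 deg error and a hypothetical helper name `flip_convention`:

```python
import numpy as np

def flip_convention(eta):
    """Convert between the PCAL/GDS and GDS/PCAL conventions.

    The two are reciprocals: the magnitude inverts and the phase changes sign.
    """
    return 1.0 / np.asarray(eta, dtype=complex)

# Hypothetical error expressed as PCAL / GDS-CALIB_STRAIN (multiply to correct).
eta_pcal_over_gds = 1.06 * np.exp(1j * np.deg2rad(2.5))
# The same error expressed as GDS-CALIB_STRAIN / PCAL (divide to correct).
eta_gds_over_pcal = flip_convention(eta_pcal_over_gds)

print(abs(eta_gds_over_pcal), np.angle(eta_gds_over_pcal, deg=True))
```

So a +6% error in one convention reads as 1/1.06, about 0.943 (roughly -5.7%), in the other, with the phase negated; the difference is small at this level but worth keeping in mind when comparing numbers across the two aLOGs.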

In both of these versions of the measurement, at both 75W and 60W, the model is discrepant from the measurements in similar ways between 50 and 150 Hz.

Since we have two clocks (model and measurement), it's tough to prove which one's right -- but I would point my finger to the systematic error model since it's far more complicated in construction than the measurements. Also, Louis calibrated his DTT template with very little effort (*just* the two poles at 1 Hz for PCAL, and 3995 m arm length to convert strain to displacement), and it agrees (if you take the inverse) with Maddie / Les's work calibrating the constant PCAL lines.
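The DTT-template calibration described above amounts to two steps: filter the PCAL readback through two real poles at 1 Hz (approximating the test mass's pendulum response, which falls as 1/f^2 above ~1 Hz) to get displacement, then divide by the 3995 m arm length to get strain. The measured error at a line is then the ratio of that PCAL-derived strain to GDS-CALIB_STRAIN at the line frequency. A minimal sketch, assuming the readback is in units these two steps turn into meters and that each pole has unit DC gain; DTT's actual gain and normalization conventions may differ, and the function names and line amplitudes are hypothetical.

```python
import numpy as np

ARM_LENGTH_M = 3995.0  # arm length used to convert displacement to strain
POLE_HZ = 1.0          # two real poles at 1 Hz

def pcal_displacement_filter(f):
    """Two real poles at 1 Hz, each normalized to unit DC gain (assumption)."""
    f = np.asarray(f, dtype=float)
    return 1.0 / (1.0 + 1j * f / POLE_HZ) ** 2

def pcal_strain(f, pcal_readback):
    """Hypothetical conversion of a complex PCAL line amplitude at f to strain."""
    displacement_m = pcal_readback * pcal_displacement_filter(f)
    return displacement_m / ARM_LENGTH_M

# Usage with made-up complex line amplitudes at a hypothetical 100 Hz line.
f_line = 100.0
pcal_amp = 2.0e-3 + 0.0j                         # hypothetical readback amplitude
gds_amp = pcal_strain(f_line, pcal_amp) / 1.06   # pretend GDS is low by 1.06
eta = pcal_strain(f_line, pcal_amp) / gds_amp    # PCAL / GDS-CALIB_STRAIN convention
print(abs(eta), np.angle(eta, deg=True))
```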

Given that the high-frequency end of this comparison agrees (above 500 Hz), my guess is that there's something wrong with the modeled actuator.

Further, the *actual* measured systematic error is large (~6% at 80 Hz), the actuator is challenging to model, the actuator is contributing substantially to the response at those frequencies, and my experience from fudging the calibration indicates that one can manipulate the systematic error in those regions by adjusting the actuator gains.
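The logic of pointing at the actuator follows from how the response function R = (1 + G)/C, with open-loop gain G = C D A, depends on each piece. If the true sensing and actuation are k_C C and k_A A, then eta_R = R_true / R_model = (1 + k_C k_A G) / (k_C (1 + G)). Where |G| >> 1 (low frequency) eta_R tends to k_A, and where |G| << 1 (high frequency) it tends to 1/k_C. A toy sketch, assuming scalar gain errors and a hypothetical integrator-like G with a 60 Hz unity-gain frequency; this is not the real H1 DARM loop, and frequency-dependent errors would localize the effect to narrower bands.

```python
import numpy as np

def response_error(G_model, k_sense=1.0, k_act=1.0):
    """eta_R = R_true / R_model for R = (1 + G) / C with G = C * D * A,
    when the true sensing is k_sense * C and the true actuation is k_act * A."""
    G_model = np.asarray(G_model, dtype=complex)
    G_true = k_sense * k_act * G_model
    return (1.0 + G_true) / (k_sense * (1.0 + G_model))

# Toy open-loop gain: a pure integrator with a hypothetical 60 Hz
# unity-gain frequency; it only illustrates the low/high-frequency trend.
f = np.array([5.0, 60.0, 2000.0])
G = 60.0 / (1j * f)

eta_act = response_error(G, k_act=1.05)      # 5% actuator strength error
eta_sense = response_error(G, k_sense=1.05)  # 5% optical gain error

for fi, ea, es in zip(f, eta_act, eta_sense):
    print(f"{fi:7.1f} Hz  actuator: {abs(ea):.4f}  sensing: {abs(es):.4f}")
```

In this toy, an actuator gain error appears almost fully below the unity-gain frequency and vanishes well above it, while a sensing error does the reverse, which is the pattern the argument above relies on.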

So -- that's where we'll start looking to both get the modeled error to match measured error, and to improve the measured error (assuming the measurement is right).

This usually doesn't work without adjusting the input offset. Also, you would want to test this with the IMC alone, since the AC-coupling is finicky. On the other hand, changing the gain after the servo is engaged is trivial.