Added Beam Rotation Sensor usage info on the BSC ISI Overview. The signals that produce the Active indication are necessary but not sufficient. A number of elements must be in place (turned on) to implement the BRS correction, but the correction will typically be enabled/disabled via the MATCH gain. This will eventually be controlled by the guardian.

For operators now: if someone is going to the End Stations and will be anywhere near the BRS, the BRS correction should be disabled, for now by notifying SEI, and otherwise by zeroing the gain on the Beam-line Match bank (see 1st attached shot). If the BRS box on the ISI OVERVIEW indicates Active, open the ST1 SENSCOR medm, then the translational MATCH medm, and zero the Beam-line gain: X for EndX, etc.

Seismometer Mass Centering, the need for which is identified by a scheduled FAMIS task result (see 26426 & 26418), must be performed with the seismometer out of the control loop. Bring up the BIO medm, accessible from the ISI OVERVIEW; see the 2nd attachment.

For the T240s, the individual BSC SEI Manager is taken to DAMPED. In the DAMPED state, the mass of the T240 needing centering is pushed by pressing the numbered (corner) button in the AutoZ area of the T240 Outputs group. The AutoZ widget should show engaged (going green); press again to disengage after at least 1 second. The U, V, W mass positions can be monitored in the T240 Inputs group to check the efficacy of the process; these numbers should get smaller.
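
As a rough illustration of the "numbers should get smaller" check, the sketch below compares U, V, W mass positions read before and after an AutoZ press. The function name, the dictionary layout and the sample readings are all hypothetical; they are not taken from the medm screens or from any real channel.

```python
def centering_improved(before, after):
    """Return True if every axis ended no farther from zero than it started.

    `before` and `after` map axis names ("U", "V", "W") to mass position
    readings. This layout is an assumption made for illustration only.
    """
    return all(abs(after[axis]) <= abs(before[axis]) for axis in ("U", "V", "W"))


# Made-up readings, only to show the call.
before = {"U": 5200.0, "V": -3100.0, "W": 800.0}
after = {"U": 300.0, "V": -150.0, "W": 90.0}
print(centering_improved(before, after))
```

The comparison uses absolute values because a mass can sit on either side of center.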

For the floor seismometers (STSs at the moment) doing the CPS sensor correction, the process is more involved, as the seismometer is correcting all chambers. All HAM chambers' Sensor Correction MATCH filter gains are zeroed. For BSCs, the Z correction is applied to HEPI while X & Y go to the ISI, so all of those must also be zeroed. There is a script for this built by TJ (alog 25936), repeated here:

thomas.shaffer@opsws10:/opt/rtcds/userapps/release/isi/h1/scripts$ ./Toggle_CS_Sensor_Correction.py -h
usage: Toggle_CS_Sensor_Correction.py [-h] ON_or_OFF

positional arguments:
  ON_or_OFF   1 or 0 to turn the gain ON or OFF respectively

optional arguments:
  -h, --help  show this help message and exit
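
The internals of TJ's script are not shown in this entry. The sketch below only mirrors the command-line interface seen in the help text and shows one plausible way to apply a single ON/OFF value across a list of gains. The channel names are hypothetical placeholders, the `write` callback stands in for whatever EPICS write the real script uses, and treating ON as a gain of 1.0 is an assumption.

```python
import argparse


def build_parser():
    """Parser matching the usage line in the log: one positional ON_or_OFF."""
    parser = argparse.ArgumentParser(prog="Toggle_CS_Sensor_Correction.py")
    parser.add_argument(
        "ON_or_OFF",
        type=int,
        choices=(0, 1),        # assumption: reject anything other than 0 or 1
        metavar="ON_or_OFF",   # keep the name in the usage line despite choices
        help="1 or 0 to turn the gain ON or OFF respectively",
    )
    return parser


def toggle(gain_channels, on_or_off, write):
    """Write 1.0 (ON) or 0.0 (OFF) to every channel via the supplied writer."""
    value = 1.0 if on_or_off else 0.0
    for channel in gain_channels:
        write(channel, value)
    return value


# Hypothetical placeholder names, NOT real H1 channels.
EXAMPLE_CHANNELS = ["HAM_MATCH_X_GAIN", "HAM_MATCH_Y_GAIN", "BSC_MATCH_Z_GAIN"]

# Equivalent to running the script with the argument 0.
args = build_parser().parse_args(["0"])

written = {}


def record(channel, value):
    """Stand-in writer that records values instead of touching EPICS."""
    written[channel] = value


toggle(EXAMPLE_CHANNELS, args.ON_or_OFF, record)
print(written)
```

Passing the writer in as a function keeps the sketch runnable away from the control room.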

Once the Sensor Correction is fully disengaged, the STS masses are centered by pressing the Auto Zero button in the STS-2 area of the Outputs group. This is a momentary button and does not have to be pressed again to disengage. The mass positions may also be monitored in the same area.

These instructions will be repeated in a FAMIS conditional task.

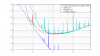

Here are the CoE parameters for the TCS motor controller.

Here is a snapshot of the rotation stage settings screens, as well as updated medm screens for the rotation stage and the readbacks, respectively.

When using the adjust feature, the busy flag of the rotation stage is no longer a good indicator of whether the laser power has reached its final value. The internal state of the laser power controller is now available in EPICS, as is a state_busy flag, which indicates that the power controller (rather than only the rotation stage controller) is busy.
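
A script that waits for the power to settle would therefore poll state_busy rather than the rotation stage busy flag. The following is a minimal sketch of such a wait loop. The `read_busy` callback is a hypothetical stand-in for an EPICS read of the state_busy flag, and the timeout and poll interval are arbitrary illustrative values.

```python
import time


def wait_until_idle(read_busy, timeout=30.0, poll=0.1, sleep=time.sleep):
    """Poll read_busy() until it returns a falsy value or the timeout expires.

    Returns True if the controller went idle, False on timeout.
    """
    waited = 0.0
    while read_busy():
        if waited >= timeout:
            return False
        sleep(poll)
        waited += poll
    return True


# Simulated flag: busy for three reads, then idle. No real sleeping.
readings = iter([1, 1, 1, 0])
print(wait_until_idle(lambda: next(readings), sleep=lambda seconds: None))
```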