TITLE: 10/24 Eve Shift: 2330-0500 UTC (1630-2200 PDT), all times posted in UTC

STATE of H1: Corrective Maintenance

INCOMING OPERATOR: Tony

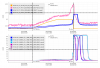

SHIFT SUMMARY: Microseism is still slowly trending up. I got past MAX_POWER twice and confirmed that the roll mode damping works at 62 W.

LOG: No log.

Lock#1

- We sat in ADJ_POWER at 50 W for a little over an hour while Rahul damped the roll mode, which seems to be on ETMX. We moved to LOWNOISE_COIL_DRIVERS in manual mode and later up to LOWNOISE_LENGTH_CONTROL, where ETMY started to saturate (it looked to be coming from SUSETMY DAC 1, channels 1, 2, and 3). We noticed that the DCPDs were growing as well and PIMON was showing some red. We then went back to ADJ_POWER and lowered the power to 45 W; the saturations stopped, but the PI kept growing until we lost lock. Lowering the power seems to have made the PI ring up even faster?

- I set the gain of the ETMY roll mode to zero in ISC_LOCK and reloaded it. The Thermalization GRD went into error when we reached LOWNOISE_LENGTH_CONTROL because the SRCL1 offset was not at its nominal value. I set the nominal back and reloaded the guardian, but that did not fix the error.

Lock#2

- 02:42 UTC Initial alignment (IA)

- 03:48 UTC I got back to ADJ_POWER and brought us down from 62 W to 40 W.

- 04:17 UTC Shortly after, at Sheila's suggestion, I brought us back up to 62 W and tried Rahul's new roll mode damping settings, which seemed to work at MAX_POWER. After 6 or 7 minutes we tried to go to LOWNOISE_LENGTH_CONTROL, but we lost lock shortly after, during TRANSITION_FROM_ETMX. Sheila accepted the filter changes for the new roll mode settings.

- 04:41 UTC Holding in DOWN