TITLE: 06/22 Owl Shift: 07:00-15:00 UTC (00:00-08:00 PDT), all times posted in UTC

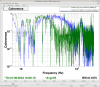

STATE of H1: Observing at 139 Mpc

CURRENT ENVIRONMENT:

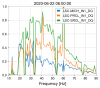

SEI_ENV state: CALM

Wind: 6mph Gusts, 4mph 5min avg

Primary useism: 0.01 μm/s

Secondary useism: 0.06 μm/s

QUICK SUMMARY:

Inherited H1, which had already been locked for about 2 hours.

10:11 UTC GRB-Short Candidate E412714 Verbal Alarm

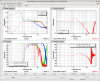

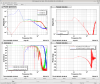

14:00 UTC Increase in SensMon Range:

Jenne called and we discussed making some changes to increase the sensitivity.

I used caget to read the current values of H1:LSC-SRCLFF1_GAIN and H1:LSC-SRCLFF1_TRAMP, then used caput to set the gain to 2.1.
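
For reference, the read-then-write sequence described above could be sketched in Python roughly as follows. This is an illustration only, not the exact commands that were run: the function names `change_gain` and `change_gain_live` are hypothetical, and the live variant assumes the pyepics package is installed and the channels are reachable from the workstation. The previous gain and ramp values are not hard-coded because they were not recorded in this entry.

```python
from typing import Callable, Dict

# Channel names taken from the log entry above.
GAIN_PV = "H1:LSC-SRCLFF1_GAIN"
TRAMP_PV = "H1:LSC-SRCLFF1_TRAMP"


def change_gain(new_gain: float,
                get: Callable[[str], float],
                put: Callable[[str, float], object]) -> Dict[str, float]:
    """Read the gain and ramp time, write the new gain, then read it back.

    `get` and `put` are passed in so the sequence can be exercised
    without a live EPICS connection (hypothetical helper, not site code).
    """
    gain_before = get(GAIN_PV)   # equivalent of: caget H1:LSC-SRCLFF1_GAIN
    tramp = get(TRAMP_PV)        # equivalent of: caget H1:LSC-SRCLFF1_TRAMP
    put(GAIN_PV, new_gain)       # equivalent of: caput H1:LSC-SRCLFF1_GAIN <value>
    gain_after = get(GAIN_PV)    # confirm the requested value was accepted
    return {"gain_before": gain_before,
            "tramp": tramp,
            "gain_after": gain_after}


def change_gain_live(new_gain: float) -> Dict[str, float]:
    """Same sequence against real channels; assumes pyepics is available."""
    from epics import caget, caput  # imported lazily on purpose
    return change_gain(new_gain, caget, caput)
```

Calling `change_gain_live(2.1)` from a control room workstation would mirror the change made here, and the returned dictionary keeps a record of the previous gain in case it needs to be reverted.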

After the change I saw a noticeable increase in SENSMON Range.

IFO Current Status : NOMINAL_LOW_NOISE & OBSERVING with a range of 140.6 Mpc

14:35 UTC Superevent Candidate S230622ba